Enterprise AI Assistants for Data Leaders: Architectures and Implementation

Enterprise AI assistants represent a significant opportunity for Chief Data Officers to reshape data operations, improve decision-making capabilities, and create new sources of business value. This comprehensive guide explores how CDOs can use custom GPTs, intelligent automation, and enterprise-grade AI assistants to transform their data organisations. From automated data analysis to intelligent reporting and predictive insights, we examine practical strategies for implementing AI assistants that scale with enterprise requirements while maintaining security, governance, and compliance standards.

The AI Assistant Opportunity for Data Leaders

The emergence of sophisticated AI assistants and custom GPT technologies gives Chief Data Officers new ways to transform how their organisations interact with data, generate insights, and make decisions. Unlike previous generations of business intelligence tools that required specialised technical skills, modern AI assistants can democratise data access while maintaining enterprise-grade security and governance standards.

Recent industry analysis indicates that organisations implementing enterprise AI assistants report a 40% improvement in data analysis productivity and a 60% reduction in time-to-insight for business stakeholders [1]. These improvements are particularly significant for data organisations that have struggled to scale their analytical capabilities to meet growing business demands while maintaining data quality and governance standards.

For CDOs, AI assistants represent more than just efficiency improvements. They offer the potential to fundamentally transform the relationship between business users and data, enabling self-service analytics capabilities that reduce bottlenecks while improving data literacy across the organisation. The technology also provides opportunities to automate routine data operations, freeing data professionals to focus on higher-value strategic initiatives.

However, implementing enterprise AI assistants requires careful consideration of technical architecture, governance frameworks, and organisational change management. Unlike consumer AI tools, enterprise implementations must address complex requirements around data security, regulatory compliance, integration with existing systems, and scalability to support large user populations.

This guide provides CDOs with comprehensive frameworks for evaluating, implementing, and scaling enterprise AI assistant capabilities. We examine the technical considerations, governance requirements, and organisational strategies necessary for successful implementation while exploring the transformative potential of these technologies for data-driven organisations.

The stakes for getting AI assistants right are significant. Organisations that successfully implement these capabilities can achieve sustainable competitive advantages through improved decision-making, enhanced operational efficiency, and accelerated innovation. Conversely, those that fail to capitalise on these opportunities risk falling behind competitors who use AI assistants to improve their data operations.

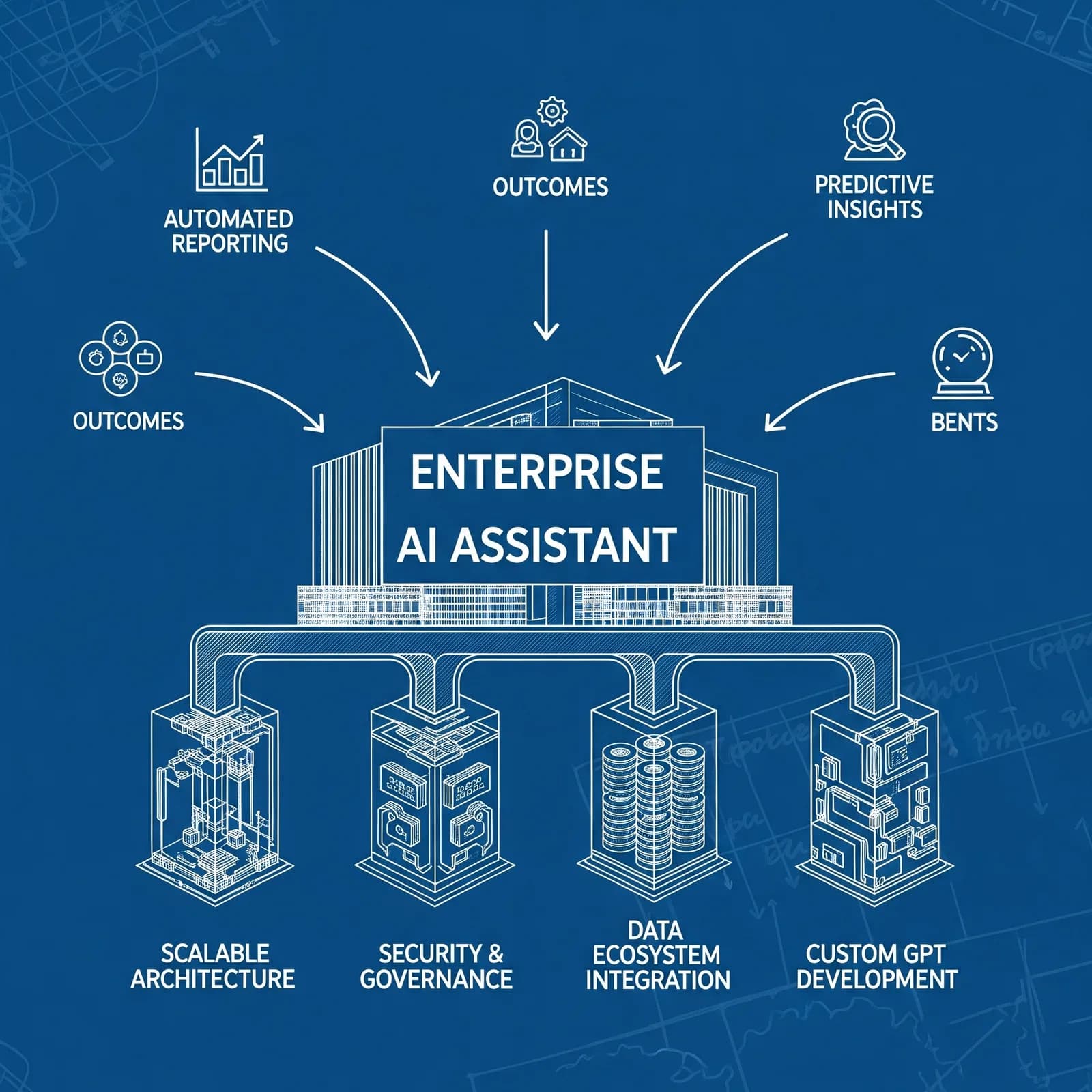

Understanding Enterprise AI Assistant Architectures

Enterprise AI assistants require sophisticated architectures that balance user accessibility with enterprise requirements for security, scalability, and governance. Unlike consumer AI applications, enterprise implementations must integrate with complex data ecosystems while maintaining performance, reliability, and compliance standards that support mission-critical business operations.

Technical Architecture Considerations

The foundation of successful enterprise AI assistant implementations lies in robust technical architectures that can handle the scale, complexity, and security requirements of large organisations. These architectures must support multiple use cases, user populations, and data sources while maintaining consistent performance and reliability.

Multi-Tenant Architecture Design is essential for enterprise AI assistants that serve diverse user populations with different access rights, data requirements, and security constraints. The architecture must provide isolation between different user groups while enabling efficient resource utilisation and centralised management capabilities.

Effective multi-tenancy requires sophisticated identity and access management systems that can authenticate users, authorise access to specific data sources and capabilities, and maintain audit trails of all interactions. These systems must integrate with existing enterprise identity providers while supporting fine-grained access controls that align with organisational data governance policies.

The architecture must also support customisation capabilities that allow different user groups to access tailored AI assistant experiences while maintaining centralised management and governance. This customisation might include different user interfaces, specialised knowledge bases, or custom analytical capabilities that align with specific business functions or roles.

Scalable Infrastructure and Performance Optimisation become critical as AI assistant usage grows across the enterprise. The infrastructure must handle varying workloads, from simple query responses to complex analytical tasks, while maintaining consistent response times and availability standards.

Cloud-native architectures provide the scalability and flexibility necessary for enterprise AI assistants, enabling automatic scaling based on demand patterns and providing access to specialised AI services and capabilities. However, these architectures must be designed with security controls and data residency constraints that satisfy enterprise policies and regulatory requirements.

Performance optimisation requires careful consideration of both computational resources and data access patterns. AI assistants that provide real-time insights must have optimised access to frequently queried data sources, while more complex analytical tasks may use batch processing capabilities to manage resource utilisation effectively.

Integration with Enterprise Data Ecosystems represents one of the most complex aspects of enterprise AI assistant implementation. These systems must connect with diverse data sources, including data warehouses, operational databases, cloud storage systems, and external data providers, while maintaining data quality and consistency standards.

The integration architecture must support both real-time and batch data access patterns, enabling AI assistants to provide immediate responses to simple queries while supporting complex analytical tasks that require processing large datasets. This dual-mode capability requires sophisticated data pipeline architectures that can optimise for both latency and throughput requirements.
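One way to picture the dual-mode pattern is a small router that sends cheap lookups down a low-latency path and defers heavy scans to a batch queue. The sketch below is illustrative only: the `Query` type, the `estimated_rows` field, and the one-million-row cut-off are assumptions made for the example, not values taken from any particular platform.

```python
from dataclasses import dataclass

# Hypothetical cut-off: queries expected to scan more rows than this go to batch.
BATCH_ROW_THRESHOLD = 1_000_000


@dataclass(frozen=True)
class Query:
    text: str
    estimated_rows: int  # assumed to come from the warehouse's query planner


def route(query: Query, threshold: int = BATCH_ROW_THRESHOLD) -> str:
    """Return "realtime" for small scans and "batch" for large ones."""
    if query.estimated_rows < 0:
        raise ValueError("estimated_rows must be non-negative")
    return "batch" if query.estimated_rows > threshold else "realtime"


lookup = Query("revenue for order 1042", estimated_rows=1)
trend = Query("five-year churn by segment", estimated_rows=250_000_000)
print(route(lookup))  # realtime
print(route(trend))   # batch
```

In practice the routing signal might also include concurrency, cost budgets, or freshness requirements; a single row-count threshold is simply the easiest rule to reason about.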

Data integration must also address data quality and governance requirements, ensuring that AI assistants access accurate, up-to-date information while maintaining compliance with data lineage, privacy, and security policies. This requires integration with existing data governance platforms and implementation of automated data quality monitoring capabilities.

Custom GPT Development and Implementation

Custom GPTs represent a powerful approach for creating specialised AI assistants that understand organisational context, terminology, and business processes. For CDOs, custom GPTs offer the opportunity to build AI assistants that can provide sophisticated analytical capabilities while maintaining alignment with organisational data standards and governance requirements.

Design and Development Frameworks

Creating effective custom GPTs requires systematic approaches to design, development, and deployment that ensure these systems meet enterprise requirements while providing valuable capabilities to business users. The development process must balance technical sophistication with user accessibility and organisational alignment.

Knowledge Base Development and Curation forms the foundation of effective custom GPTs, requiring careful selection and organisation of information that enables the AI assistant to provide accurate, relevant, and contextually appropriate responses. For data organisations, this knowledge base must include technical documentation, business definitions, analytical methodologies, and organisational policies.

The knowledge base development process should include systematic review and validation of all included information to ensure accuracy and consistency. This review process must involve subject matter experts from across the organisation who can validate technical accuracy and business relevance of the information included in the knowledge base.

Ongoing knowledge base maintenance is crucial for ensuring that custom GPTs remain current and accurate as business requirements, data sources, and analytical methodologies evolve. This maintenance requires established processes for updating information, validating changes, and ensuring that updates don’t introduce inconsistencies or errors.

Training and Fine-Tuning Strategies must be designed to optimise custom GPT performance for specific organisational use cases and requirements. This optimisation requires understanding both the technical capabilities of the underlying AI models and the specific needs and preferences of the intended user population.

Fine-tuning processes should include comprehensive testing with representative use cases and user scenarios to ensure that the custom GPT provides accurate, helpful, and contextually appropriate responses. This testing should include both technical validation of analytical capabilities and user experience evaluation to ensure that the system meets usability requirements.

The training process must also address potential biases and limitations in the underlying AI models, implementing safeguards and validation procedures that ensure fair and accurate responses across different user groups and use cases. This is particularly important for data applications where biased or inaccurate responses could lead to poor business decisions.

Integration with Enterprise Systems requires sophisticated approaches to connecting custom GPTs with existing data infrastructure, analytical tools, and business applications. These integrations must maintain security and governance standards while providing smooth user experiences that don’t require technical expertise.

API development and management become crucial for enabling custom GPTs to access enterprise data sources and analytical capabilities. These APIs must provide secure, efficient access to data while implementing appropriate access controls and audit capabilities that align with enterprise governance requirements.

The integration architecture must also support workflow automation capabilities that enable custom GPTs to trigger business processes, generate reports, or initiate analytical tasks based on user requests. These automation capabilities can significantly enhance the value of AI assistants by enabling them to take action based on insights rather than simply providing information.

Data Operations Automation and Intelligence

AI assistants offer significant opportunities to automate routine data operations while providing intelligent insights that improve the efficiency and effectiveness of data management processes. For CDOs, these automation capabilities can free data professionals to focus on strategic initiatives while ensuring that operational tasks are completed consistently and accurately.

Automated Data Quality Management

Data quality represents one of the most time-consuming and critical aspects of data operations, requiring continuous monitoring, validation, and remediation activities. AI assistants can automate many of these tasks while providing intelligent insights that help data teams identify and address quality issues more effectively.

Intelligent Data Profiling and Anomaly Detection capabilities enable AI assistants to continuously monitor data sources for quality issues, inconsistencies, and anomalies that could impact analytical accuracy or business operations. These capabilities can identify issues much faster than manual processes while providing detailed insights about the nature and potential impact of detected problems.

The anomaly detection algorithms must be trained on historical data patterns and business context to minimise false positives while ensuring that genuine issues are identified quickly. This training requires collaboration between data scientists and business stakeholders to define appropriate thresholds and validation criteria for different types of data and use cases.
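As a minimal illustration of learning a threshold from historical patterns, the sketch below flags new values that sit too many standard deviations from the historical mean. The z-score method, the `find_anomalies` name, and the default threshold of 3.0 are assumptions chosen for simplicity; a production system would more likely account for seasonality and trend.

```python
from statistics import mean, stdev


def find_anomalies(history, new_values, z_threshold=3.0):
    """Flag new values more than z_threshold standard deviations from the historical mean."""
    if len(history) < 2:
        raise ValueError("need at least two historical points")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: anything that differs is anomalous.
        return [v for v in new_values if v != mu]
    return [v for v in new_values if abs(v - mu) / sigma > z_threshold]


# Hypothetical daily row counts for one feed, followed by three new loads.
daily_row_counts = [1000, 1010, 990, 1005, 995, 1002, 998]
print(find_anomalies(daily_row_counts, [1003, 400, 1600]))  # [400, 1600]
```

The `z_threshold` parameter is where the collaboration described above shows up: a lower value catches more genuine issues at the price of more false positives, so it is a business decision as much as a statistical one.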

AI assistants can also provide intelligent recommendations for addressing detected data quality issues, suggesting specific remediation actions based on the type of issue, its potential impact, and available remediation options. These recommendations can significantly reduce the time required to address data quality problems while ensuring that remediation actions are appropriate and effective.

Automated Data Lineage and Impact Analysis capabilities enable AI assistants to track data flows throughout the enterprise and provide intelligent insights about the potential impact of changes to data sources, processes, or systems. This capability is crucial for maintaining data governance and ensuring that changes don’t have unintended consequences.

The lineage tracking system must integrate with existing data infrastructure to provide comprehensive visibility into data flows, transformations, and dependencies. This integration requires sophisticated metadata management capabilities that can capture and maintain accurate information about data relationships and dependencies.

AI assistants can use lineage information to provide intelligent impact analysis when changes are proposed to data sources or processes. This analysis can identify all downstream systems and processes that could be affected by proposed changes, enabling data teams to plan and execute changes more effectively while minimising disruption to business operations.
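Impact analysis of this kind is, at its core, a graph traversal over lineage metadata. The sketch below assumes lineage is available as a mapping from each dataset to its direct downstream consumers; the dataset names and the `downstream_impact` function are hypothetical.

```python
from collections import deque

# Hypothetical lineage graph: each dataset maps to its direct downstream consumers.
LINEAGE = {
    "crm.customers": ["staging.customers"],
    "staging.customers": ["marts.customer_360", "marts.churn_features"],
    "marts.churn_features": ["reports.churn_dashboard"],
    "marts.customer_360": [],
    "reports.churn_dashboard": [],
}


def downstream_impact(graph, changed):
    """Return every asset reachable downstream of `changed`, in breadth-first order."""
    seen = {changed}
    queue = deque([changed])
    impacted = []
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:  # guards against cycles and duplicate paths
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


print(downstream_impact(LINEAGE, "staging.customers"))
# ['marts.customer_360', 'marts.churn_features', 'reports.churn_dashboard']
```

Breadth-first order lists the nearest dependants first, which tends to match how a data team would sequence its checks after a change.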

Intelligent Reporting and Analytics Automation

Traditional reporting and analytics processes often require significant manual effort to collect data, perform analysis, and generate insights. AI assistants can automate many of these tasks while providing intelligent capabilities that enhance the quality and relevance of generated reports and analyses.

Automated Report Generation and Distribution capabilities enable AI assistants to generate routine reports automatically based on predefined templates, data sources, and distribution schedules. These capabilities can significantly reduce the manual effort required for routine reporting while ensuring that reports are generated consistently and accurately.

The report generation process must include intelligent data validation and quality checking to ensure that generated reports are accurate and complete. This validation should include checks for data completeness, consistency, and accuracy, with automatic alerts when issues are detected that could affect report quality.
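The completeness and consistency checks described above can be as simple as a function that runs before a report is distributed and returns a list of problems. This is a sketch under assumed conventions: rows are dictionaries, and the field names (`region`, `new`, `renewal`, `total`) are invented for the example.

```python
def validate_report_rows(rows, required_fields, total_field=None, part_fields=()):
    """Return a list of human-readable issues; an empty list means the checks passed."""
    if not rows:
        return ["report has no rows"]
    issues = []
    for i, row in enumerate(rows):
        # Completeness: every required field must be present and non-null.
        for name in required_fields:
            if row.get(name) is None:
                issues.append(f"row {i}: missing {name}")
        # Consistency: the parts should add up to the stated total.
        if total_field and part_fields:
            values = [row.get(name) for name in (total_field, *part_fields)]
            if None not in values and abs(values[0] - sum(values[1:])) > 1e-9:
                issues.append(f"row {i}: {total_field} does not equal sum of parts")
    return issues


rows = [
    {"region": "EMEA", "new": 40, "renewal": 60, "total": 100},
    {"region": None, "new": 10, "renewal": 5, "total": 20},
]
issues = validate_report_rows(
    rows, ["region", "total"], total_field="total", part_fields=("new", "renewal")
)
for issue in issues:
    print("ALERT:", issue)
```

A non-empty result would typically block distribution and notify the report owner rather than silently shipping a flawed report.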

AI assistants can also provide intelligent customisation capabilities that tailor reports to specific audiences or use cases based on user preferences, role requirements, or business context. This customisation can improve the relevance and usefulness of generated reports while reducing the manual effort required to create specialised versions.

Predictive Analytics and Forecasting Automation enables AI assistants to automatically generate forecasts and predictive insights based on historical data patterns and business context. These capabilities can provide valuable insights for business planning and decision-making while reducing the specialised expertise required to develop and maintain predictive models.

The predictive analytics capabilities must include appropriate model validation and performance monitoring to ensure that generated forecasts are accurate and reliable. This monitoring should include automatic model retraining when performance degrades and alerts when forecast accuracy falls below acceptable thresholds.
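A simple form of this monitoring compares recent forecasts with actuals and raises a flag when error crosses an agreed limit. The sketch below uses mean absolute percentage error (MAPE) as the accuracy measure; the choice of MAPE, the function names, and the 10% threshold are assumptions for illustration.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, as a fraction (0.05 means 5%)."""
    if len(actuals) != len(forecasts) or not actuals:
        raise ValueError("inputs must be non-empty and the same length")
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return sum(abs((a - f) / a) for a, f in zip(actuals, forecasts)) / len(actuals)


def needs_retraining(actuals, forecasts, max_mape=0.10):
    """True when recent forecast error exceeds the agreed threshold."""
    return mape(actuals, forecasts) > max_mape


recent_actuals = [100, 200, 400]
recent_forecasts = [110, 180, 300]
print(round(mape(recent_actuals, recent_forecasts), 4))    # 0.15
print(needs_retraining(recent_actuals, recent_forecasts))  # True
```

MAPE breaks down when actual values are zero or near zero, so series with that property would need a different measure, such as mean absolute error.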

AI assistants can also provide intelligent interpretation of predictive results, explaining the factors driving forecasts and highlighting potential risks or opportunities identified through the analysis. This interpretation capability is crucial for enabling business users to understand and act on predictive insights without requiring specialised analytical expertise.

Security, Governance, and Compliance Frameworks

Enterprise AI assistants must operate within comprehensive security, governance, and compliance frameworks that protect sensitive data while enabling valuable business capabilities. For CDOs, establishing these frameworks is crucial for ensuring that AI assistant implementations meet enterprise standards while providing the flexibility necessary for innovation and growth.

Data Security and Privacy Protection

Protecting sensitive data represents one of the most critical requirements for enterprise AI assistants, requiring comprehensive security measures that address both technical vulnerabilities and operational risks. These security measures must be designed to protect data throughout the entire AI assistant lifecycle, from training and deployment to ongoing operations and maintenance.

Encryption and Access Control Systems must provide comprehensive protection for data at rest, in transit, and in use by AI assistant systems. This protection requires sophisticated encryption technologies that can secure data while maintaining the performance necessary for real-time AI assistant operations.

Access control systems must implement fine-grained permissions that align with organisational data governance policies and regulatory requirements. These systems should support role-based access controls, attribute-based access controls, and dynamic access policies that can adapt to changing business requirements and risk profiles.

The access control framework must also include comprehensive audit capabilities that track all data access and usage by AI assistant systems. These audit trails are essential for compliance reporting, security monitoring, and incident investigation, providing detailed records of how data is accessed and used throughout the organisation.
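To make the combination of role-based rules, attribute-based rules, and audit trails concrete, here is a minimal sketch. The role names, dataset names, and the region-matching rule are hypothetical; a real deployment would delegate these decisions to the enterprise identity provider and policy engine rather than an in-memory dictionary.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: role -> datasets that role may query.
ROLE_GRANTS = {
    "finance_analyst": {"finance.ledger", "finance.budget"},
    "marketing_analyst": {"marketing.campaigns"},
}


@dataclass
class AccessController:
    grants: dict
    audit_log: list = field(default_factory=list)

    def check(self, user, role, dataset, user_region, data_region):
        """Allow only if the role is granted the dataset AND the regions match."""
        role_ok = dataset in self.grants.get(role, set())  # role-based rule
        region_ok = user_region == data_region             # attribute-based rule
        allowed = role_ok and region_ok
        # Record every decision, allowed or denied, for later audit.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user, "role": role, "dataset": dataset, "allowed": allowed,
        })
        return allowed


ac = AccessController(ROLE_GRANTS)
print(ac.check("ana", "finance_analyst", "finance.ledger", "EU", "EU"))   # True
print(ac.check("ana", "finance_analyst", "finance.ledger", "US", "EU"))   # False
print(ac.check("bo", "marketing_analyst", "finance.ledger", "EU", "EU"))  # False
print(len(ac.audit_log))  # 3
```

Logging denials as well as grants matters for incident investigation, since repeated denied requests are often the first sign of a misconfigured role or a probing attempt.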

Data Anonymisation and Privacy Preservation techniques must be implemented to protect sensitive personal information while enabling AI assistants to provide valuable analytical capabilities. These techniques must balance privacy protection with analytical utility, ensuring that AI assistants can generate meaningful insights without exposing sensitive information.

Differential privacy and other privacy-preserving technologies can enable AI assistants to work with sensitive data while providing mathematical guarantees about privacy protection. However, implementing these technologies requires careful consideration of privacy-utility trade-offs and may require modifications to analytical approaches and algorithms.
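The privacy-utility trade-off is easiest to see in the Laplace mechanism applied to a counting query. Adding or removing one person changes a count by at most 1, so noise drawn from a Laplace distribution with scale 1/ε gives ε-differential privacy for that single query. The sketch below is a teaching example with hypothetical names; naive floating-point implementations have known weaknesses, so production systems should rely on a vetted library.

```python
import random


def private_count(true_count, epsilon, rng=None):
    """Return a count with Laplace noise calibrated to sensitivity 1."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    scale = 1.0 / epsilon  # a counting query has sensitivity 1
    # The difference of two i.i.d. exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that same scale.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


# Smaller epsilon means stronger privacy and noisier answers.
print(private_count(1000, epsilon=0.5, rng=random.Random(7)))
print(private_count(1000, epsilon=5.0, rng=random.Random(7)))
```

Each released answer consumes privacy budget, so an assistant that answers many queries over the same data needs to track cumulative ε rather than treating every query in isolation.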

Data masking and tokenisation techniques can provide additional privacy protection by replacing sensitive data elements with non-sensitive equivalents that preserve analytical relationships while protecting individual privacy. These techniques must be implemented consistently across all AI assistant capabilities to ensure comprehensive privacy protection.
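The phrase "preserve analytical relationships" has a concrete meaning: the same input must always yield the same token, so joins and group-bys still work on tokenised columns. One common way to get that property is a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library; the `tok_` prefix, the 16-character truncation, and the demo key are assumptions for the example.

```python
import hashlib
import hmac


def tokenise(value, secret_key):
    """Map a sensitive value to a stable, non-reversible token using HMAC-SHA256."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]  # truncated for readability; keep more in practice


def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not local or not domain:
        raise ValueError("not an email address")
    return local[0] + "*" * (len(local) - 1) + "@" + domain


KEY = b"demo-key-do-not-use-in-production"  # hypothetical; load from a secrets manager
print(tokenise("alice@example.com", KEY) == tokenise("alice@example.com", KEY))  # True
print(mask_email("alice@example.com"))  # a****@example.com
```

Tokenisation suits join keys because it is deterministic, while masking suits display fields where a human needs a recognisable hint. Consistency across systems depends on every system using the same key, which is why key management is part of the design.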

Regulatory Compliance and Audit Requirements

Enterprise AI assistants must comply with various regulatory requirements that govern data usage, privacy protection, and algorithmic decision-making. These compliance requirements vary by industry and jurisdiction but typically include comprehensive documentation, audit capabilities, and governance processes.

Algorithmic Transparency and Explainability requirements are becoming increasingly important as regulators focus on ensuring that AI systems make fair and transparent decisions. AI assistants must be designed to provide explanations for their recommendations and insights, enabling users to understand how conclusions were reached and validate their appropriateness.

The explainability framework must provide different levels of explanation for different types of users and use cases. Technical users may require detailed information about algorithms and data sources, while business users may need high-level explanations that focus on business logic and decision factors.

Documentation requirements must include comprehensive records of AI assistant development, training, validation, and deployment processes. This documentation is essential for regulatory compliance and audit purposes, providing evidence that AI systems have been developed and operated according to appropriate standards and procedures.

Bias Detection and Fairness Monitoring capabilities must be implemented to ensure that AI assistants provide fair and unbiased recommendations and insights across different user groups and use cases. These capabilities require ongoing monitoring and validation to identify and address potential biases that could lead to unfair or discriminatory outcomes.

The bias detection system must include comprehensive testing across different demographic groups, use cases, and decision scenarios to identify potential sources of bias or unfairness. This testing should be conducted regularly and whenever AI assistant capabilities are modified or updated.
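One simple test that can run on every release is a comparison of favourable-outcome rates across groups, sometimes called the demographic parity gap. It is only one of several fairness measures and is shown here as a sketch; the group labels, the sample data, and the function names are invented for the example.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, favourable) pairs; returns favourable rate per group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favourable[group] += 1 if outcome else 0
    return {g: favourable[g] / totals[g] for g in totals}


def parity_gap(records):
    """Largest difference in favourable-outcome rate between any two groups."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical outcomes: group A is favoured 8 times in 10, group B 5 times in 10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
print(selection_rates(records))        # {'A': 0.8, 'B': 0.5}
print(round(parity_gap(records), 2))   # 0.3
```

What size of gap should trigger remediation is a policy question for the organisation; the code only makes the gap visible and repeatable.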

Remediation procedures must be established to address identified biases or fairness issues quickly and effectively. These procedures should include both technical remediation approaches and process changes that can prevent similar issues from occurring in the future.

Implementation Strategies and Best Practices

Successfully implementing enterprise AI assistants requires comprehensive strategies that address technical, organisational, and operational considerations. CDOs must develop implementation approaches that balance the need for rapid value delivery with the requirements for enterprise-grade security, governance, and scalability.

Phased Deployment Approaches

Enterprise AI assistant implementations should follow phased approaches that enable organisations to build capabilities incrementally while managing risks and learning from experience. These phased approaches allow for validation of technical architectures, user adoption strategies, and business value propositions before making larger investments in full-scale deployments.

Pilot Program Development and Execution should focus on specific use cases and user populations that can demonstrate clear business value while providing learning opportunities for broader deployment. Pilot programs should be designed with clear success criteria, defined timelines, and comprehensive measurement frameworks that enable evaluation of both technical performance and business impact.

The pilot program should include representative use cases that span different types of data analysis, user populations, and business functions. This diversity ensures that the pilot provides comprehensive insights about AI assistant capabilities and limitations while identifying potential challenges that must be addressed in broader deployments.

Pilot program execution should include comprehensive monitoring and evaluation capabilities that track both technical performance metrics and user experience indicators. This monitoring provides the data necessary to optimise AI assistant capabilities and identify areas that require improvement before broader deployment.

Scaling and Expansion Strategies must be designed to support growth in both user populations and use case complexity while maintaining performance, security, and governance standards. These strategies should address infrastructure scaling, user onboarding, and capability expansion in coordinated approaches that minimise disruption to existing operations.

Infrastructure scaling must be planned to support anticipated growth in user populations and usage patterns while maintaining cost efficiency and performance standards. This planning should include capacity modelling, performance testing, and cost optimisation strategies that ensure sustainable growth.

User onboarding strategies must be designed to support rapid adoption while ensuring that new users receive appropriate training and support. These strategies should include self-service onboarding capabilities, comprehensive documentation, and support resources that enable users to become productive quickly.

Change Management and User Adoption

Successful AI assistant implementations require comprehensive change management strategies that address both technical and cultural aspects of organisational transformation. These strategies must build user confidence in AI assistant capabilities while addressing concerns about job displacement, data security, and system reliability.

Training and Support Programs must be designed to build user capabilities for working effectively with AI assistants while addressing concerns and resistance that may impede adoption. These programs should include both technical training on AI assistant capabilities and broader education about AI technologies and their business applications.

The training program should be tailored to different user populations and skill levels, providing appropriate depth and focus for different roles and responsibilities. Technical users may require detailed training on advanced capabilities, while business users may need practical guidance on using AI assistants for common tasks.

Ongoing support capabilities must be established to help users resolve issues, learn new capabilities, and optimise their use of AI assistant technologies. These support capabilities should include both self-service resources and human support options that can address complex issues and provide personalised guidance.

Performance Monitoring and Optimisation frameworks must be implemented to ensure that AI assistant implementations continue to meet user needs and business requirements as they scale and evolve. These frameworks should track both technical performance metrics and business impact indicators to provide comprehensive insights about system effectiveness.

User feedback collection and analysis capabilities are essential for identifying opportunities to improve AI assistant capabilities and user experiences. This feedback should be collected through multiple channels and analysed systematically to identify patterns and trends that inform optimisation efforts.

Continuous improvement processes should be established to implement optimisations and enhancements based on performance monitoring and user feedback. These processes should include regular review cycles, prioritisation frameworks, and implementation procedures that ensure continuous evolution of AI assistant capabilities.

Measuring Success and ROI

Demonstrating the value of enterprise AI assistant investments requires comprehensive measurement frameworks that capture both quantitative benefits and qualitative improvements in organisational capabilities. CDOs must establish metrics and measurement approaches that align with business objectives while providing insights for ongoing optimisation and improvement.

Key Performance Indicators and Metrics

Measuring AI assistant success requires balanced scorecards that include technical performance metrics, user adoption indicators, and business impact measures. These metrics must be tracked consistently over time to identify trends, validate investment decisions, and guide optimisation efforts.

Technical Performance Metrics should track system reliability, response times, accuracy, and scalability to ensure that AI assistants meet enterprise performance standards. These metrics provide the foundation for user satisfaction and business value creation, as poor technical performance can undermine user confidence and limit adoption.

System availability and reliability metrics should track uptime, error rates, and performance consistency across different usage patterns and user populations. These metrics are crucial for enterprise applications that support mission-critical business processes and decision-making activities.

Response time and throughput metrics should measure how quickly AI assistants can process different types of requests and handle varying load patterns. These metrics are important for user experience and can significantly impact adoption rates and user satisfaction.
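Averages hide the slow requests that users remember, so response time is usually reported as percentiles alongside availability. The sketch below computes both from raw measurements; the nearest-rank percentile definition and the sample figures are assumptions for illustration.

```python
import math


def availability(total_seconds, downtime_seconds):
    """Fraction of the period the service was up."""
    return (total_seconds - downtime_seconds) / total_seconds


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must be non-empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical response times in milliseconds for ten requests.
latencies_ms = [120, 135, 150, 160, 180, 200, 240, 300, 450, 2100]
print(percentile(latencies_ms, 50))  # 180
print(percentile(latencies_ms, 95))  # 2100

# 43 minutes of downtime in a 30-day month.
print(round(availability(30 * 24 * 3600, 43 * 60), 4))  # 0.999
```

In this sample the median looks healthy at 180 ms while the 95th percentile is over two seconds, which is exactly the kind of gap that erodes user confidence without moving the average much.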

User Adoption and Engagement Metrics should track how effectively AI assistants are being adopted across the organisation and how actively they are being used for different types of tasks. These metrics provide insights about user satisfaction, capability utilisation, and potential areas for improvement.

Active user counts and usage frequency metrics should track how many users are regularly engaging with AI assistants and how often they use these capabilities. These metrics provide indicators of user satisfaction and system value while identifying user populations that may need additional support or training.
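Active user counts and usage frequency can be derived directly from an interaction log. The sketch below assumes the log is a list of `(user, date)` pairs, which is a simplification; the user names, the dates, and the 30-day window are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def active_users(events, as_of, window_days):
    """Distinct users with at least one event in the window ending on `as_of` (inclusive)."""
    start = as_of - timedelta(days=window_days - 1)
    return {user for user, day in events if start <= day <= as_of}


def events_per_user(events):
    """Number of logged interactions per user."""
    return Counter(user for user, _ in events)


events = [
    ("ana", date(2024, 3, 1)), ("ana", date(2024, 3, 28)),
    ("bo", date(2024, 3, 28)), ("dee", date(2024, 3, 5)),
    ("cy", date(2024, 2, 10)),
]
today = date(2024, 3, 28)
daily = len(active_users(events, today, 1))
monthly = len(active_users(events, today, 30))
print(daily, monthly)                 # 2 3
print(events_per_user(events)["ana"])  # 2
```

Users who appear in the log but fall outside the recent window, like `cy` here, are the population worth targeting with additional support or training.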

Feature utilisation metrics should track which AI assistant capabilities are being used most frequently and which may be underutilised. This information can guide training programs, user interface improvements, and capability development priorities.

Business Impact and Value Creation Measurement

Demonstrating business value from AI assistant investments requires measurement approaches that connect system usage to business outcomes and value creation. These measurements must account for both direct benefits and indirect value creation that may be difficult to quantify precisely.

Productivity and Efficiency Improvements should measure how AI assistants reduce the time and effort required for data analysis, reporting, and decision-making activities. These improvements can be measured through time studies, process analysis, and user surveys that capture changes in work patterns and productivity.

Time-to-insight metrics should track how quickly users can obtain answers to business questions and generate analytical insights using AI assistants compared to traditional approaches. These metrics are particularly important for demonstrating the value of self-service analytics capabilities.

Process automation benefits should measure the reduction in manual effort required for routine data operations and reporting activities. These benefits can be quantified through process analysis and resource utilisation tracking that compares pre- and post-implementation operational requirements.

Decision Quality and Business Outcome Improvements represent more complex but potentially more valuable benefits of AI assistant implementations. These improvements may include better decision-making, improved business outcomes, and enhanced competitive positioning that result from improved access to data and analytical insights.

Decision speed improvements should track how AI assistants enable faster decision-making by providing rapid access to relevant data and analytical insights. These improvements can be measured through decision cycle time analysis and stakeholder feedback about decision-making processes.

Business outcome improvements may include revenue growth, cost reductions, risk mitigation, and customer satisfaction improvements that result from better data-driven decision-making enabled by AI assistants. These outcomes may require longer-term tracking and sophisticated attribution analysis to connect AI assistant usage to business results.

Frequently Asked Questions

Q: How do we ensure enterprise AI assistants maintain data security while providing broad access to insights?

A: Implement comprehensive security frameworks that include encryption, fine-grained access controls, and audit capabilities. Use data anonymisation and privacy-preserving techniques where appropriate. Establish clear governance policies that define what data can be accessed by different user groups and implement technical controls that enforce these policies automatically.
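To illustrate what automatic enforcement of such a policy might look like, the sketch below checks a user's groups against a policy table before any data is returned and records every decision for audit. The group names, dataset names, and the `authorize` function are hypothetical; a production system would normally rely on the access controls of its data platform and on durable audit storage.

```python
# Hypothetical policy: user group -> datasets that group may query.
POLICY = {
    "finance_analysts": {"revenue", "costs"},
    "marketing": {"campaign_results"},
}

# In-memory stand-in for an audit trail (assumption: real systems persist this).
audit_log = []


def authorize(user, groups, dataset):
    """Allow access only if one of the user's groups is granted the dataset."""
    allowed = any(dataset in POLICY.get(group, set()) for group in groups)
    audit_log.append({"user": user, "dataset": dataset, "allowed": allowed})
    return allowed


print(authorize("alice", ["marketing"], "campaign_results"))  # True
print(authorize("alice", ["marketing"], "revenue"))  # False
```

The check denies by default: a group or dataset missing from the policy table never grants access, and denied requests are logged alongside permitted ones.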

Q: What’s the difference between custom GPTs and commercial enterprise AI assistant platforms?

A: Custom GPTs offer greater customisation and control over the AI assistant experience but require more development effort and technical expertise. Commercial platforms provide faster implementation and professional support but may offer less customisation flexibility. Consider your organisation’s technical capabilities, customisation requirements, and resource constraints when making this decision.

Q: How do we measure ROI for AI assistant implementations when benefits are often intangible?

A: Use balanced measurement approaches that include both quantitative metrics (time savings, cost reductions, productivity improvements) and qualitative indicators (user satisfaction, decision quality, innovation capabilities). Track leading indicators such as user adoption and engagement alongside lagging indicators such as business outcomes. Consider long-term value creation rather than focusing solely on short-term returns.
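For the quantitative side, a simple starting point is return on investment together with a payback period. The sketch below uses hypothetical function names and invented figures; it covers only the measurable portion of the benefits and says nothing about the qualitative indicators.

```python
def simple_roi(total_benefits, total_costs):
    """Return ROI as a fraction: (benefits - costs) / costs."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs


def payback_months(upfront_cost, monthly_net_benefit):
    """Return months needed to recover the upfront cost, or None if never."""
    if monthly_net_benefit <= 0:
        return None
    return upfront_cost / monthly_net_benefit


# Hypothetical figures for illustration only.
roi = simple_roi(total_benefits=450_000, total_costs=300_000)
months = payback_months(upfront_cost=300_000, monthly_net_benefit=25_000)
print(f"ROI: {roi:.0%}, payback: {months:.0f} months")
```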

Q: What are the key considerations for integrating AI assistants with existing data infrastructure?

A: Focus on API design and data access patterns that can scale with usage growth. Ensure integration architectures support both real-time and batch processing requirements. Implement comprehensive data governance and quality controls. Plan for data lineage tracking and impact analysis capabilities. Consider performance optimisation and caching strategies for frequently accessed data.
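As one example of a caching strategy for frequently accessed data, the sketch below implements a small time-to-live (TTL) cache that reuses a query result until it is older than a configured number of seconds. The `TTLCache` class, the cache key, and the sample query result are hypothetical; this is a single-process illustration, not a substitute for a shared cache service.

```python
import time


class TTLCache:
    """Cache values per key and reload them once they exceed the TTL."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._store = {}  # key -> (time stored, value)

    def get_or_load(self, key, loader):
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # still fresh: skip the expensive query
        value = loader()
        self._store[key] = (now, value)
        return value


query_calls = []


def run_query():
    """Stand-in for an expensive warehouse query (hypothetical result)."""
    query_calls.append(1)
    return {"region": "EMEA", "revenue": 1250}


cache = TTLCache(ttl_seconds=300)
cache.get_or_load("revenue_by_region", run_query)
cache.get_or_load("revenue_by_region", run_query)
print(f"Query executed {len(query_calls)} time(s)")
```

The TTL sets the trade-off between freshness and load: a shorter value keeps answers closer to the source data, while a longer one shields the underlying systems from repeated identical queries.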

Q: How do we address user concerns about AI assistants replacing human analysts?

A: Position AI assistants as augmenting rather than replacing human capabilities. Demonstrate how AI assistants can handle routine tasks while freeing analysts to focus on higher-value strategic work. Provide comprehensive training that helps analysts develop new skills for working with AI tools. Show concrete examples of how AI assistants enhance rather than threaten job roles.

Conclusion

Enterprise AI assistants represent a significant opportunity for Chief Data Officers to reshape how their organisations interact with data, generate insights, and make decisions. The technology offers the potential to democratise data access while maintaining enterprise-grade security and governance standards, enabling organisations to scale their analytical capabilities without proportional increases in specialised personnel.

Success with enterprise AI assistants requires comprehensive approaches that address technical architecture, governance frameworks, and organisational change management simultaneously. CDOs must balance the need for rapid value delivery with the requirements for enterprise-grade security, scalability, and compliance while building organisational capabilities that support sustained innovation and growth.

The frameworks and strategies outlined in this guide provide practical approaches for implementing enterprise AI assistants that deliver measurable business value while meeting enterprise requirements. Organisations that successfully implement these capabilities will be positioned to achieve sustainable competitive advantages through improved decision-making, enhanced operational efficiency, and accelerated innovation.

The AI assistant landscape continues to evolve rapidly, with new capabilities and technologies emerging regularly that expand the potential applications and value creation opportunities. CDOs who establish solid foundations for AI assistant implementation today will be well-positioned to use these innovations and continue expanding their organisations’ analytical capabilities as the technology matures.

The investment in enterprise AI assistants is substantial, but for organisations committed to data-driven transformation the potential returns can justify the effort. By following the principles and practices outlined in this guide, CDOs can build AI assistant capabilities that not only meet current business needs but also provide the foundation for future innovation and growth in an increasingly data-driven business environment.

References:

[1] Gartner. (2025). “Enterprise AI Assistants: Market Analysis and Implementation Guide.” https://www.gartner.com/en/documents/4015321/enterprise-ai-assistants-market-analysis-implementation