Innovate UK BridgeAI in 2026: eligibility and rejections

What is the BridgeAI programme?

BridgeAI is a UK Research and Innovation (UKRI) programme delivered by Innovate UK, designed to help sectors with lower AI adoption levels apply AI to commercial and operational problems. It was launched as part of UKRI's Technology Missions Fund and sits alongside adjacent Innovate UK programmes rather than replacing them. Delivery partners publicly named by UKRI include the Alan Turing Institute, Digital Catapult, the Hartree Centre, and BSI, each contributing expertise across research, translation, compute, and standards. At the time of writing, the authoritative source for live calls, sector scope, and application guidance is the current BridgeAI call page on the Innovate UK Funding Service.

The programme's practical purpose is translation rather than research. BridgeAI funds UK businesses that already have a commercial case for AI and need support to get a working system into production. It is not the right programme for blue-sky research (look at UKRI responsive-mode grants) or for very early-stage ideation (look at Innovate UK Smart Grants). A 2024 UKRI communication described BridgeAI as targeting sectors including agriculture, construction, creative industries, and transport and logistics, with the list refreshed in subsequent calls. Check the current call page for the in-scope sectors before committing to an application.

Who is eligible?

Eligibility rules for UKRI-managed programmes typically require a UK-registered business, a lead applicant based in the UK, and a project to be carried out primarily in the UK. BridgeAI also typically requires collaboration in some form, either with a delivery partner, an academic institution, or another business. The precise eligibility criteria for each call vary, and the authoritative text is always the competition brief published on the Innovate UK Funding Service.

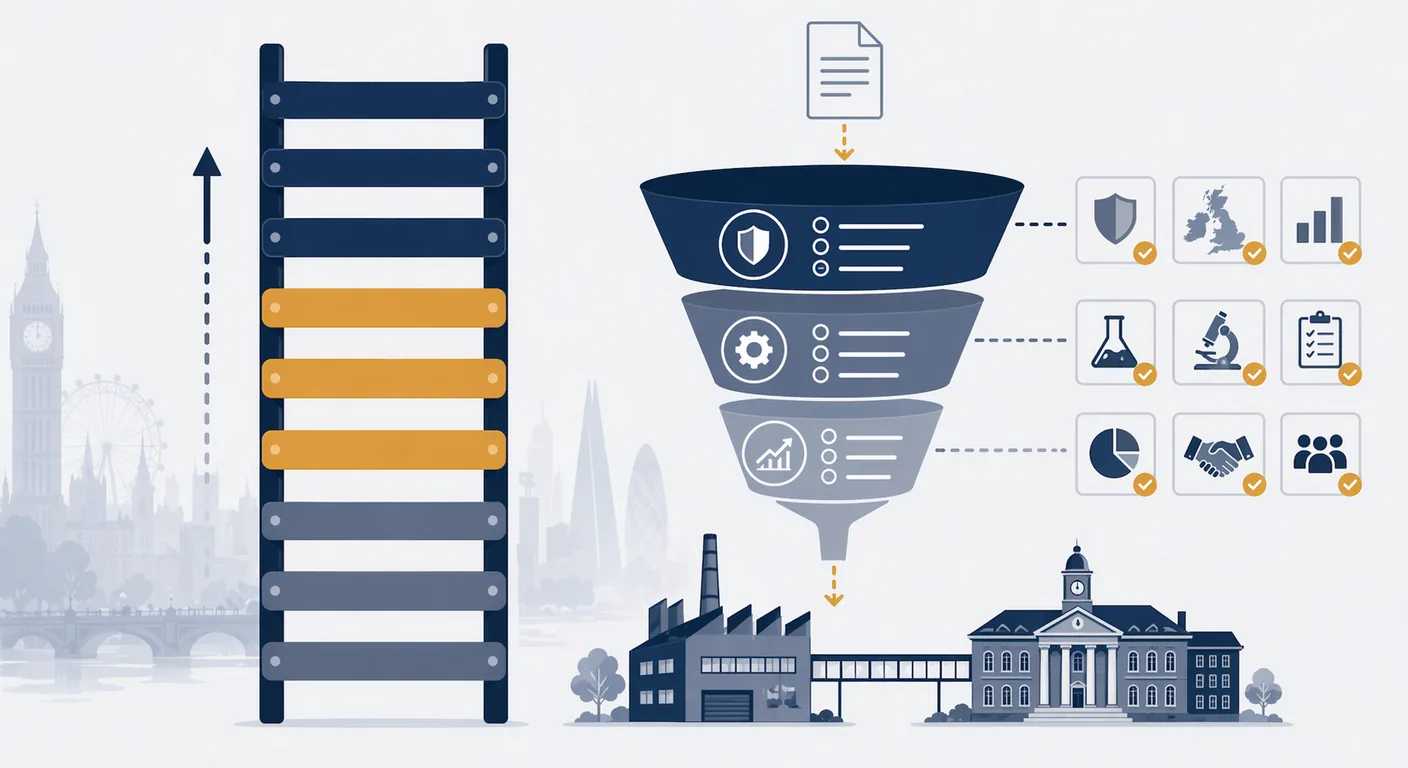

Three points are worth checking carefully before investing time in a bid. First, business size and type: some calls are open to SMEs only, others to large enterprises in lead or partner roles. Size definitions are set in Innovate UK's general guidance for applicants and the competition brief, and they do not necessarily match the Companies Act thresholds, so confirm which definition applies. Second, sector fit: BridgeAI is sector-targeted, and an otherwise strong proposal from a firm outside the named sectors will not be eligible. Third, the applicant's funding history: organisations in financial difficulty, with unresolved state aid or subsidy issues, or already at the UKRI cumulative funding threshold will be filtered out at application triage. Our AI readiness framework is a useful pre-bid checkpoint on the internal capacity side.

TRL expectations: why BridgeAI favours TRL 4 to 6

Technology Readiness Levels (TRL) are the 1 to 9 scale used by UKRI and other funders to describe how close a technology is to deployment. Level 1 is basic principles observed; level 9 is a proven system in production at scale. BridgeAI's translation focus means it is most relevant to proposals in the TRL 4 to 6 band: a component validated in a lab environment (TRL 4), a component validated in a relevant environment (TRL 5), or a prototype demonstrated in a relevant environment (TRL 6).

This matters for framing. A TRL 2 proposal ("we have an idea and some papers") is too early. A TRL 8 proposal ("we have a production system deployed to 200 customers") is too late and will be told to apply for scale-up or export support instead. The sweet spot is a working prototype with a named industry partner, a clear integration target, and a defined outcome that would be commercially useful if achieved. State the TRL band explicitly on the first page of the application; do not bury it in an appendix.

Match-funding patterns and typical award sizes

Match-funding and intervention rates on UKRI-managed grants follow the Subsidy Control framework and differ by organisation size and activity type. For SMEs, industrial research typically attracts up to 70% grant intervention and experimental development up to 45%. Large businesses are usually capped lower. The specific rates for each BridgeAI call are published in the competition brief; the figures above indicate the general Innovate UK pattern and are not a guarantee for any particular call. Check the live call page for the current numbers.
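As a rough illustration of how an intervention rate translates into grant and match funding, the sketch below splits a project's eligible costs at a given rate. The function name `split_costs` and the £200,000 cost figure are hypothetical, and the 70% and 45% rates are the indicative SME figures quoted above, not the rates for any particular call.

```python
def split_costs(eligible_costs: float, intervention_rate: float) -> tuple[float, float]:
    """Return (grant, match_funding) in pounds for a total of eligible costs.

    intervention_rate is the fraction of eligible costs covered by the grant,
    e.g. 0.70 for 70%. The applicant funds the remainder as match funding.
    """
    if eligible_costs < 0:
        raise ValueError("eligible_costs must not be negative")
    if not 0.0 <= intervention_rate <= 1.0:
        raise ValueError("intervention_rate must be between 0 and 1")
    grant = round(eligible_costs * intervention_rate, 2)
    match_funding = round(eligible_costs - grant, 2)
    return grant, match_funding


# Hypothetical project with £200,000 of eligible costs (illustrative only).
for label, rate in [("industrial research", 0.70), ("experimental development", 0.45)]:
    grant, match_funding = split_costs(200_000, rate)
    print(f"{label}: grant £{grant:,.0f}, match funding £{match_funding:,.0f}")
```

On these assumptions, £200,000 of eligible costs at 70% means a £140,000 grant and £60,000 of match funding; at 45% the split is £90,000 and £110,000. The match-funding figure is the one to test against cash flow before committing to a bid.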

Award sizes on BridgeAI calls have ranged widely depending on the strand: some calls have funded shorter feasibility projects in the low-five-figure to low-six-figure range, others have funded longer collaborative projects into the mid-six-figure or low-seven-figure range. Do not assume a figure; read the call.

Decision timelines

Innovate UK competitions typically run on the following cadence: call opens, call closes (usually 6 to 10 weeks later), assessment (4 to 8 weeks), notification of outcome, contracting. In our experience supporting UK SME applicants, submission to contract commonly takes three to five months, which is material for cash-flow planning. Build the expected timeline into the business case from the outset, and do not count on an earlier start date than the grant offer confirms. The Innovate UK Funding Service publishes the indicative timeline for every call on the call page; that is the number to plan against.
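For planning purposes, the cadence above can be turned into an earliest and latest outcome date. This is a minimal sketch using only the generic ranges quoted in this section (6 to 10 weeks open, 4 to 8 weeks of assessment); the function name `outcome_window` and the example opening date are hypothetical, and the dates published on the call page take precedence.

```python
from datetime import date, timedelta


def outcome_window(
    call_opens: date,
    open_weeks: tuple[int, int] = (6, 10),       # generic range, not call-specific
    assessment_weeks: tuple[int, int] = (4, 8),  # generic range, not call-specific
) -> tuple[date, date]:
    """Return (earliest, latest) plausible outcome notification dates."""
    earliest = call_opens + timedelta(weeks=open_weeks[0] + assessment_weeks[0])
    latest = call_opens + timedelta(weeks=open_weeks[1] + assessment_weeks[1])
    return earliest, latest


# Hypothetical call opening on 5 January 2026 (illustrative only).
earliest, latest = outcome_window(date(2026, 1, 5))
print(f"Outcome expected between {earliest.isoformat()} and {latest.isoformat()}")
```

Contracting follows notification, so a cash-flow plan should add further time after the later date before assuming any grant income.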

Five reasons BridgeAI applications get rejected

The reasons innovation grant applications fail are consistent and fixable. These five appear in almost every rejected BridgeAI-style application we review after the fact.

- The commercial case is thin. Assessors are looking for a credible route to a return on the public investment. A proposal that describes the technology in depth but glosses over market size, pricing, route to customers, and competitive positioning reads as a research paper with a funding ask attached. The commercial section deserves the same rigour as the technical section.

- The TRL claim is not evidenced. Saying a system is at TRL 5 does not make it so. Assessors look for specific evidence of validation in a relevant environment: named partner, named data, named outcome measured. A vague claim defaults to a low score.

- The consortium is imbalanced. A lead business with no industrial partner in the target sector, or a consortium dominated by academic partners with no route to market, both trigger scepticism. BridgeAI expects a credible pathway from the project to commercial use, which usually means a named industrial partner with both the problem and the buying authority.

- The ethics, governance, and data protection sections are superficial. For an AI project handling UK data, UK GDPR, Equality Act 2010 considerations, and any sector-specific requirements need to be addressed properly. A single paragraph that says "we will comply with GDPR" is not enough. Our EU AI Act guide for UK SMEs covers the wider regulatory landscape that assessors increasingly expect applicants to reference.

- The budget is not credible. Either over-stated (day rates that do not match the cited partner's published figures, equipment lines that look padded) or under-stated (a 12-month project costed at three developer days per week, implying staff will be stretched across other work). Assessors have calibrated eyes; a budget that reads as guesswork is a red flag across the whole proposal.

Adjacent UK funding routes

BridgeAI is one of several UK funding routes for AI-adjacent projects. The table below summarises when each is usually the right fit. Always verify current scope on the relevant programme page before committing to an application.

| Route | Indicative scope | Typical fit |
|---|---|---|
| BridgeAI | Sector-targeted AI adoption at TRL 4 to 6 | Translation projects in the programme's named sectors |
| Innovate UK Smart Grants | Disruptive, market-led R&D across any sector | Earlier-stage or broader proposals that do not fit a themed call |
| Knowledge Transfer Partnerships (KTPs) | Business + academic partner + graduate associate, 12 to 36 months | Longer-term capability building with university support |
| Made Smarter Programme | SME manufacturers in specific English regions | Manufacturing adoption projects with regional support |
| R&D Tax Credits (HMRC) | Tax relief on qualifying R&D expenditure | Post-hoc support for work already undertaken |

Most UK SMEs considering BridgeAI should also check eligibility for Smart Grants and R&D Tax Credits. The three are complementary rather than mutually exclusive, and a well-designed project usually uses more than one.

Working with BridgeAI delivery partners

The delivery partners named by UKRI for BridgeAI bring materially different things to a proposal. The Alan Turing Institute contributes research expertise and, in some calls, a direct academic-industrial bridge. Digital Catapult contributes applied translation capability and a commercial-leaning network across adopter sectors. The Hartree Centre contributes compute access and AI engineering support for projects that need scale beyond typical cloud budgets. BSI contributes standards and governance expertise, which matters increasingly as UK regulators pay more attention to AI assurance.

Choosing the right partner (or partners) is a function of what the proposal actually needs. A project whose risk is model quality in a regulated setting benefits from a Turing-style partnership. A project whose risk is commercial traction benefits from Digital Catapult's adopter-network reach. A project whose risk is compute or AI engineering capacity benefits from Hartree. Involving a partner solely to improve the look of a consortium is usually visible to assessors and tends to hurt rather than help. Partners should be chosen for the risk they actually reduce.

Preparing evidence that lifts an application's technical score

Assessor scores on Innovate UK competitions typically weight four areas: business need and market, innovation and technical approach, delivery plan and team, and value for money. Specific evidence moves scores in each area more reliably than polished prose does. Three evidence types have disproportionate impact on technical scores in particular.

First, a named dataset and a described access path. An application that states "we will use a dataset representative of the target environment" scores lower than one that states "we have signed a data-sharing agreement with [partner], giving us access to 36 months of anonymised records described in Annex B." Assessors read for concreteness, and a data-access story is a common gap.

Second, a baseline the project will improve against. Without a baseline number, "improvement" has no meaning. A credible bid specifies the current state (accuracy, cycle time, cost per case), the expected end state, and the method of measurement. Assessors do not require the project to be sure it will hit the end state; they do require the target to be specified in a way that can be verified.

Third, a risk register that treats the two or three most plausible failure modes seriously. Generic risk registers ("risk: schedule slippage; mitigation: good project management") score low. A strong register names the specific technical or commercial risks for this project, quantifies their likelihood and impact in the applicant's judgement, and describes the contingency in enough detail that an assessor can form their own view.
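One way to keep a register specific and quantified is to hold each risk as a structured record and rank by a simple score. The sketch below assumes a 1 to 5 scale for likelihood and impact and a likelihood-times-impact score, which is a common convention rather than anything Innovate UK prescribes; the `Risk` class, `top_risks` helper, and all example entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int   # 1 (rare) to 5 (almost certain), in the applicant's judgement
    impact: int       # 1 (minor) to 5 (project-threatening)
    contingency: str  # specific enough for an assessor to form their own view

    def __post_init__(self) -> None:
        for name in ("likelihood", "impact"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def score(self) -> int:
        # Assumed scoring convention: likelihood multiplied by impact.
        return self.likelihood * self.impact


def top_risks(register: list[Risk], n: int = 3) -> list[Risk]:
    """Return the n highest-scoring risks, highest first."""
    return sorted(register, key=lambda risk: risk.score, reverse=True)[:n]


# Hypothetical entries for illustration only.
register = [
    Risk("Partner data delivery slips past month 3", 3, 4,
         "Start on the 12-month historical extract already held under NDA"),
    Risk("Model fails to beat the current baseline", 2, 5,
         "Fall back to a narrower use case agreed with the partner at month 6"),
    Risk("Lead engineer leaves mid-project", 2, 3,
         "Second engineer shadows all design reviews from the start"),
    Risk("Integration with partner system takes longer than planned", 4, 2,
         "Deliver results through a standalone dashboard first"),
]

for risk in top_risks(register):
    print(f"{risk.score:>2}  {risk.description} -> {risk.contingency}")
```

The value is less in the arithmetic than in the discipline: every entry needs a named failure mode and a contingency that is concrete enough to be challenged.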

What a strong BridgeAI bid looks like

Three characteristics are common to the applications that succeed. First, a specific, measurable problem statement owned by an industrial partner, not a general capability aspiration. Second, a credible delivery plan where the technical work plan and the commercial exploitation plan are coherent with each other, and each can stand up to independent scrutiny. Third, a team that demonstrates it can deliver the plan: named people, named track records, and a realistic time allocation that matches the budget. For broader context on framing AI investments for UK business stakeholders, see our guide to AI strategy and the Knowledge Hub strategy section.

Where to start

If you are early in the process, begin by reading the most recent BridgeAI call brief on the Innovate UK Funding Service in full, then map your project against the eligibility criteria and the TRL bands. Speak to a delivery partner (Alan Turing Institute, Digital Catapult, Hartree Centre, or BSI) if the call route supports it, and treat the commercial exploitation plan as equal in weight to the technical plan. For support with framing an AI project for grant-readiness and assessor review, see our AI strategy service, our AI readiness service, and our financial services industry page if the application sits in a regulated sector.

Frequently asked questions

- Who is eligible for BridgeAI funding?

- Eligibility is set by the individual competition brief on the Innovate UK Funding Service, but the usual pattern is: a UK-registered organisation leading the application, the majority of the work carried out in the UK, and a named sector fit for the specific call. Some calls are SME-only; others accept large businesses in specific roles. Always read the live call page; historical eligibility does not carry over automatically.

- What level of match funding should I expect?

- Intervention rates on UKRI-managed grants follow the Subsidy Control framework and depend on organisation size and activity type. For SMEs, industrial research has commonly attracted up to 70% grant intervention, with experimental development up to 45%. The rate for any specific BridgeAI call is published in the competition brief, so treat any general figure as indicative and confirm against the live call.

- What TRL does BridgeAI fund?

- The programme is most relevant at TRL 4 to 6: a component validated in a lab, a component validated in a relevant environment, or a prototype demonstrated in a relevant environment. Earlier-stage work fits UKRI responsive-mode grants or Innovate UK Smart Grants better; later-stage work fits scale-up or export programmes. A strong application evidences the TRL claim with a named partner, named data, and a named outcome measured.

- How long does a BridgeAI decision take?

- The Innovate UK cadence is typically: call opens, runs for 6 to 10 weeks, assessment takes 4 to 8 weeks, and contracting follows. In our experience, submission to contract commonly takes three to five months. The indicative timeline for each call is published on the Innovate UK Funding Service; plan against that, not a generic average, and do not assume a project start earlier than the grant offer confirms.

- What are the most common reasons BridgeAI applications get rejected?

- Five repeated reasons: a thin commercial case treated as an afterthought to the technical section; an unevidenced TRL claim; a consortium missing a credible industrial partner in the target sector; superficial ethics, governance, and data protection sections that do not address UK GDPR, Equality Act, or sector-specific regulation properly; and a budget that does not match the delivery plan. All five are fixable in the pre-submission review.

- What are the alternatives if my project is not a BridgeAI fit?

- Innovate UK Smart Grants for earlier-stage or broader proposals; Knowledge Transfer Partnerships if you have a university partner and a 12 to 36 month programme; Made Smarter for SME manufacturers in specific English regions; and R&D Tax Credits from HMRC for work already underway. These are complementary rather than mutually exclusive, and a well-designed project often uses more than one. A pre-bid gap analysis against all four is a sensible first step.