Enterprise LLM selection for UK businesses in 2026

What "enterprise LLM" means in 2026

An enterprise LLM is a large language model offered under commercial terms designed for business use: a signed data processing agreement, a no-training guarantee by default, role-based administrative controls, audit logging, regional data processing options, and either single sign-on integration or an API surface that supports it. The term is useful because it excludes consumer tiers (free ChatGPT, free Claude.ai, free Gemini) which share a model family with the enterprise offers but lack the contractual and administrative surface UK businesses need. A UK business evaluating an LLM in 2026 is choosing between enterprise offers, not between consumer products.

Four providers have substantive enterprise LLM offerings relevant to most UK businesses: Anthropic (Claude Opus and Sonnet, via direct API, AWS Bedrock, Google Vertex), OpenAI (GPT-series via API, Azure OpenAI for Microsoft customers), Google (Gemini Pro via Vertex AI), and Mistral (Mistral Large via their API and Azure). Other credible options exist, particularly for open-weights self-hosting, but these four cover the majority of enterprise procurement conversations we see in the UK in April 2026.

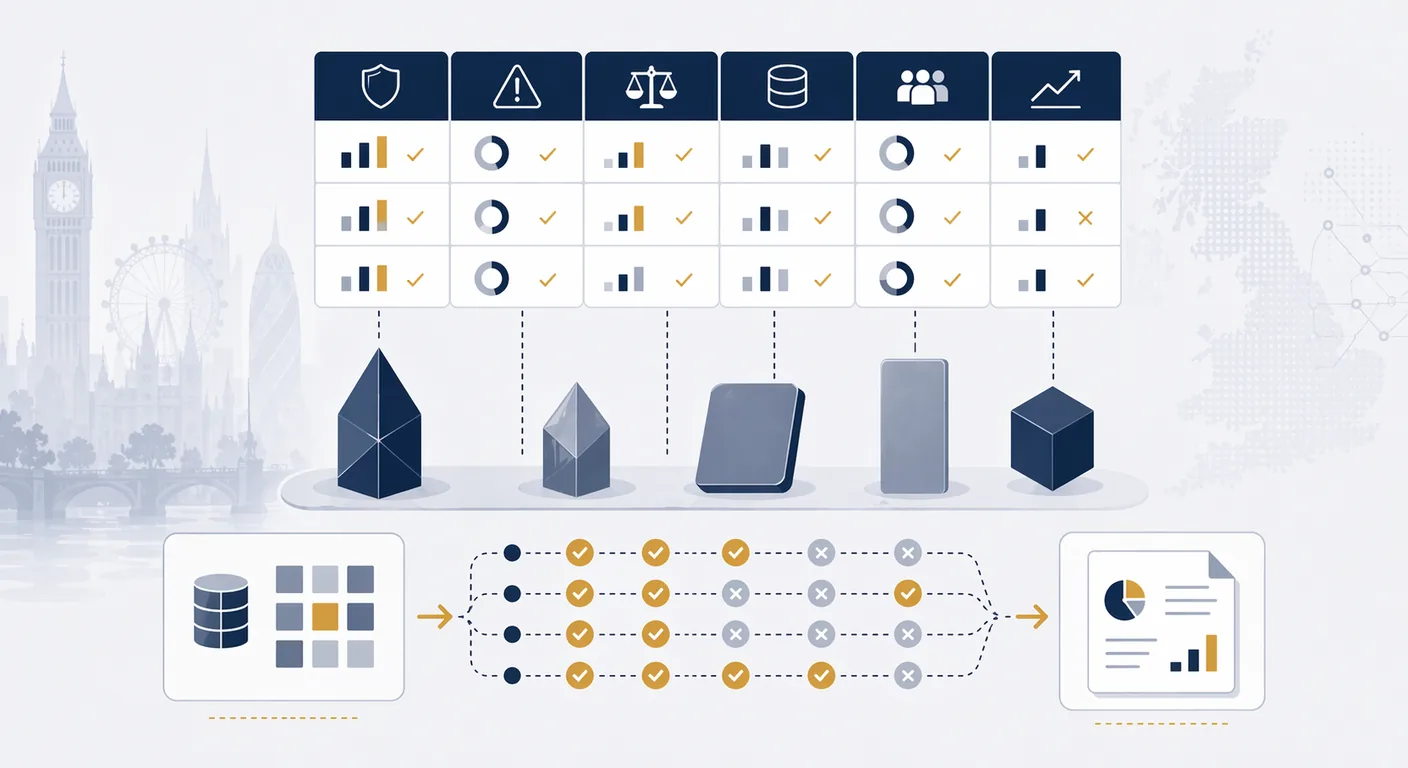

A 12-criterion scoring framework

The framework below is designed to be scored out of 10 per criterion, with weightings set by your own context. It is deliberately criterion-heavy; a three-criterion "price, quality, speed" framework will not surface the questions that actually matter for an enterprise deployment. For a companion view on choosing between the two most common providers specifically, see our Claude vs ChatGPT for UK SMEs comparison.

- Data residency. Where does the model run, where is data processed, and what regions are guaranteed in the contract? UK or EU residency matters for UK GDPR posture; a provider that can only process in the US adds an international transfer burden.

- Single sign-on and SCIM. Does the provider support enterprise SSO (SAML, OIDC) and automated provisioning (SCIM)? Without these, user lifecycle management is manual and account sprawl becomes an audit finding.

- DPA terms. Is the data processing agreement balanced, with clear data controller/processor roles, no-training by default, and clean UK or EU international transfer mechanisms? Are sub-processors disclosed and updated?

- Audit logs. What is logged, for how long, and how is it accessible? Per-user prompt and response logs, API call logs, admin changes, and policy violations are the minimum. Logs should be exportable to your SIEM.

- Safety evaluations. What published evaluations has the provider run on the model, in which languages and domains, and how have the results moved across model generations?

- Regional pricing and billing. Can the provider bill in GBP, issue VAT-compliant invoices, and support UK entity purchasing? Surprisingly, this still trips up enterprise procurement in 2026.

- Latency and throughput. What is the typical time-to-first-token and tokens-per-second in the region you will operate in? Cross-region latency to US endpoints can add 300 to 800 ms per request, which matters for agents and voice use cases.

- Context window. What context length is supported, how is it priced, and how does the model behave as context fills? Advertised and usable context length often diverge; test with realistic long inputs under load.

- Function calling and tool use. Does the model support structured function calling, parallel tool calls, and JSON mode reliably? For agentic uses, this is the single biggest differentiator between models on paper versus in production.

- Fine-tuning options. Does the provider offer fine-tuning, and if so, what is the data handling posture? Some vendors fine-tune in-region with strict isolation; others do not offer it on enterprise plans at all.

- Retention controls. Can retention be set to zero for prompts and completions? What is the default? Zero-retention options are standard on enterprise tiers from the major providers but need to be explicitly configured.

- Independent benchmark scores. How does the model perform on independent benchmarks relevant to your use case: MMLU, GPQA, HumanEval, SWE-bench, and domain-specific suites? Treat vendor-published benchmarks as one input among several, not as the whole picture.
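The scoring described above (a score out of 10 per criterion, weighted by your own context) can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed method: the criterion keys are our own shorthand for the twelve items in the list, and the example weights and scores are placeholders, not recommendations or real vendor results.

```python
from typing import Dict

# Shorthand keys for the twelve criteria listed above (names are illustrative).
CRITERIA = [
    "data_residency", "sso_scim", "dpa_terms", "audit_logs",
    "safety_evaluations", "regional_billing", "latency_throughput",
    "context_window", "tool_use", "fine_tuning", "retention_controls",
    "independent_benchmarks",
]


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Return the weighted average score (0 to 10) across all twelve criteria."""
    missing = [c for c in CRITERIA if c not in scores or c not in weights]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for c in CRITERIA:
        if not 0 <= scores[c] <= 10:
            raise ValueError(f"score for {c} must be between 0 and 10")
        if weights[c] < 0:
            raise ValueError(f"weight for {c} must not be negative")
    total_weight = sum(weights[c] for c in CRITERIA)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


# Placeholder inputs: every criterion weighted 1, with residency and tool use
# weighted more heavily for a hypothetical agentic deployment.
example_weights = {c: 1.0 for c in CRITERIA}
example_weights["data_residency"] = 3.0
example_weights["tool_use"] = 2.0

example_scores = {c: 7.0 for c in CRITERIA}
example_scores["data_residency"] = 9.0

print(round(weighted_score(example_scores, example_weights), 2))
```

Normalising by the total weight keeps the result on the same 0 to 10 scale as the individual scores, so candidates remain comparable even if different teams choose weights that sum to different totals.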

Comparison table across leading enterprise LLMs

The table below summarises the five models most commonly shortlisted by UK businesses in April 2026. Refresh this table quarterly: vendor prices, residency options, and capabilities change on a short cadence, and the details below are a snapshot intended as a starting point for a bake-off, not a final selection.

| Model | UK / EU residency | Strengths | Watch items |
|---|---|---|---|
| Claude Opus 4.6 | EU region via direct API and Bedrock; AWS EU regions | Strong reasoning, reliable tool use, careful safety posture, long context | Higher per-token cost; UK-specific residency via EU region |
| Claude Sonnet 4.6 | EU region via direct API and Bedrock | Strong quality-to-cost ratio; most common workhorse choice for UK SME deployments | Lower ceiling than Opus on hardest reasoning tasks |
| GPT-series (latest, via Azure OpenAI) | Azure UK South and UK West available | Deep ecosystem, mature tooling, strong function calling | Rapid release cadence means behaviour changes frequently; evaluate on the specific snapshot |
| Gemini 2.x Pro (via Vertex AI) | EU regions on Vertex AI | Very long context, native multimodal, competitive pricing | Tool-use reliability improving but historically behind peers; test for your use case |
| Mistral Large (via Mistral API and Azure) | EU regions available | European provenance, competitive cost, strong on European languages | Smaller ecosystem of enterprise integrations; less deep governance documentation than the US providers |

The residency column reflects options as listed in each provider's trust or pricing documentation in April 2026. Anthropic's trust centre publishes the EU data residency option; region-specific pricing and residency for OpenAI and Gemini models are published in the Azure OpenAI and Vertex AI documentation respectively. Always confirm residency against the current documentation before committing, because this is a fast-moving area.

How to run a bake-off

A bake-off is a structured comparison between two or three shortlisted models on your actual tasks. It is the step most UK businesses skip, and the decision quality suffers when they do. A defensible bake-off has five components.

- A held-out task set. 50 to 150 prompts drawn from real work, with agreed correct outputs or acceptability criteria. The set stays fixed across all providers to allow a like-for-like comparison.

- A scoring rubric. Quality dimensions defined in advance: factual correctness, format adherence, tone, handling of edge cases, willingness to say "I don't know." Score blinded where possible: remove provider identifiers from responses before the reviewer scores them.

- Latency measurement under realistic conditions. Time to first token and end-to-end completion time, measured from your infrastructure in your region, at a load resembling your expected production traffic.

- Cost-per-task calculation. Token counts and list price give a per-task cost; sum across the task set for the bake-off total. Include retrieved context in the input token count if your production flow uses retrieval.

- Operational factors. Onboarding time, sandbox availability, billing fit, and the provider's responsiveness during the evaluation. These often outweigh model quality differences for shortlisted candidates that score within a point or two of each other.
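The cost-per-task component above is simple arithmetic on token counts and list prices. The sketch below assumes prices are quoted per million input and output tokens; the `TaskUsage` type and both function names are hypothetical helpers for illustration, and any prices you pass in should come from the provider's current price list.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TaskUsage:
    """Token counts for one task in the held-out set (hypothetical helper type)."""
    input_tokens: int   # prompt plus any retrieved context, if production uses retrieval
    output_tokens: int


def cost_per_task(usage: TaskUsage,
                  input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Cost of one task at list price, in the currency the prices are quoted in."""
    return (usage.input_tokens * input_price_per_million
            + usage.output_tokens * output_price_per_million) / 1_000_000


def bake_off_total(usages: Iterable[TaskUsage],
                   input_price_per_million: float,
                   output_price_per_million: float) -> float:
    """Sum of per-task costs across the whole task set for one provider."""
    return sum(
        cost_per_task(u, input_price_per_million, output_price_per_million)
        for u in usages
    )
```

Running the same list of `TaskUsage` records through `bake_off_total` with each provider's prices gives a like-for-like total, provided the token counts were measured with each provider's own tokenizer.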

A useful bake-off finishes in two to three weeks with a written report shared to the procurement stakeholders. A bake-off that stretches beyond a month typically means the scope was not tight enough or the task set was not defined clearly up front.
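The blinded scoring step in the rubric can be approximated with a small shuffling helper. This is a sketch under assumptions: responses are held in memory as one list of outputs per provider, in the same task order, and the function name and ID format are our own. It only removes provider labels; it does not catch responses in which a model names itself in the text.

```python
import random
from typing import Dict, List, Tuple


def blind_responses(
    responses: Dict[str, List[str]], seed: int = 0
) -> Tuple[List[Tuple[str, int, str]], Dict[str, str]]:
    """Shuffle responses and replace provider names with opaque IDs.

    `responses` maps provider name -> outputs, one per task, in task order.
    Returns (blinded, key): `blinded` is a list of (blind_id, task_index, text)
    for reviewers; `key` maps blind_id -> provider and is kept away from
    reviewers until scoring is complete.
    """
    items = [
        (provider, task_index, text)
        for provider, outputs in responses.items()
        for task_index, text in enumerate(outputs)
    ]
    # A seeded generator makes the shuffle reproducible for the bake-off record.
    random.Random(seed).shuffle(items)

    blinded: List[Tuple[str, int, str]] = []
    key: Dict[str, str] = {}
    for n, (provider, task_index, text) in enumerate(items):
        blind_id = f"R{n:04d}"
        blinded.append((blind_id, task_index, text))
        key[blind_id] = provider
    return blinded, key
```

Reviewers score the `blinded` list against the rubric; the `key` is joined back in only after all scores are recorded.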

When to fine-tune (and when not to)

Fine-tuning an enterprise LLM on proprietary data is right in three situations: when you need a specific output format the base model does not produce reliably, when you need a tone or style the base model cannot prompt into, or when you need lower latency or cost and have demonstrated that a smaller fine-tuned model matches the base model's quality on your task. Fine-tuning is usually wrong for improving factual knowledge; retrieval-augmented generation is cheaper, updateable, and more auditable for that purpose. Our RAG for UK SMEs guide covers the retrieval path in more detail.

Two practical cautions. Fine-tuning data becomes part of the risk surface: the fine-tuning dataset must be handled with the same care as production data, including UK GDPR compliance, access controls, and retention rules. And fine-tuned models often lag the base model's capabilities across the next one or two releases; a model fine-tuned on Sonnet 4.5 does not inherit Sonnet 4.6's improvements. Plan for periodic re-tuning or for a path back to base models if the capability gap grows.

Governance documentation buyers will ask for

Enterprise procurement processes in the UK increasingly ask a consistent set of governance questions before contracting. Assembling the documentation up front shortens the sales cycle materially and avoids the pattern where a chosen model is later blocked by legal or compliance review. Four documents are usually required.

A signed data processing agreement (DPA) with the provider, configured to reflect the controller-processor roles for your intended use. Read it against the provider's standard sub-processor list and the stated data retention defaults; confirm that training on your data is disabled by default and cannot be re-enabled without your consent.

A transfer impact assessment if any component of the pipeline sits outside the UK and outside jurisdictions covered by a UK adequacy decision. This document names the transfer mechanism (the UK International Data Transfer Agreement, or the UK International Data Transfer Addendum to the EU Standard Contractual Clauses), records the transfer risk analysis, and notes any supplementary measures applied.

A model-use policy setting out which staff may use which models for which tasks, what data classifications are permitted for each model, and what audit evidence is kept. The policy should be referenced by staff induction and refreshed when a new model is added to the approved list.

A bake-off record: the held-out task set, the scoring rubric, the results, the operational observations, and the rationale for the final selection. This document is disproportionately valuable at year two when the decision is revisited or when a new model release forces a re-evaluation. Without it, the business typically re-runs the bake-off badly and without the original context.
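Of the four documents, the model-use policy is the one that translates most directly into something machine-checkable. The sketch below shows one way to encode "which staff may use which models for which data classifications" as data with a deny-by-default check. The model names, roles, and classification labels are invented for illustration and should be replaced with your own approved list.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class ModelRule:
    """Who may use a model, and with which data classifications."""
    allowed_roles: FrozenSet[str]
    allowed_classifications: FrozenSet[str]


# Hypothetical approved list; every name here is a placeholder.
POLICY: Dict[str, ModelRule] = {
    "general-model": ModelRule(
        allowed_roles=frozenset({"staff", "analyst"}),
        allowed_classifications=frozenset({"public", "internal"}),
    ),
    "restricted-model": ModelRule(
        allowed_roles=frozenset({"analyst"}),
        allowed_classifications=frozenset({"public", "internal", "confidential"}),
    ),
}


def is_permitted(model: str, role: str, classification: str,
                 policy: Dict[str, ModelRule] = POLICY) -> bool:
    """Return True only if the policy explicitly allows this combination."""
    rule = policy.get(model)
    if rule is None:
        return False  # models not on the approved list are denied by default
    return (role in rule.allowed_roles
            and classification in rule.allowed_classifications)
```

Keeping the policy as data means adding a model to the approved list is a reviewed change to one structure, which lines up with refreshing the written policy at the same time.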

Hybrid model strategies

Most mature UK enterprise AI deployments in 2026 are not single-model. A typical pattern is a "router" that selects between a higher-capability model for hard tasks and a smaller, cheaper model for routine tasks, with a third specialist model for particular use cases. Hybrid strategies can reduce inference cost by 40% to 70% against a single-model baseline without materially changing user-facing quality, when the routing logic is tuned against your actual task mix.

The trade-off is complexity: two or three models means two or three sets of contract terms, two or three audit surfaces, and more prompt-engineering work to keep behaviour consistent. A hybrid strategy is usually the right choice only after a single-model deployment has proven its value and the cost pressure has become material.
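The router pattern and its cost effect can be illustrated with a threshold rule and a blended-cost calculation. This is a simplified sketch: the difficulty score, the threshold, and the per-task costs are all assumptions for illustration, and real routing logic would need to be tuned against your own task mix as described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str             # placeholder model label, not a real product name
    cost_per_task: float   # average cost per task measured in your bake-off


def choose_route(difficulty: float, threshold: float,
                 large: Route, small: Route) -> Route:
    """Send tasks at or above the threshold to the higher-capability model.

    `difficulty` is a hypothetical 0-to-1 score; how it is estimated
    (rules, a classifier, task type) is outside this sketch.
    """
    return large if difficulty >= threshold else small


def blended_saving(share_routine: float, large_cost: float,
                   small_cost: float) -> float:
    """Fractional saving versus sending every task to the large model."""
    if not 0 <= share_routine <= 1:
        raise ValueError("share_routine must be between 0 and 1")
    if large_cost <= 0:
        raise ValueError("large_cost must be positive")
    blended = share_routine * small_cost + (1 - share_routine) * large_cost
    return 1 - blended / large_cost
```

As a worked example with made-up numbers: if 70% of tasks are routine and the smaller model costs one fifth as much per task, `blended_saving(0.7, 1.0, 0.2)` gives a saving of roughly 56%. The saving depends entirely on the routine share and the price ratio, so measure both before relying on a headline figure.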

Where to start

A sensible first step is to populate the 12-criterion framework with your organisation's weightings, shortlist two or three candidates, and run a two-week bake-off on a held-out task set. The bake-off report becomes the foundation for procurement approval and for the governance documentation the legal and compliance teams will ask for later. For structured support with shortlisting, bake-off design, and enterprise rollout, see our AI strategy service, our Enterprise AI service, and our AI implementation service. For sector-specific context, our financial services industry page covers the additional procurement and audit expectations in regulated environments. For wider background, the Knowledge Hub strategy section collects related guides on AI strategy and governance.

Frequently asked questions

- Which LLM is best for UK businesses in 2026?

- There is no single best LLM; the right choice depends on use case, residency requirements, existing stack, and cost tolerance. For most UK SMEs in April 2026, Claude Sonnet is the common workhorse on quality-to-cost. For Microsoft-heavy stacks, Azure OpenAI is the default. For very long context or multimodal work, Gemini Pro on Vertex AI is competitive. The 12-criterion framework and a two-week bake-off produce a defensible choice faster than vendor benchmarks do.

- How do we keep data in the UK when using an enterprise LLM?

- Choose a provider with an EU or UK region option and configure it explicitly. Claude is available in EU regions via the direct API and via AWS Bedrock. OpenAI is available via Azure in UK South and UK West. Gemini is available in EU regions via Vertex AI. A signed data processing agreement with a no-training clause from every vendor in the pipeline is required, along with a documented international transfer mechanism if any component sits outside adequate jurisdictions. Verify current residency on the provider's trust documentation at the time of contracting.

- How much does an enterprise LLM cost in 2026?

- List prices are usually quoted per million input and output tokens and vary widely by model tier. In April 2026, a typical UK business running 20 staff against a Sonnet-class model spends £400 to £1,500 per month on inference; a heavier workload with longer contexts and tool use can reach £3,000 to £8,000 per month. Frontier models such as Opus or the most capable GPT variants are two to five times more expensive per token, which matters for high-volume workloads. A hybrid routing strategy can reduce total inference cost by 40% to 70% when the routing logic is tuned against your actual task mix.

- How do we actually run a bake-off?

- Prepare a held-out task set of 50 to 150 prompts drawn from real work, with agreed correct outputs or acceptability criteria. Score blinded where possible. Measure latency from your infrastructure in your region under realistic load. Calculate cost-per-task at list price. Include operational factors such as onboarding ease, billing fit, and vendor responsiveness. A defensible bake-off finishes in two to three weeks with a written report to procurement stakeholders.

- When should we fine-tune versus use the base model?

- Fine-tune when you need a specific output format the base model does not produce reliably, a tone or style you cannot prompt into, or lower latency and cost that a smaller fine-tuned model can deliver on your task. Do not fine-tune to improve factual knowledge; retrieval-augmented generation is cheaper, updateable, and more auditable. Plan for the fine-tuning dataset to fall under the same governance as production data, and for periodic re-tuning as the base model family evolves.

- Should we standardise on one LLM or use several?

- Most mature UK deployments in 2026 are hybrid. A higher-capability model handles hard tasks, a cheaper model handles routine tasks, and specialist models cover particular use cases. Hybrid deployments often cut total inference cost by 40% to 70% against a single-model baseline. The trade-off is complexity in contracts, audit surface, and prompt engineering. Standardise on one model first, prove the value, and introduce a second model only when cost pressure or a specific capability gap justifies it.