Security Vulnerabilities in AI-Generated Apps — A UK Guide

The evidence base is now substantial

In 2025 and early 2026, enough AI-generated applications have been deployed and independently audited to produce a reliable picture of the security risks. The numbers are not encouraging.

Veracode's 2025 analysis of code generated by more than 150 AI models found that 45% of AI-generated code fails basic security checks. A separate scan of 5,600 vibe-coded applications by Escape.tech identified more than 2,000 vulnerabilities, more than 400 exposed secrets, and 175 instances of exposed personal data. The UK's Institute of Chartered Accountants in England and Wales (ICAEW) published a specific advisory on vibe-coding security risks in February 2026. The category is no longer a theoretical concern.

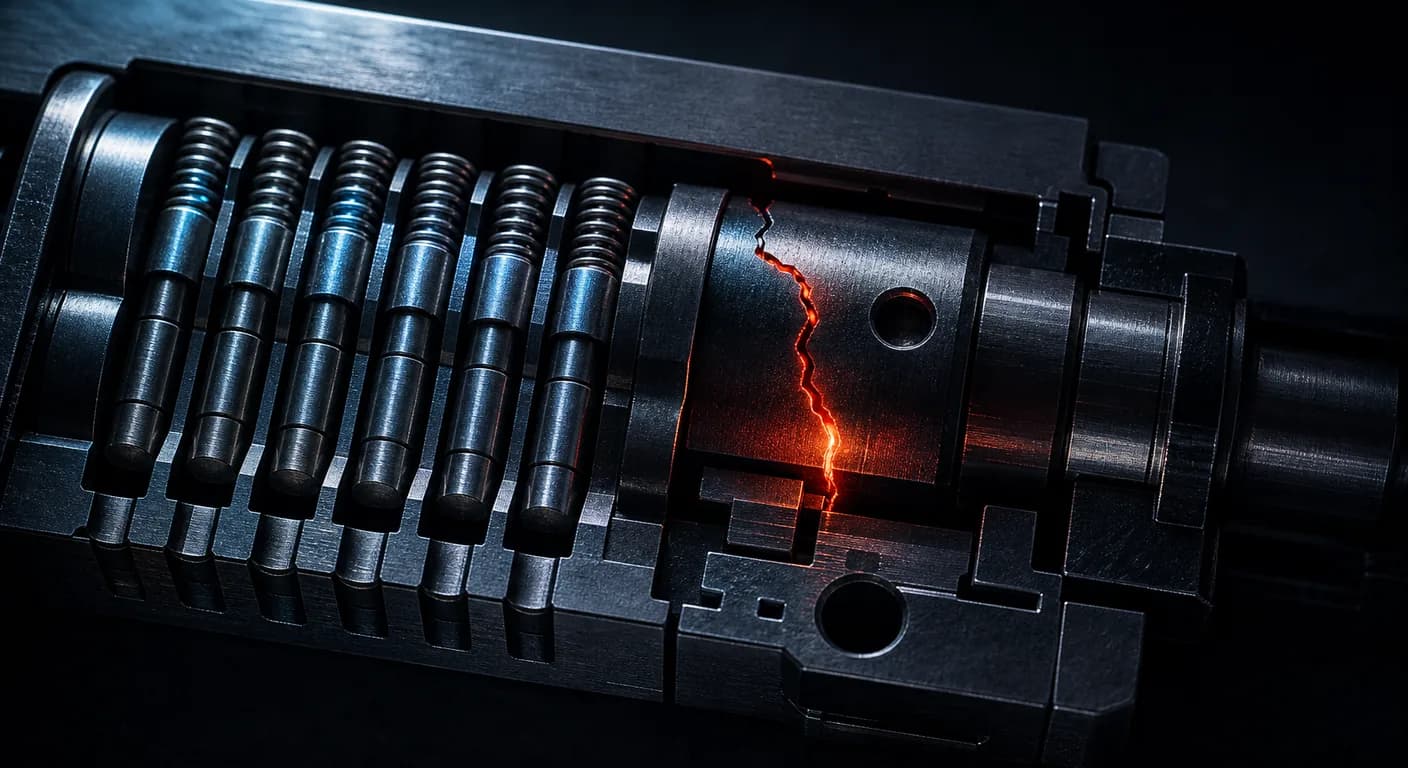

The vulnerability patterns are not random. They cluster around seven predictable areas, each of which arises from the same root cause: AI tools optimise for producing functional code quickly, not for producing secure code. The security gaps they leave are consistent enough that an experienced engineer reviewing an AI-generated codebase for the first time knows which areas to check first.

The seven vulnerability categories

1. Secrets in client-accessible code or public repositories

This is the single most common and immediately damaging vulnerability in AI-generated applications. It manifests in two ways.

The first is secrets in the client-side JavaScript bundle. In a Next.js application, any environment variable prefixed with NEXT_PUBLIC_ is inlined at build time into the bundle that is sent to the browser, where anyone who opens the developer tools can read it. AI tools frequently put API keys behind this prefix, either because they misapply the convention or because they were prompted to "make the API call work" without being asked where the key should live. A Supabase service role key in a NEXT_PUBLIC_ variable gives anyone who visits the application full database access, bypassing every RLS policy.
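A rough pre-build check can catch the most obvious cases. The sketch below is a hypothetical helper, not a Next.js feature: it lists NEXT_PUBLIC_ variables whose names suggest a server-only secret. The name patterns are assumptions, chosen so that keys designed to be public, such as the Supabase anon key, are not flagged.

```typescript
// Hypothetical pre-build check (not part of Next.js): flag NEXT_PUBLIC_
// variables whose names suggest a server-only secret. The patterns are
// heuristics and will miss secrets stored under innocuous names.
const SECRET_NAME_PATTERNS: RegExp[] = [
  /SERVICE_ROLE/i,
  /SECRET/i,
  /PRIVATE/i,
  /PASSWORD/i,
  /DATABASE_URL/i,
];

// Returns the suspicious variable names, sorted for stable output.
function findSuspiciousPublicVars(
  env: Record<string, string | undefined>,
): string[] {
  return Object.keys(env)
    .filter((name) => name.indexOf("NEXT_PUBLIC_") === 0)
    .filter((name) => SECRET_NAME_PATTERNS.some((re) => re.test(name)))
    .sort();
}
```

Called from a prebuild script with `process.env`, a non-empty result is a reason to stop the build. An empty result proves nothing about secrets stored under names that do not match the patterns.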

The second is secrets committed to the repository. Files such as .env, which hold API keys and database credentials, are frequently committed to GitHub by accident, either because .gitignore was not configured to exclude them or because the AI tool added them to the tracked files. GitHub's secret scanning catches some of these, but not all, and a committed secret remains in the git history even after the file is deleted.
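Checking whether any dotenv files are currently tracked is quick to script. The sketch below is a hypothetical helper that takes the text output of `git ls-files` and returns tracked files that look like dotenv files holding real values. The allowlist of template names (.env.example and similar) is an assumption about project conventions.

```typescript
// Hypothetical helper: given the output of `git ls-files`, list tracked
// files that look like dotenv files. Template files are allowed because
// they should contain only placeholder names, never real values.
const ALLOWED_TEMPLATES = [".env.example", ".env.sample", ".env.template"];

function findTrackedEnvFiles(lsFilesOutput: string): string[] {
  return lsFilesOutput
    .split(/\r?\n/)
    .map((line) => line.trim())
    .filter((path) => path.length > 0)
    .filter((path) => {
      // Only the file name matters; env files can live in subdirectories.
      const parts = path.split("/");
      const name = parts[parts.length - 1];
      return /^\.env(\..+)?$/.test(name) && ALLOWED_TEMPLATES.indexOf(name) === -1;
    });
}
```

This only covers the current tree. Because deleting a file does not remove it from history, `git log --all -- .env` is a quick way to see whether it was ever committed.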

Immediate action if you find this: rotate the exposed credential. The secret has been compromised since the moment it was exposed, not since the moment you discovered it, so rotate it before you start fixing the code, not after.

2. Supabase Row Level Security misconfigurations

Supabase's Row Level Security (RLS) is a database-level access control mechanism. When correctly configured, it ensures that a user can only read and write the rows in the database that belong to them. When misconfigured, it produces one of two failure modes: any signed-in user can read and write every row in the affected tables (full data exposure, and if RLS is disabled on a table exposed through Supabase's API, an unauthenticated visitor using the public anon key can do the same), or users cannot access any data at all because the policies are too restrictive.

CVE-2025-48757 documented the first failure mode across more than 170 Lovable-built applications. The root cause was consistent: RLS was either disabled for development speed and never re-enabled, or the policies were written by the AI in a way that appeared correct but included a logic error that made them effectively permissive.
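One common way a policy can read as correct while being permissive is to check that the requester is signed in rather than that they own the row. The sketch below is an illustrative model, not Supabase code and not a reproduction of the specific error behind the CVE: each function stands in for the boolean that a policy's USING clause evaluates for one row, and `uid` plays the role of `auth.uid()`, which is null for unauthenticated requests.

```typescript
// Illustrative model of RLS policy logic. These are NOT Supabase APIs:
// each predicate mimics what a Postgres policy's USING clause evaluates
// for one row, with `uid` standing in for auth.uid().
interface Row {
  id: number;
  user_id: string;
}

type Policy = (uid: string | null, row: Row) => boolean;

// Looks plausible but only checks that *someone* is signed in;
// equivalent to: USING (auth.uid() IS NOT NULL)
const signedInPolicy: Policy = (uid) => uid !== null;

// Ties each row to its owner; equivalent to: USING (auth.uid() = user_id).
// In SQL, NULL = user_id is never true, so anonymous requests see nothing.
const ownerPolicy: Policy = (uid, row) => uid !== null && uid === row.user_id;

// Returns the rows a request would see under a given policy.
function visibleRows(rows: Row[], uid: string | null, policy: Policy): Row[] {
  return rows.filter((row) => policy(uid, row));
}

const rows: Row[] = [
  { id: 1, user_id: "alice" },
  { id: 2, user_id: "bob" },
];
```

Under `signedInPolicy`, Alice's request returns Bob's row as well as her own; under `ownerPolicy`, it returns only row 1. Both policies block anonymous requests, which is why the permissive one can pass a casual test.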

RLS policy review requires a human with knowledge of the intended data access model. The AI cannot reliably self-audit because the correct policy depends on decisions about who should be able to see what, which requires understanding the application's business logic rather than its code.
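A script cannot decide what the correct policy is, but it can flag mechanical red flags so the reviewer knows where to start reading. The sketch below assumes you have exported rows from PostgreSQL's `pg_tables` view (its `rowsecurity` column) and `pg_policies` view (`qual` holds the USING expression, `with_check` the WITH CHECK expression), for example by running a select in the Supabase SQL editor. The list of "trivial" expressions is a hypothetical heuristic.

```typescript
// Row shapes mirror selected columns of PostgreSQL's pg_tables and
// pg_policies system views, exported for the schema you expose (e.g. public).
interface TableInfo {
  tablename: string;
  rowsecurity: boolean;
}

interface PolicyInfo {
  tablename: string;
  policyname: string;
  cmd: string; // SELECT, INSERT, UPDATE, DELETE or ALL
  qual: string | null; // USING expression
  with_check: string | null; // WITH CHECK expression
}

// Hypothetical heuristic: expressions that grant access without tying it to
// the row's owner. Postgres returns deparsed text, so variants (for example
// wrapping auth.uid() in a subselect) will not match and still need reading.
const TRIVIAL_EXPRESSIONS: RegExp[] = [
  /^\(?true\)?$/i,
  /^\(?auth\.uid\(\) IS NOT NULL\)?$/i,
  /^\(?auth\.role\(\) = 'authenticated'::text\)?$/i,
];

function auditRls(tables: TableInfo[], policies: PolicyInfo[]): string[] {
  const findings: string[] = [];
  for (const t of tables) {
    if (!t.rowsecurity) findings.push(`${t.tablename}: RLS disabled`);
  }
  for (const p of policies) {
    const clauses: [string, string | null][] = [
      ["USING", p.qual],
      ["WITH CHECK", p.with_check],
    ];
    for (const [label, expr] of clauses) {
      if (expr === null) continue; // e.g. SELECT policies have no WITH CHECK
      const text = expr.trim();
      if (TRIVIAL_EXPRESSIONS.some((re) => re.test(text))) {
        findings.push(`${p.tablename}.${p.policyname} (${p.cmd}): ${label} ${text}`);
      }
    }
  }
  return findings;
}
```

An empty result does not mean the policies are correct; it only means none of these obvious patterns appear, and the business-logic review described above is still required.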

3. Firebase security rules that allow public read or write access

Firebase's security rules govern who can read from and write to Firestore collections and Firebase Storage. The "test mode" rules that Firebase offers when a new database is created allow public read and write access to every document until an expiry date, a state appropriate for initial development and catastrophically inappropriate for production. AI tools frequently leave open rules like these in place because the application functions correctly with them, and the AI is evaluating functionality, not security posture.

The correct production Firebase security rules are specific to the application's data model and access requirements. A rule that allows any authenticated user to write to any document is nearly as bad as no rules at all for most applications. Rules must be written to enforce the principle of least privilege at the collection and document level.
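The most obvious open patterns can be surfaced with a rough scan before a human reads the rules properly. This is a hypothetical, line-based heuristic, not a parser for the Firebase rules language: conditions split across lines or built from helper functions will be missed, and a clean result proves nothing. It assumes the rules are available as text, for example from a firestore.rules file.

```typescript
// Heuristic, line-based scan of Firebase security rules text. Not a parser:
// it only surfaces obvious open patterns for a human to review.
interface RuleFinding {
  line: number; // 1-based line number in the rules file
  text: string;
  reason: string;
}

const OPEN_PATTERNS: { re: RegExp; reason: string }[] = [
  { re: /allow\s+[^:;]+:\s*if\s+true\s*;/, reason: "unconditional access" },
  { re: /allow\s+[^:;]+;/, reason: "allow statement with no condition" },
  { re: /request\.time\s*<\s*timestamp\.date\(/, reason: "time-limited test-mode rule" },
  {
    re: /allow\s+[^:;]*\bwrite\b[^:;]*:\s*if\s+request\.auth\s*!=\s*null\s*;/,
    reason: "any signed-in user can write",
  },
];

function scanRules(source: string): RuleFinding[] {
  const findings: RuleFinding[] = [];
  source.split(/\r?\n/).forEach((raw, index) => {
    // Drop line comments so commented-out rules are not reported.
    const code = raw.replace(/\/\/.*$/, "").trim();
    const hit = OPEN_PATTERNS.filter((p) => p.re.test(code))[0];
    if (hit) findings.push({ line: index + 1, text: code, reason: hit.reason });
  });
  return findings;
}
```

A rule such as `allow read, write: if request.auth != null && request.auth.uid == userId;` is not flagged, because it ties access to the document's owner rather than to any signed-in user.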

4. Authentication flows with exploitable gaps

Authentication implementation in AI-generated applications tends to handle the happy path (normal login and registration) correctly while leaving edge cases exploitable. Common gaps include: no rate limiting on login endpoints (allowing brute-force attacks), email verification that can be bypassed by directly accessing authenticated routes, JWTs with no expiry or an excessively long one (allowing a stolen token to be used indefinitely or for far longer than necessary), and OAuth state parameter handling that is vulnerable to CSRF attacks.

These gaps are not visible in normal use. They are visible to an attacker who is specifically looking for them, and they are visible in a security-aware code review.
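Token lifetime is one of the easier gaps to check mechanically during a review. The sketch below decodes a JWT's payload and compares `exp` with `iat`; the function names and the maximum lifetime are assumptions for illustration. It deliberately does not verify the signature, so it is an audit aid, never an authorisation check.

```typescript
// Audit helper: reports whether a JWT carries an expiry and how long it lives.
// It only DECODES the payload and does NOT verify the signature, so never use
// it to decide whether a request is authorised.
interface LifetimeReport {
  hasExpiry: boolean;
  lifetimeSeconds: number | null; // exp - iat, when both claims are present
  tooLong: boolean;
}

// JWT segments are base64url-encoded without padding.
function decodeSegment(segment: string): string {
  const base64 = segment.replace(/-/g, "+").replace(/_/g, "/");
  const padded = base64 + "=".repeat((4 - (base64.length % 4)) % 4);
  const bytes = Uint8Array.from(atob(padded), (ch) => ch.charCodeAt(0));
  return new TextDecoder().decode(bytes);
}

function checkTokenLifetime(token: string, maxLifetimeSeconds: number): LifetimeReport {
  const parts = token.split(".");
  if (parts.length !== 3) throw new Error("expected a three-part JWT");
  const payload = JSON.parse(decodeSegment(parts[1])) as { iat?: unknown; exp?: unknown };

  if (typeof payload.exp !== "number") {
    // No exp claim: nothing in the token limits how long a stolen copy works.
    return { hasExpiry: false, lifetimeSeconds: null, tooLong: true };
  }
  const lifetime = typeof payload.iat === "number" ? payload.exp - payload.iat : null;
  return {
    hasExpiry: true,
    lifetimeSeconds: lifetime,
    tooLong: lifetime !== null && lifetime > maxLifetimeSeconds,
  };
}
```

Running this over a freshly issued token from each login flow, with a limit you choose (one hour, say, for access tokens), shows quickly whether any flow issues tokens with no expiry or a multi-week one.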

5. Missing input validation and injection exposure

Input validation is the practice of verifying that data submitted by users conforms to the expected format before processing it. AI-generated code frequently handles expected inputs correctly and does not validate unexpected inputs at all. The consequences range from application crashes (unexpected data breaks the processing logic) to SQL injection or NoSQL injection (crafted inputs that manipulate database queries), cross-site scripting (inputs that inject executable JavaScript into the application's output), and path traversal (inputs that navigate the server's filesystem).
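A minimal sketch of validating unexpected input, assuming a hypothetical "update profile" endpoint that accepts only `displayName` and an optional `website`. It checks types, lengths and URL schemes, and rejects fields it does not expect (such as a client-supplied `role`). A schema validation library would normally do this job; the hand-rolled version just keeps the logic visible.

```typescript
// Allowlist validation for a hypothetical profile-update request body.
interface ProfileUpdate {
  displayName: string;
  website: string | null;
}

type ValidationResult =
  | { ok: true; value: ProfileUpdate }
  | { ok: false; errors: string[] };

const ALLOWED_FIELDS = ["displayName", "website"];

function validateProfileUpdate(input: unknown): ValidationResult {
  if (typeof input !== "object" || input === null || Array.isArray(input)) {
    return { ok: false, errors: ["body must be a JSON object"] };
  }
  const body = input as Record<string, unknown>;
  const errors: string[] = [];

  // Reject unexpected fields (e.g. "role": "admin") instead of passing them on.
  const extra = Object.keys(body).filter((key) => ALLOWED_FIELDS.indexOf(key) === -1);
  if (extra.length > 0) errors.push(`unexpected fields: ${extra.join(", ")}`);

  const displayName = body.displayName;
  if (
    typeof displayName !== "string" ||
    displayName.trim().length === 0 ||
    displayName.length > 50 ||
    /[\u0000-\u001f\u007f]/.test(displayName) // control characters
  ) {
    errors.push("displayName must be 1-50 printable characters");
  }

  const website = body.website ?? null;
  if (website !== null) {
    let protocol = "";
    try {
      protocol = typeof website === "string" ? new URL(website).protocol : "";
    } catch {
      protocol = ""; // not a parseable absolute URL
    }
    // Only http(s): a javascript: URL rendered as a link is an XSS vector.
    if (protocol !== "http:" && protocol !== "https:") {
      errors.push("website must be an http or https URL");
    }
  }

  if (errors.length > 0) return { ok: false, errors };
  return {
    ok: true,
    value: { displayName: displayName as string, website: website as string | null },
  };
}
```

The design choice is an allowlist: the function describes what a valid body looks like and rejects everything else, rather than trying to enumerate malicious inputs.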

In Supabase and Firebase applications, injection attacks at the database query level are less common than in traditional SQL applications because the SDKs provide parameterised queries by default. Input validation at the application layer is still required for business-logic integrity, and any user-supplied data that the application displays back to users must also be escaped on output to prevent XSS.
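Output escaping is the standard XSS defence for user-supplied data that is displayed back. A minimal sketch follows. React already escapes text rendered through JSX, so a helper like this matters mainly where HTML is assembled as strings (email templates, hand-built server responses) or injected with `dangerouslySetInnerHTML`. The function name is ours, and it is not sufficient for URLs, inline scripts or unquoted attribute values.

```typescript
// Escape the five characters that are significant in HTML text content and
// quoted attribute values. Not sufficient for URLs, <script> contents, CSS or
// unquoted attributes, which need context-specific handling.
const HTML_ESCAPES: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

function escapeHtml(text: string): string {
  return text.replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

// Example: a display name submitted as a script payload renders as inert text.
const unsafeName = '<img src=x onerror="alert(1)">';
const safeHtml = `<p>Welcome, ${escapeHtml(unsafeName)}</p>`;
```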

6. No rate limiting on API endpoints

API endpoints in AI-generated applications typically have no rate limiting. A user who discovers the registration endpoint can create thousands of accounts in seconds. A user who discovers an endpoint that triggers a costly action, such as sending email or calling a paid AI API, can invoke it repeatedly and run up the application owner's bill. A competitor can scrape the application's data at will. Rate limiting is a standard production requirement that AI tools consistently omit, because the application works without it during development, when there is only a single user.

For applications deployed behind an edge platform such as Vercel or Cloudflare, rate-limiting rules configured at the edge are a straightforward implementation. For applications on other platforms, middleware-level rate limiting is typically required. The configuration is not complex, but it is not added automatically and is rarely present in AI-generated code.
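A minimal sketch of middleware-level rate limiting, using a fixed-window counter keyed by client (IP address or user id). The class name and limits are hypothetical. In-memory state only holds within one long-running process; serverless platforms run many short-lived instances, so a real deployment would keep the counters in a shared store or rely on edge rules instead.

```typescript
// Fixed-window rate limiter: at most `limit` requests per key per window.
// State is in memory, so it is per process, and entries are never evicted here.
class FixedWindowLimiter {
  private readonly windows = new Map<string, { start: number; count: number }>();
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly now: () => number;

  constructor(limit: number, windowMs: number, now: () => number = Date.now) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.now = now; // injectable clock, useful in tests
  }

  /** True if the request may proceed; false means respond with HTTP 429. */
  allow(key: string): boolean {
    const t = this.now();
    const current = this.windows.get(key);
    if (current === undefined || t - current.start >= this.windowMs) {
      // First request from this key, or the previous window has expired.
      this.windows.set(key, { start: t, count: 1 });
      return true;
    }
    if (current.count < this.limit) {
      current.count += 1;
      return true;
    }
    return false;
  }
}

// Example: 5 login attempts per IP per minute.
const loginLimiter = new FixedWindowLimiter(5, 60_000);
```

A fixed window is the simplest scheme but lets a client send up to twice the limit in a short burst straddling a window boundary; sliding-window or token-bucket limiters smooth that out at the cost of a little more state.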

7. Dependency hallucination and supply chain risk

AI coding tools sometimes suggest npm packages that do not exist, or packages that exist but are not the package the AI described. When a developer installs a package from a hallucinated name, there are three possible outcomes: the installation fails because the package does not exist (the benign case), the installation succeeds because a different package with that name exists and is benign, or the installation succeeds because a package with that name has been deliberately published by an attacker to intercept exactly this kind of hallucination-driven installation. This last case is called "slopsquatting" and is a documented attack vector in AI-assisted development.

The mitigation is auditing the installed dependency list against the intended packages: checking that each dependency is the correct and maintained package, that the version is current, and that the package has not been reported as malicious. The audit is essential before launch and should be repeated whenever the AI tool adds or changes a dependency.
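The comparison step of that audit can be scripted. The sketch assumes you keep a short, hand-written list of the packages you meant to install; anything declared in package.json that is not on that list is a candidate hallucinated or slopsquatted name to look up on the npm registry (for example with `npm view <name>`, which shows the package's maintainers and latest publish). Function and field names other than those of package.json are hypothetical.

```typescript
// Compare declared dependencies with the packages the developer intended.
interface PackageJson {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

interface DependencyAudit {
  unexpected: string[]; // declared but never intended: verify before trusting
  missing: string[]; // intended but not declared
}

function auditDependencies(pkg: PackageJson, intended: string[]): DependencyAudit {
  const declared = Object.keys(pkg.dependencies ?? {})
    .concat(Object.keys(pkg.devDependencies ?? {}))
    .filter((name, i, all) => all.indexOf(name) === i); // de-duplicate
  return {
    unexpected: declared.filter((name) => intended.indexOf(name) === -1).sort(),
    missing: intended.filter((name) => declared.indexOf(name) === -1).sort(),
  };
}
```

The result does not say whether an unexpected package is malicious; it says which names a human needs to check by hand.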

UK-specific regulatory context

The vulnerabilities described above are security problems in any context. In the UK, several of them carry specific regulatory implications.

Secrets in client-accessible code, permissive database access, and missing input validation affecting personal data are all potential violations of UK GDPR Article 25 (data protection by design and by default) and Article 32 (security of processing). A personal data breach caused by any of these vulnerabilities must be reported to the ICO within 72 hours of the organisation becoming aware of it, unless it is unlikely to result in a risk to individuals' rights and freedoms, and must also be communicated to affected individuals where it is likely to result in a high risk to them.

For financial services applications, FCA Operational Resilience rules and the Consumer Duty require that firms have appropriate controls over their technology infrastructure. An AI-built application handling financial data without a security review is an operational risk governance issue as well as a technical one.

For legal applications, SRA guidance on technology use requires that solicitors take appropriate steps to protect client data. An AI-built application handling client communications or matters data without a security review is unlikely to meet that standard.

The correct response before launch

A pre-launch security review of an AI-generated application does not require a full penetration test. It requires a senior engineer to review the codebase with the seven vulnerability categories in mind — spending focused time on the areas where AI tools consistently fail.

The AI App Diagnostic Audit includes a security posture review as a core component. The written report rates each finding by severity and provides specific remediation instructions. For applications in regulated sectors, the report includes the applicable UK regulatory context for each security finding.

The alternative, launching without a review and discovering the vulnerability after a user's data is exposed or a breach notification obligation is triggered, carries remediation and reputational costs that substantially exceed the cost of the review.

Frequently asked questions

- Does UK GDPR apply to my AI-built app?

- If your application collects, stores, or processes personal data about individuals in the UK, UK GDPR applies from the moment the first real user interacts with it. Personal data includes names, email addresses, usage data, IP addresses, and any other information that can identify an individual — not just sensitive data. There is no minimum scale threshold.

- How do I check if my Supabase RLS is misconfigured?

- In the Supabase dashboard, open the Table Editor, select each table and check its RLS configuration. A table with RLS disabled shows a warning. A table with RLS enabled but an overly permissive policy (such as one that evaluates to true for every authenticated user on both reads and writes) shows no such warning, which makes it the more dangerous misconfiguration; finding it requires reading each policy's logic.

- What is the difference between a security audit and a penetration test?

- A security audit is a code review focused on identifying known vulnerability patterns in the codebase. A penetration test is an active attempt to exploit the running application from the outside. Both are valuable; for an AI-generated application preparing to launch, a security-aware code audit is the correct first step. A penetration test is appropriate once the application is live with real users.

- My AI tool told me the code is secure. Should I trust that assessment?

- No. An AI tool assessing its own output for security is not an independent review. The tool does not have a holistic view of the running application in a production environment, does not know the specific data access model the application needs to enforce, and cannot test the application under adversarial conditions. An independent review by a human engineer is the only reliable security assessment for a pre-production application.