The 2026 UK AI compliance checklist for businesses

What is UK AI compliance in 2026?

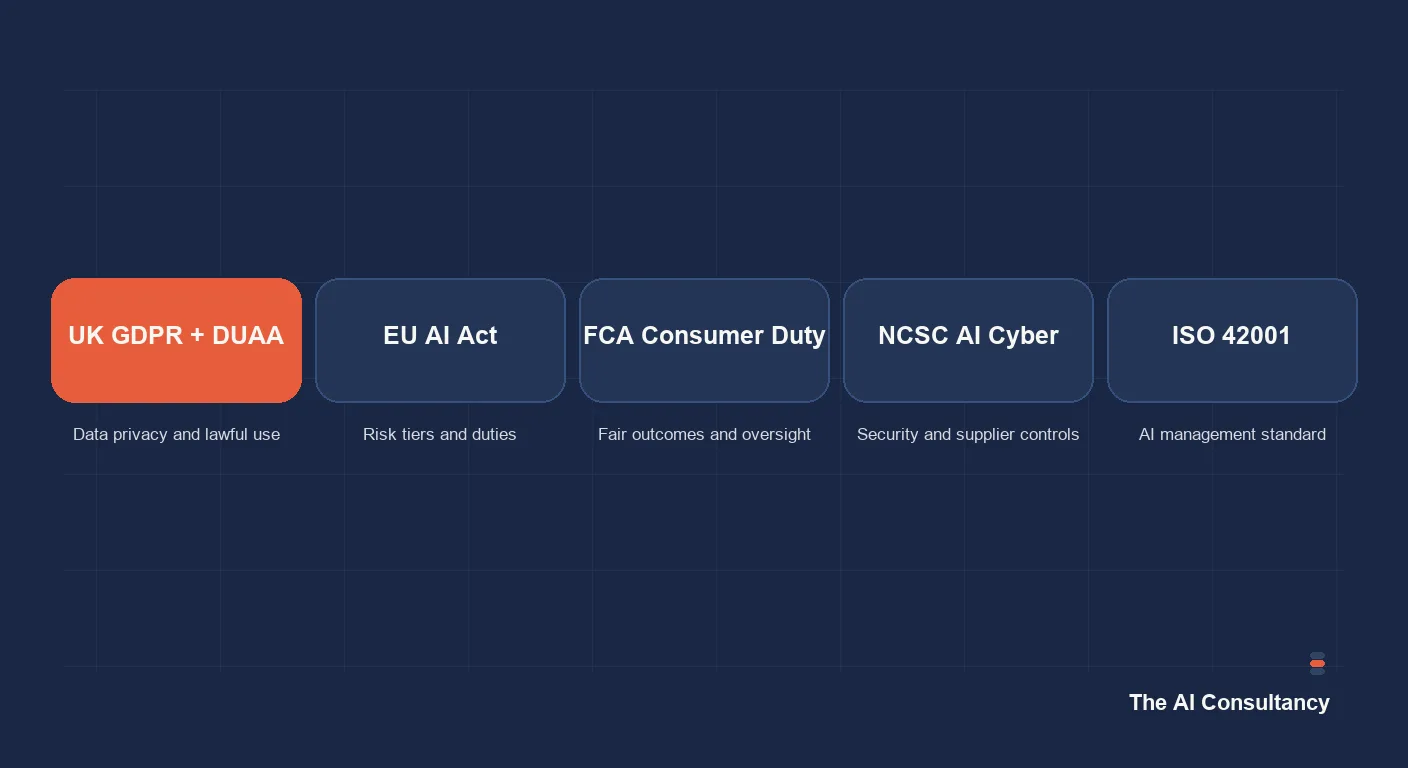

UK AI compliance is the set of regulatory obligations a UK business must meet when deploying AI systems, covering data protection, sector-specific rules, cybersecurity, and cross-border obligations. There is no single "UK AI Act" yet. Instead, UK businesses sit at the intersection of multiple overlapping frameworks: UK GDPR and the Data (Use and Access) Act 2025 (DUAA), the EU AI Act for businesses with EU exposure, sector rules from the FCA, ICO, MHRA, and CQC, the NCSC's April 2026 AI cyber guidance, and the voluntary ISO 42001 management system standard. The fragmentation is the problem. 84% of regulated UK financial services firms now have a named individual accountable for their AI approach. Most non-regulated UK SMEs do not, and that accountability gap is where the compliance risk lives in 2026.

This guide is a structured checklist. Section by section, it maps each regulatory framework to the obligations that apply, gives the documents that should be on file, and identifies the questions a senior leader should be able to answer if a client, auditor, or regulator asks. It is not a substitute for legal advice on any specific deployment, but it is the minimum baseline a UK business should hold before deploying AI in 2026.

The regulatory landscape in one page

The table below summarises which framework applies to whom and what the core obligation is. UK businesses are typically in scope of three or more rows simultaneously.

| Framework | Applies to | Core obligation |

|---|---|---|

| UK GDPR + Data (Use and Access) Act 2025 (DUAA) | All UK businesses processing personal data | Lawful basis, DPIA for high-risk AI, transparency |

| EU AI Act | UK businesses with EU exposure (customers, staff, market) | AI literacy (Article 4), high-risk system obligations, data governance (Article 10) |

| FCA Consumer Duty (PRIN 2A) | FCA-regulated firms | Explainability of AI used in pricing, eligibility, fraud, and customer-facing decisions |

| ICO guidance | All UK businesses processing personal data | Transparency, DPIA, subject rights including Article 22 |

| NCSC AI cyber guidance (April 2026) | All UK businesses deploying AI | Cyber Essentials baseline, supply chain security, prompt injection mitigation |

| ISO 42001 | Voluntary; relevant for enterprise buyers | AI management system certification |

The Data (Use and Access) Act 2025 (DUAA) is the most recent UK addition. It revises parts of UK GDPR and introduces specific provisions on automated decision-making and on data sharing across the public sector. For most UK SMEs, DUAA does not change the underlying obligations on AI but does change the language used in the privacy notice and the lawful basis register.

Checklist 1: UK GDPR and DUAA compliance for AI

The first compliance layer applies to every UK business that processes personal data through any AI tool, regardless of sector. Six items, each of which should produce a written artefact.

- Identify every AI system processing personal data. Maintain a register that lists each tool, the data categories it processes, and the lawful basis. ChatGPT Enterprise, Claude Team, Microsoft 365 Copilot, embedded AI in CRM and accounting platforms, and any custom builds all belong on this register.

- Confirm lawful basis for each. Legitimate interests, consent, contract, or legal obligation under Article 6 UK GDPR. For special category data, an Article 9 condition is also required.

- Complete a DPIA for any high-risk AI processing. The Information Commissioner's Office requires a Data Protection Impact Assessment for large-scale, systematic processing of personal data, special category data, or processing involving automated decisions with legal effects. Many AI use cases meet at least one of these triggers.

- Update privacy notices to disclose AI use where material. Where AI processes personal data in a way that is not obvious to the data subject, transparency requires it to be disclosed. Boilerplate AI clauses are often insufficient; the disclosure should describe what the AI does in plain English.

- Map subject rights to each AI system. Right of access, erasure, rectification, and (importantly for AI) the right to object to automated decisions under Article 22 UK GDPR.

- Document automated decision-making. Article 22 UK GDPR restricts solely automated decisions with legal or similarly significant effects. Document where any AI tool meets that threshold and what human-in-the-loop control applies.

Checklist 2: EU AI Act exposure

UK businesses are often unaware they fall within the EU AI Act's extraterritorial scope. The test is straightforward: do you have EU customers, EU staff, or do you place products on the EU market? If yes, the EU AI Act applies to your AI deployment, even though the UK is not an EU member state. Four obligations are live as of 2026.

- AI literacy training (Article 4). Enforceable since February 2025. Staff using AI systems must have appropriate training, documented per individual.

- Risk classification per AI system. Each deployed AI system should be classified as prohibited, high-risk, limited-risk, or minimal. Most UK SME deployments are limited-risk (chatbots, content generation) or minimal, but the classification should be on file.

- High-risk system obligations. If any system is high-risk under Annex III (employment, credit, education, law enforcement, critical infrastructure), the data governance requirements of Article 10, logging, human oversight, and transparency documentation all apply.

- Conformity assessment where required. High-risk systems require conformity assessment before being placed on the market or put into service.

For most UK SMEs, the practical takeaway is the literacy training obligation under Article 4 and the limited-risk transparency requirement that customer-facing chatbots disclose AI status to users.

Checklist 3: Sector-specific overlays

Each named sector adds an overlay on top of the cross-sector frameworks above. The list below covers the four sectors with the heaviest overlay in 2026.

Financial services (FCA)

Consumer Duty (PRIN 2A) requires explainability of AI used in pricing, eligibility, fraud, and customer-facing decisions. PRA operational resilience rules apply where AI is part of an important business service. The Senior Managers and Certification Regime (SM&CR) requires named accountability for AI decisions. 75% of FCA-regulated firms have adopted some form of AI, and 84% have an individual named accountable for the firm's AI approach.

Healthcare and dental

The Medicines and Healthcare products Regulatory Agency (MHRA) Medical Device Regulations apply where the AI is part of a clinical workflow. The NHS Digital Technology Assessment Criteria (DTAC) is required for any AI tool procured by NHS organisations. ICO healthcare sector guidance applies on top, with particular attention to special category data under Article 9 UK GDPR. Professional body guidance (GMC, GDC, NMC) on AI-assisted clinical decisions adds individual practitioner obligations.

Legal and accountancy

The Solicitors Regulation Authority (SRA) has issued AI disclosure principles where advice is AI-assisted. The Institute of Chartered Accountants in England and Wales (ICAEW) has published guidance on AI in audit. The Bar Standards Board issued AI guidance for barristers in 2025. Client confidentiality rules apply to AI inputs as much as to any other client information. The Financial Reporting Council (FRC) has published guidance on audit quality and AI for audit firms.

Public sector and education

The government's AI Playbook applies to central government bodies and is widely referenced by the wider public sector. Heightened transparency and fairness obligations sit on top of UK GDPR for public-sector deployments. Education-sector deployments touching pupil data add safeguarding considerations.

Checklist 4: AI cybersecurity baseline

The NCSC's April 2026 open letter to UK businesses sets a minimum AI cybersecurity baseline. Six items, each of which should be in place before any new AI deployment.

- Cyber Essentials certification current. Certified within the last 12 months, ideally Cyber Essentials Plus.

- Approved AI tool list in place. A documented list of AI tools sanctioned for use, along with the tier (free vs enterprise) and the data categories permitted.

- Multi-factor authentication on all AI tool accounts. No exceptions, including for executives.

- Phishing training updated for AI-generated threats. Voice cloning, deepfake video, and AI-drafted phishing emails require an updated training module.

- Prompt injection mitigations for customer-facing AI. Any chatbot or agent exposed to user input must treat retrieved or user-supplied content as untrusted and resist injected instructions.

- Out-of-band verification for financial instructions. Verbal callback or second-channel verification for any payment, payee change, or financial instruction received via AI-mediated channels.

For the deeper threat model and mitigation patterns that sit underneath this baseline, see our AI security risks guide for UK businesses.

Documentation that should be on file

Compliance is demonstrated through documentation. The following artefacts are the minimum a UK business should be able to produce on demand. If any of these does not exist, it is the first thing to build.

- Current AI acceptable use policy. See our AI acceptable use policy guide for UK SMEs.

- Approved AI tool list, with tier, data categories permitted, and review date.

- DPIA register for AI systems, with the screening assessment and full DPIAs where required.

- AI literacy training records (staff name, date, training delivered) per EU AI Act Article 4.

- Vendor Data Processing Agreements signed and on file, including the no-training clause for each enterprise tier.

- Risk register entries for each deployed AI system, with named owner.

- Privacy notice that discloses AI processing in plain English where material.

- Incident response plan that anticipates AI-specific incidents (data leakage to a vendor's training corpus, prompt injection, hallucinated outputs causing customer harm).

For the procurement-side controls that produce many of these artefacts, see our AI vendor selection and due diligence checklist.

ISO 42001: when is it worth it?

ISO 42001, published in 2023, is the international standard for AI management systems. It is voluntary and certification is not required by any UK regulator. The case for pursuing it in 2026 is commercial rather than regulatory: enterprise buyers, particularly in financial services, healthcare, and the public sector, increasingly require ISO 42001 certification (or equivalent) from AI vendors and from internal AI deployments that affect their data. For a UK SME selling AI-enabled services to enterprise clients, certification is moving from optional to expected over a 12 to 24 month horizon. For a UK SME using AI internally without selling AI-enabled services, the same outcomes can usually be achieved through ISO 27001 alignment plus the documentation list above, without the cost of formal ISO 42001 certification.

Who should be accountable?

The single most important compliance step is to name a person. The pattern that has worked in regulated UK financial services (84% of firms have a named individual accountable for AI) is generalisable to other sectors. The role does not need to be a new hire. In most UK SMEs, the natural owner is the existing data protection lead, the COO, or the head of IT. What matters is that responsibility is named, documented, and known to the senior team. A "shared" accountability across multiple functions is, in practice, no accountability at all.

Where to start

If your business does not yet have an AI compliance baseline, the priority order is: (1) name an accountable individual; (2) build the AI tool register; (3) write or update the AI acceptable use policy; (4) complete a DPIA screening for each tool on the register; (5) confirm vendor DPAs and no-training clauses are in place; (6) deliver AI literacy training and document it. Six steps, achievable in a quarter for a UK SME of typical size. For a structured assessment, see our AI strategy service. For broader strategy resources, see the AI strategy section of the Knowledge Hub. For the EU AI Act-specific obligations, see our EU AI Act guide for UK SMEs.

Frequently asked questions

- Does my UK SME need to comply with the EU AI Act?

- Yes if you have EU customers, EU staff, or place products on the EU market. The EU AI Act has extraterritorial scope and applies regardless of where the business is headquartered. The most commonly applicable obligations for UK SMEs are the AI literacy training requirement under Article 4 (enforceable since February 2025) and the transparency requirement that customer-facing chatbots disclose AI status to users. High-risk system obligations apply only if you operate a system listed in Annex III (employment, credit, education, law enforcement, critical infrastructure).

- Is a DPIA required for every AI tool we use?

- No. The Information Commissioner's Office requires a Data Protection Impact Assessment for high-risk processing: large-scale or systematic processing of personal data, special category data, or processing involving automated decisions with legal or similarly significant effects. Many AI tools (administrative AI, internal productivity tools that do not process personal data systematically) do not require a DPIA. Run a screening assessment for each AI system on your register and let that drive the DPIA decision.

- What is the difference between UK GDPR and the Data (Use and Access) Act 2025?

- The Data (Use and Access) Act 2025 (DUAA) revises parts of UK GDPR and introduces specific provisions on automated decision-making and on public sector data sharing. UK GDPR remains the foundational regime. For most UK businesses, DUAA does not fundamentally change the underlying compliance position on AI but does change the language used in privacy notices and the lawful basis register. Update your documentation to reflect DUAA where it applies, but do not expect a wholesale rewrite of your data protection programme.

- Do I need ISO 42001 certification?

- Not for any UK regulator. ISO 42001 is voluntary. The commercial case for certification depends on whether you sell AI-enabled services to enterprise clients in regulated sectors who increasingly require it. For a UK SME using AI only internally, ISO 27001 alignment plus the AI compliance documentation list (acceptable use policy, tool register, DPIA register, training records, vendor DPAs) usually achieves the same governance outcomes at lower cost.

- Who in my business should be accountable for AI compliance?

- Name a single accountable individual. In most UK SMEs, the natural owner is the existing data protection lead, the COO, or the head of IT. The role does not need to be a new hire. What matters is that responsibility is named, documented, and known to the senior team. 84% of UK FCA-regulated firms now have a named individual accountable for AI. Generalising that pattern to other sectors is the single most important compliance step a UK SME can take.