How to write an AI acceptable use policy: a template and guide for UK SMEs

Why a written AI acceptable use policy is the first line of defence

Across UK businesses in 2026, employees are using AI tools that their organisation has not vetted, approved, or integrated into its data governance processes. Industry reports describe this pattern as "shadow AI," and it is one of the fastest-growing compliance risks for UK SMEs. It introduces three categories of risk at the same time.

The first is UK GDPR breach. Personal data entered into a consumer-tier AI tool is typically processed by a third party without a documented lawful basis, without a data processing agreement in place, and often without a Data Protection Impact Assessment (DPIA). The ICO does not treat "the employee did it informally" as a defence: the business is still the data controller. The second is intellectual property exposure. Proprietary information included in prompts may be used by the AI vendor to train future models and, once submitted, cannot be retrieved. The third is reputational risk. AI-generated content published without verification may include fabricated facts, inaccurate claims, or non-compliant regulatory statements.

An AI acceptable use policy is the first line of defence against all three. It is not a complex governance document. For most UK SMEs, a two-page policy covering approved tools, data classification, prohibited uses, verification requirements, and training is enough to materially reduce shadow AI risk and to support compliance with the EU AI Act's Article 4 literacy obligation where applicable. This guide sets out the five-step structure.

What an AI acceptable use policy covers

An AI acceptable use policy (AUP) defines which AI tools employees may use, what data they may process with those tools, and what verification steps apply before AI-generated content is used. It is distinct in scope from a broader IT acceptable use policy: an IT AUP covers device use, software installation, and network access, while an AI AUP specifically addresses AI tools, their data handling, and the human review obligations that apply to their outputs.

The policy should be written in two versions. A one-page summary version, accessible to all staff, covers the essential rules: which tools are approved, what data must never be entered, what outputs need human review, and what to do if an employee is unsure. A detailed version, for IT, compliance, and line managers, sets out the vendor assessment criteria, the data classification framework, the approval process for new tools, the acknowledgement and audit process, and the review cycle.

The policy covers both explicit AI tools (ChatGPT, Claude, Copilot, Gemini) and AI features embedded in other platforms (the AI summariser in Zoom or Teams, the AI features in HubSpot, the AI inside Microsoft 365). Employees often assume the AUP only applies to the headline tools, when in fact every AI-assisted feature raises the same data classification and verification questions.

Step 1: Define your approved tool list

UK businesses should maintain a whitelist of vetted AI tools rather than take an open "use anything" approach. A whitelist gives the business control over where data goes, what contractual protections are in place, and what the support channels are when something goes wrong.

The assessment criteria for approving a tool are consistent across vendors. First, data residency: where is data processed and stored? UK or EU residency is preferable for UK businesses, particularly for those with EU exposure. Second, model training policy: does the vendor use customer inputs to train future models? Enterprise and team tiers from major providers typically guarantee no training on customer data; free and consumer tiers often do not. Third, security certifications: ISO 27001, SOC 2 Type II, and Cyber Essentials are useful baselines. Fourth, the availability of a Data Processing Agreement (DPA) that can be signed and kept on file as evidence of a lawful processing relationship.

A practical tier structure looks like this:

- Approved for general business use: Microsoft Copilot (M365 enterprise), Google Gemini for Workspace (enterprise), Claude.ai Team or Enterprise, ChatGPT Enterprise. All offer a DPA, no-training guarantees on the relevant tier, and enterprise-grade security.

- Approved for internal data with caution: tools with a signed DPA, UK or EU data residency confirmed, and a documented no-training guarantee. Suitable for Tier 2 business data (see Step 2) but not for Tier 3 regulated or confidential data without specific authorisation.

- Not approved: consumer-tier tools where inputs may be used for training (free ChatGPT, free Claude.ai, free Gemini), unvetted third-party AI plugins, and any tool that cannot produce a DPA on request.

The approved tool list should be reviewed quarterly. New tools enter the landscape every month, and vendor terms change frequently. A tool that was approved in January may have updated its terms by April; the review cycle catches these changes before they create compliance exposure.
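The four assessment criteria and the three-way tier structure can be captured in a small record per tool. The sketch below is illustrative only: the `VendorAssessment` fields, the `classify_tool` function, and in particular the cut-offs between tiers are assumptions based on one reading of the tier descriptions above, not a definitive rule set.

```python
from dataclasses import dataclass
from enum import Enum


class ToolStatus(Enum):
    GENERAL_USE = "Approved for general business use"
    INTERNAL_WITH_CAUTION = "Approved for internal data with caution"
    NOT_APPROVED = "Not approved"


# Certifications the guide names as useful baselines.
BASELINE_CERTS = frozenset({"ISO 27001", "SOC 2 Type II", "Cyber Essentials"})


@dataclass(frozen=True)
class VendorAssessment:
    """Answers to the four assessment criteria for one tool (hypothetical schema)."""
    name: str
    uk_or_eu_residency: bool         # criterion 1: data residency
    no_training_guarantee: bool      # criterion 2: model training policy
    certifications: frozenset[str]   # criterion 3: security certifications
    dpa_signed: bool                 # criterion 4: DPA signed and on file


def classify_tool(a: VendorAssessment) -> ToolStatus:
    # Without a DPA and a no-training guarantee the tool cannot be approved.
    if not (a.dpa_signed and a.no_training_guarantee):
        return ToolStatus.NOT_APPROVED
    # Assumption: a baseline certification stands in for "enterprise-grade security".
    if a.certifications & BASELINE_CERTS:
        return ToolStatus.GENERAL_USE
    # Assumption: without a certification, confirmed UK/EU residency is required.
    if a.uk_or_eu_residency:
        return ToolStatus.INTERNAL_WITH_CAUTION
    return ToolStatus.NOT_APPROVED


example = VendorAssessment(
    name="ExampleAI Team",  # hypothetical vendor
    uk_or_eu_residency=True,
    no_training_guarantee=True,
    certifications=frozenset({"ISO 27001"}),
    dpa_signed=True,
)
print(classify_tool(example).value)  # Approved for general business use
```

Keeping one such record per tool also gives the quarterly review something concrete to re-check when vendor terms change.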

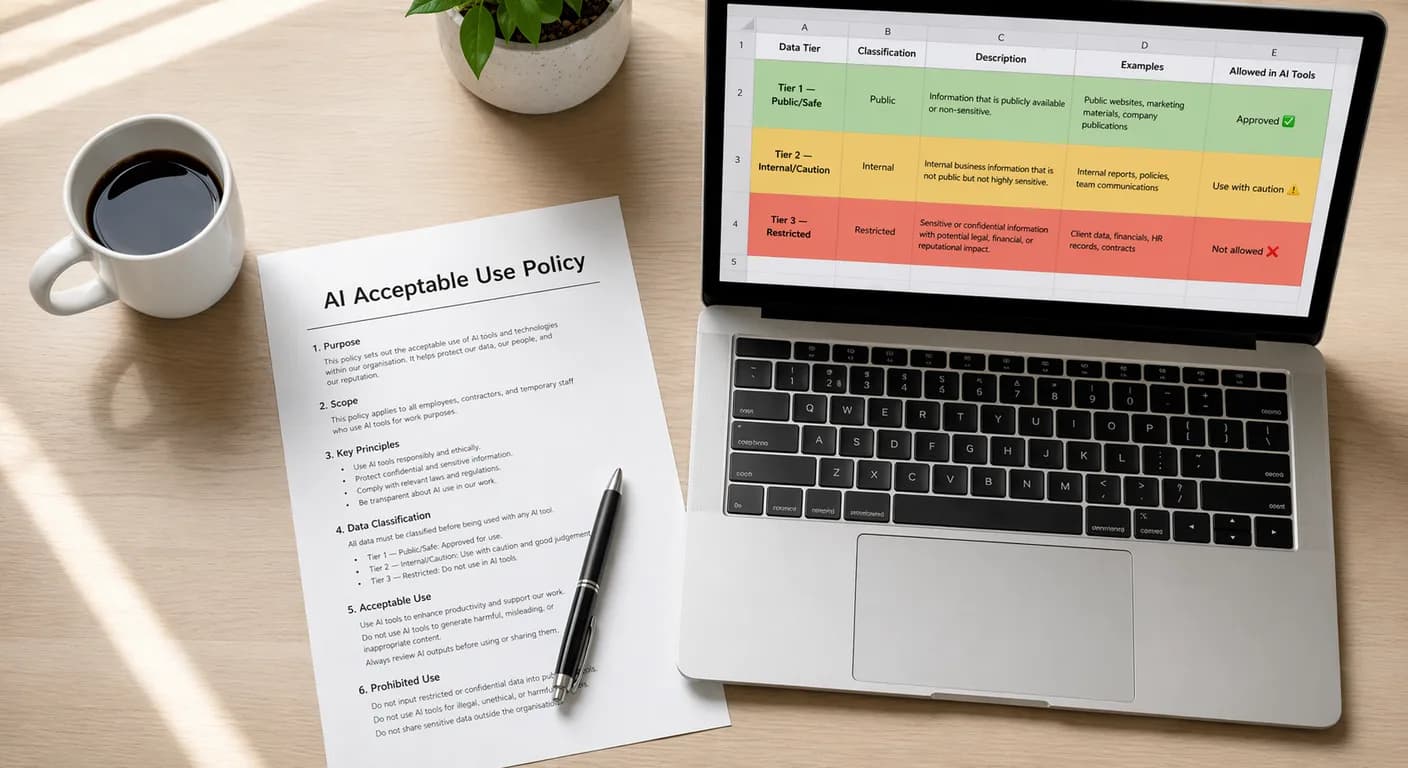

Step 2: Establish data classification rules

A data classification framework tells every employee, in clear language, what data can be entered into which tools. A three-tier system is simple, memorable, and sufficient for most UK SMEs.

Tier 1, Public/Safe. Publicly available information, anonymised data, general knowledge queries, and content with no personal or commercially sensitive information. Can be used with any approved tool; consumer-tier tools are acceptable for Tier 1 content only, and only where the employee has a legitimate business reason. Example: asking ChatGPT to summarise a published regulation, explain a concept, or draft a generic email template.

Tier 2, Internal. Non-confidential operational data, internal communications not subject to NDA, and business information that the organisation does not consider externally sensitive but does not wish to disclose publicly. Use only with enterprise-tier approved tools that have a signed DPA and a no-training guarantee. Example: drafting a project plan using internal meeting notes, summarising an internal report, or generating a slide deck outline.

Tier 3, Restricted/Do Not Use. Personal data as defined under UK GDPR, client-confidential information, financial data subject to audit, health records, and anything covered by a non-disclosure agreement. Do not include in any AI prompt without specific written authorisation and a documented DPIA completed for that use case. Example: customer records, employee files, draft contracts with live counterparty names, patient information.

The classification table should be visible at the point of use, not buried in a policy document. A printable one-page summary on the office wall, a pinned message in the team's Slack or Teams channel, or a short reminder next to each approved tool's link or bookmark all reduce the friction of applying the rules in practice.
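The three tiers reduce to a short decision rule, which is one reason the framework is easy to remember. A minimal sketch follows; the names `DataTier`, `ToolKind`, and `may_enter` are hypothetical, and requiring an enterprise-tier tool for authorised Tier 3 use is an assumption rather than something the tier description states.

```python
from enum import Enum, IntEnum


class DataTier(IntEnum):
    PUBLIC = 1      # Tier 1, Public/Safe
    INTERNAL = 2    # Tier 2, Internal
    RESTRICTED = 3  # Tier 3, Restricted/Do Not Use


class ToolKind(Enum):
    ENTERPRISE = "enterprise tier, signed DPA, no-training guarantee"
    CONSUMER = "consumer or free tier"


def may_enter(
    data: DataTier,
    tool: ToolKind,
    *,
    business_reason: bool = False,
    authorised_with_dpia: bool = False,
) -> bool:
    """Return True if data of this tier may be entered into this kind of tool."""
    if data is DataTier.PUBLIC:
        # Consumer tiers only with a legitimate business reason.
        return tool is ToolKind.ENTERPRISE or business_reason
    if data is DataTier.INTERNAL:
        return tool is ToolKind.ENTERPRISE
    # Tier 3: written authorisation and a DPIA for the specific use case.
    # Assumption: an enterprise-tier tool is also required.
    return tool is ToolKind.ENTERPRISE and authorised_with_dpia


print(may_enter(DataTier.PUBLIC, ToolKind.CONSUMER, business_reason=True))  # True
print(may_enter(DataTier.INTERNAL, ToolKind.CONSUMER))                      # False
print(may_enter(DataTier.RESTRICTED, ToolKind.ENTERPRISE))                  # False
```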

Step 3: Define prohibited uses

Some uses of AI are always prohibited in a UK SME context, regardless of tool or data tier. Spelling these out explicitly in the policy removes ambiguity and gives line managers a clear basis for corrective action if a breach occurs.

A suggested prohibition list:

- Using AI to make final decisions on employment matters (hiring, performance, discipline, dismissal) without human review. Automated employment decisions are regulated under UK GDPR Article 22 and require an appropriate legal basis and human oversight.

- Generating content that will be published under a named individual's byline without that individual reviewing and approving the content before publication.

- Inputting Tier 3 data into any AI tool without specific authorisation from the designated data owner.

- Submitting client-confidential information to AI tools that are not covered by a DPA with that client's consent, or where the client's contract prohibits the use of AI on their data.

- Using AI to generate legal, medical, financial, or regulatory advice intended for a client without qualified professional review before delivery.

- Using AI to impersonate another person in text, audio, or video form without that person's explicit consent.

- Deploying AI-generated code to production without code review by a senior developer.

The National Cyber Security Centre (NCSC) has also issued specific warnings about prompt injection and related AI-enabled cyber attacks. Any use case that exposes an AI tool to untrusted external input (customer documents, inbound email attachments processed by AI, public web content) should include a prompt injection risk assessment before going live.

Step 4: Set output verification requirements

AI output quality varies by task, tool, and prompt. The policy should define which outputs require human review before use and at what level. A verification matrix makes this unambiguous.

| Output type | Minimum verification | Reviewer |
|---|---|---|
| Internal draft documents | Self-review | Author |
| External client communications | Peer or line manager review | Peer or manager |
| Published content (blog, social, web) | Editor review | Content owner or editor |
| Regulatory or legal submissions | Qualified professional sign-off | Solicitor or compliance lead |
| Financial statements and audit material | Accountant sign-off | Finance director or external accountant |
| AI-generated code to production | Code review | Senior developer |
| Recruitment and HR decisions | Human review of each case | Hiring manager or HR lead |

The matrix should be adjusted to reflect the specific business. A UK law firm will add mandatory partner review for any AI-drafted advice letter; a financial services firm will add FCA-regulated sign-off for anything that touches Consumer Duty outcomes; a healthcare provider will add clinical governance review for any AI output used in patient care. The principle is the same: verification effort should scale with the risk and the audience of the output.
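For teams that want the matrix inside an intranet form or a pre-publication checklist, it can be held as a simple lookup. The sketch below copies the rows from the matrix above; the short keys and the `required_review` helper are hypothetical, and rejecting unlisted output types (rather than guessing a review level) is an assumption about how a business might handle gaps.

```python
# Output type -> (minimum verification, reviewer), copied from the matrix above.
# The short keys are hypothetical labels chosen for this sketch.
VERIFICATION_MATRIX = {
    "internal draft": ("Self-review", "Author"),
    "client communication": ("Peer or line manager review", "Peer or manager"),
    "published content": ("Editor review", "Content owner or editor"),
    "regulatory or legal submission": (
        "Qualified professional sign-off",
        "Solicitor or compliance lead",
    ),
    "financial statement": (
        "Accountant sign-off",
        "Finance director or external accountant",
    ),
    "production code": ("Code review", "Senior developer"),
    "hr decision": ("Human review of each case", "Hiring manager or HR lead"),
}


def required_review(output_type: str) -> tuple[str, str]:
    """Look up the minimum verification and reviewer for an output type."""
    key = output_type.strip().lower()
    try:
        return VERIFICATION_MATRIX[key]
    except KeyError:
        # Assumption: unlisted types are escalated instead of defaulting to a level.
        raise ValueError(
            f"No verification rule for {output_type!r}; ask the policy owner."
        ) from None


verification, reviewer = required_review("Published content")
print(f"{verification} by {reviewer}")  # Editor review by Content owner or editor
```

A sector-specific business would extend the dictionary with its own rows, such as partner review for advice letters.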

Step 5: Training, acknowledgement, and audit

A policy that no one reads is not a policy. It is a document that exists in a folder. The final step is making the policy operational: training staff on it, capturing acknowledgement that they have read and understood it, and auditing compliance periodically.

Training should be short and practical. A single one-hour session covering the approved tool list, the data classification framework, the prohibited uses, and the verification matrix is enough for most staff. New joiners should receive the same training as part of induction. The training should include two or three real worked examples of common situations ("you want to summarise a draft contract," "you want to write a LinkedIn post about a client win," "a customer asks a question you want to research using ChatGPT") so that the rules are anchored in the actual decisions employees face.

Acknowledgement is a signed record that each employee has read and understood the policy. An e-signature via a form is sufficient. Annual re-acknowledgement captures policy changes and reminds staff of the rules. Article 4 of the EU AI Act requires organisations to take measures to ensure a sufficient level of AI literacy among their staff rather than prescribing a particular record, so these acknowledgements are best treated as evidence of compliance. The obligation has applied since 2 February 2025 to UK businesses in scope.

Audit does not have to be elaborate. A shared spreadsheet logging which AI tools each team uses, reviewed quarterly, is sufficient for most SMEs to demonstrate that the business has visibility of its AI usage. When a new tool appears on the log that has not been through the approval process, the review catches it. The log also forms part of the evidence trail if a client, auditor, or regulator asks how the business governs its AI usage.
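The quarterly check described above amounts to comparing the usage log with the approved tool list. A minimal sketch, assuming the spreadsheet is exported as CSV with `team` and `tool` columns (the column names, the sample rows, and the `unapproved_entries` helper are all hypothetical):

```python
import csv
import io

# Hypothetical approved list; in practice this comes from Step 1.
APPROVED_TOOLS = {"Microsoft Copilot", "ChatGPT Enterprise"}

# Hypothetical export of the shared usage log.
SAMPLE_LOG = """team,tool
Sales,ChatGPT Enterprise
Marketing,FreeSummariser
Finance,microsoft copilot
"""


def unapproved_entries(log_csv: str, approved: set[str]) -> list[tuple[str, str]]:
    """Return (team, tool) pairs whose tool is not on the approved list."""
    # Compare case-insensitively so "microsoft copilot" matches the list entry.
    approved_normalised = {name.casefold() for name in approved}
    reader = csv.DictReader(io.StringIO(log_csv))
    return [
        (row["team"].strip(), row["tool"].strip())
        for row in reader
        if row["tool"].strip().casefold() not in approved_normalised
    ]


print(unapproved_entries(SAMPLE_LOG, APPROVED_TOOLS))
# [('Marketing', 'FreeSummariser')]
```

Anything the function returns is a candidate for the approval process in Step 1, not automatically a breach.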

Further reading and services

An acceptable use policy works best when paired with a structured AI literacy programme that equips staff to apply it well. For the framework that maps staff capabilities to the right level of AI responsibility, see our 5 levels of AI literacy self-assessment. For strategic AI advice covering governance, compliance, and tooling decisions, see our AI strategy consulting. For more on building AI-ready teams, change management, and governance, see the AI training and capability building section of the Knowledge Hub.

Frequently asked questions

- Does my UK SME legally need an AI acceptable use policy?

- Not as a standalone UK-law requirement, but in practice yes. The EU AI Act's Article 4 literacy obligation, which has applied since 2 February 2025, requires providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among their staff, and it reaches UK businesses with EU exposure. Training and acknowledgement records are the practical way to evidence those measures. UK GDPR Articles 5 and 32 require appropriate organisational measures to protect personal data, which includes knowing what AI tools handle that data. An AUP is the practical document that supports both, and it is often the first item an auditor or enterprise customer will ask to see.

- What is "shadow AI" and why is it a compliance risk?

- Shadow AI is the use of AI tools inside a business without the organisation's knowledge, approval, or oversight. It typically happens when an employee signs up for a consumer-tier AI tool to help with their work. The compliance risk is that the business becomes responsible as data controller for any personal data entered into that tool, without a DPA, without a lawful basis documented, and without a DPIA. Shadow AI is also a confidentiality risk: proprietary or client-confidential material may be used by the AI vendor to train future models.

- Can employees use the free version of ChatGPT for work tasks?

- Only for Tier 1 (Public/Safe) tasks where no personal data, no client-confidential material, and no commercially sensitive information is included in the prompt. Free-tier tools typically do not offer a DPA, do not guarantee no training on inputs, and do not meet the data processing standards UK GDPR requires for internal or restricted data. For any work involving Tier 2 or Tier 3 data, the business should use an enterprise-tier tool with a signed DPA.

- How often should an AI acceptable use policy be reviewed?

- Quarterly for the first year, then at least annually once the policy is stable. Quarterly review catches rapid changes in the AI tool landscape: new tools, updated vendor terms, new features, and new regulatory guidance. After the first year, annual review is typically sufficient, but any significant change (a new high-risk use case, new regulator guidance, or a near-miss incident) should trigger an immediate review.

- What happens if an employee breaches an AI acceptable use policy?

- The response depends on the nature and severity of the breach. Minor breaches, such as using a non-approved tool for a Tier 1 task, should be addressed through reminder and retraining. Serious breaches involving Tier 3 data, deliberate circumvention of the policy, or failure to declare a breach after discovery should follow the business's standard disciplinary process. Any breach involving personal data may also trigger UK GDPR breach notification obligations under Article 33, and the business should have a process for assessing and reporting such breaches within the 72-hour window.
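The 72-hour window in the last answer runs from the moment the business becomes aware of a personal data breach, so it helps to record that timestamp and compute the deadline from it. A minimal sketch, with hypothetical function names and an example timestamp chosen purely for illustration:

```python
from datetime import datetime, timedelta, timezone

NOTIFICATION_WINDOW = timedelta(hours=72)  # UK GDPR Article 33 window


def notification_deadline(aware_at: datetime) -> datetime:
    """Latest time to notify, counted from when the business became aware."""
    if aware_at.tzinfo is None:
        raise ValueError("Use a timezone-aware timestamp to avoid ambiguity.")
    return aware_at + NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline (negative once it has passed)."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


aware = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)  # illustrative only
print(notification_deadline(aware).isoformat())  # 2026-03-05T09:00:00+00:00
print(hours_remaining(aware, datetime(2026, 3, 3, 9, 0, tzinfo=timezone.utc)))  # 48.0
```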