The Vibe Coding Hangover — What UK Founders Are Discovering in 2026

The pattern is consistent enough to have a name

The sequence has become recognisable enough across the AI-builder community that founders describe it without prompting, in the same terms, in forum posts, in Indie Hackers threads, and in conversations with engineers brought in to assess the damage.

It goes like this.

Week one: you build something with Bolt.new, Lovable, or Replit that exceeds everything you thought was possible without engineering skills. The speed is extraordinary. The prototype demonstrates the entire product concept, handles real data, and shows a polished interface. You share it with potential customers. The response is positive.

Week two or three: you attempt to deploy the application to a real domain for real users, or to show it to an investor, or to give it to a client. Something breaks. The AI suggests a fix. The fix breaks something else.

Week four: you are still trying to fix the same category of error. The codebase now has patches on patches. You have spent as much on AI tool subscriptions and API credits as you would have spent hiring a junior developer for a week. You do not know if what you have built is fixable.

The Indie Hackers community has a specific phrase for the state at week four: the credit limit wall. Not the literal credit limit of the AI tool — though that is sometimes reached too — but the point at which further prompting produces negative returns, where each session makes the net situation worse rather than better.

Why the prototype always works

The prototype works because the tool's environment is set up to make it work.

Bolt.new, Lovable, and Replit each maintain a controlled environment in which your application runs. Environment variables are pre-configured. Domain settings match the tool's own domain. CORS is permissive. Database access is either open or managed by the tool. Package resolution is handled by the tool's own runtime. The application succeeds in this environment because the environment has been designed to produce that success.

When you move the application out of that environment — to a custom domain, to Vercel, to a real production database with real security requirements — the scaffolding stays behind. The application now has to stand on its own. In many cases, it cannot, because the scaffolding was load-bearing and no one knew.
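One way to make that hidden scaffolding visible is a preflight check run before the first deployment outside the tool. The following is a minimal Python sketch under stated assumptions, not a definitive implementation: the variable names (`DATABASE_URL`, `API_SECRET_KEY`, `ALLOWED_ORIGINS`, `PUBLIC_SITE_URL`) and the function `check_production_config` are hypothetical and should be replaced with whatever your application actually reads.

```python
from urllib.parse import urlparse

# Hypothetical setting names: substitute the ones your application actually uses.
REQUIRED_VARS = ("DATABASE_URL", "API_SECRET_KEY", "ALLOWED_ORIGINS", "PUBLIC_SITE_URL")


def check_production_config(env):
    """Return a list of human-readable problems found in `env` (a dict of settings)."""
    problems = []

    # 1. Every value the builder tool used to supply must now be set explicitly.
    for name in REQUIRED_VARS:
        if not env.get(name, "").strip():
            problems.append(f"{name} is missing or empty")

    # 2. A wildcard CORS origin is tolerable in a sandbox but not in production.
    origins = [o.strip() for o in env.get("ALLOWED_ORIGINS", "").split(",") if o.strip()]
    if "*" in origins:
        problems.append("ALLOWED_ORIGINS contains '*' (permissive CORS)")

    # 3. The site URL should be a real HTTPS domain, not a local address.
    site = env.get("PUBLIC_SITE_URL", "").strip()
    if site:
        parsed = urlparse(site)
        if parsed.scheme != "https":
            problems.append("PUBLIC_SITE_URL does not use https")
        if parsed.hostname in ("localhost", "127.0.0.1"):
            problems.append("PUBLIC_SITE_URL points at a local address")

    return problems
```

Calling `check_production_config(dict(os.environ))` in a deployment script and refusing to continue when the list is non-empty would catch the most obvious gaps. It will not catch everything the tool's environment was quietly providing, so treat an empty list as a starting point rather than a clean bill of health.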

This is not a criticism of the tools. It is a description of their design priority. They are optimised for rapid prototype delivery. The production step requires different expertise from the prototype step, and neither the tool nor the AI inside it has been asked to provide it.

The circular fix loop

The most damaging phase of the vibe coding hangover is the circular fix loop — the period in which a founder continues to prompt the AI to fix the production issue rather than bringing in a human engineer.

The loop has a specific dynamic. The AI fixes the visible symptom. The fix introduces a regression in a different part of the codebase. The founder prompts the AI to fix the regression. A new regression appears. The cycle continues. The codebase accumulates patches. The AI has less and less reliable context for the full codebase as it grows and branches. Each session begins with an AI that does not remember the previous session and has to re-read a codebase that has been modified by an AI that did not have the full picture.

This is not a failure of the AI model's capability. It is a structural limitation of the context-window approach to codebase modification. The AI can only reason about the code it can read in the current session. A complex, iteratively modified codebase eventually exceeds what a single session can hold coherently. What the circular fix loop lacks is human architectural oversight: someone who can hold the full system in their head, see the interactions between components, and make decisions about structure.
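A rough size check can indicate when a codebase is approaching that limit. The sketch below is illustrative only and rests on loud assumptions: it uses a crude four-characters-per-token rule of thumb rather than a real tokenizer, the file extensions and skipped directories are guesses at a typical project layout, and the context window size is a parameter you must supply for your own tool. The function names are hypothetical.

```python
import os

CHARS_PER_TOKEN = 4  # Assumption: crude rule of thumb, not a real tokenizer.
SOURCE_EXTENSIONS = (".ts", ".tsx", ".js", ".jsx", ".py", ".sql", ".css", ".html")
SKIP_DIRS = {"node_modules", ".git", "dist", "build"}


def estimate_tokens(text):
    """Very rough token estimate for a piece of source text."""
    return len(text) // CHARS_PER_TOKEN


def estimate_codebase_tokens(root):
    """Sum the rough token estimates of every source file under `root`."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune dependency and build directories in place so os.walk skips them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for filename in filenames:
            if filename.endswith(SOURCE_EXTENSIONS):
                path = os.path.join(dirpath, filename)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += estimate_tokens(handle.read())
    return total


def fits_in_one_session(codebase_tokens, context_window_tokens, reserve_fraction=0.5):
    """True if the code fits while leaving `reserve_fraction` of the window
    free for the conversation itself (prompts, error logs, replies)."""
    usable = context_window_tokens * (1 - reserve_fraction)
    return codebase_tokens <= usable
```

Fitting in the window is a necessary condition for the AI to see the whole system, not a guarantee that it will reason about it coherently. A result of `False` is simply an early warning that the session can no longer read everything it is being asked to modify.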

What UK founders are discovering at month three

In 2025 and 2026, enough UK founders have gone through the full cycle — prototype, hangover, assessment, remediation — to describe what they found at month three with some precision.

The most common discoveries, roughly in order of frequency:

- The security exposure was real and was already being exploited. Permissive Supabase RLS configurations, exposed API keys, and authentication gaps are not theoretical risks. In several documented cases, founders discovered during a security audit that the exposed data had already been accessed: users' data had been reachable by any authenticated user for weeks before the issue was found.

- The codebase had regressions in components that appeared to be working. The circular fix loop introduces regressions throughout the codebase, not just in the components being actively fixed. A security audit of a month-three codebase often reveals failures in components the founder had considered stable — features that worked two weeks ago and have since been broken by a fix elsewhere.

- The database schema did not match the application's actual data requirements. Tables created iteratively through AI prompting tend to accumulate fields that are no longer used, lack fields that are needed, and have relationships that are implied by the application logic but not enforced at the database level. This is a refactoring problem rather than a security problem, but it is a significant technical debt that compounds every data migration.

- The UK GDPR position had not been considered at any point in the build. Privacy policy, cookie consent, lawful basis documentation, and data subject rights — standard requirements for any application handling personal data in the UK — are not generated by AI coding tools. Many founders discovered they had been processing user data without a lawful basis since the first user signed up.

- The fix would have been cheaper three weeks earlier. The circular fix loop compounds cost. Each week of additional AI-prompted fixing increases the technical debt in the codebase, increases the engineering time required for the subsequent assessment, and increases the likelihood that the application requires a refactor rather than a fix. Founders consistently report that the cost of the eventual remediation would have been lower, and the stress significantly lower, if the assessment had happened at week two rather than week eight.
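The first discovery in the list above, exposed keys and permissive RLS policies, is the one most amenable to a quick automated first pass. The sketch below is a minimal illustration, not an audit: the two patterns are assumptions about what the obvious cases look like (a long quoted string assigned to a name containing `api_key`, `secret`, `token`, or `password`, and a Postgres policy whose condition is `USING (true)`), and the function `scan_text` is hypothetical. It will miss anything less obvious and may flag harmless lines.

```python
import re

# Assumed patterns for the most obvious cases only.
PATTERNS = {
    "possible hardcoded secret": re.compile(
        r"""(?i)(api[_-]?key|secret|token|password)\w*\s*[:=]\s*["'][^"']{16,}["']"""
    ),
    "RLS policy that allows every row": re.compile(r"(?i)\busing\s*\(\s*true\s*\)"),
}


def scan_text(text):
    """Return (line_number, label) pairs for lines matching a known-risky pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings
```

Running `scan_text` over each source file and SQL migration gives a short list of lines worth a human look. An empty result says only that the crudest mistakes are absent; it says nothing about whether the policies match the intended data model.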

The most productive response at any stage

If you are at week one and the prototype works, the productive response is to get an engineering assessment before attempting deployment. Not an extended prompting session, not self-directed debugging — a structured review of what the tool has produced and what it will require to be production-safe. The £495 Diagnostic Audit exists for exactly this moment. It is the correct step between "prototype works" and "launch."

If you are at week four with a circular fix loop, the productive response is to stop prompting. Every additional prompting session at this stage increases the technical debt without a proportionate reduction in the actual problems. The circular fix loop is the signal that the remaining issues are beyond the tool's ability to resolve without human engineering oversight. The diagnostic audit is still the correct next step — it tells you what the actual state of the codebase is and what the correct remediation path is, regardless of how the loop got started.

If you are at week eight and do not know whether what you have built is fixable, the diagnostic audit is still the correct first step. It is faster and cheaper to know what the situation actually is than to continue operating under uncertainty. The report takes 3 to 5 working days and tells you exactly what the correct path forward is, with a scoped quote attached. Whatever that path is, knowing it is better than not knowing.

What the vibe coding hangover is not

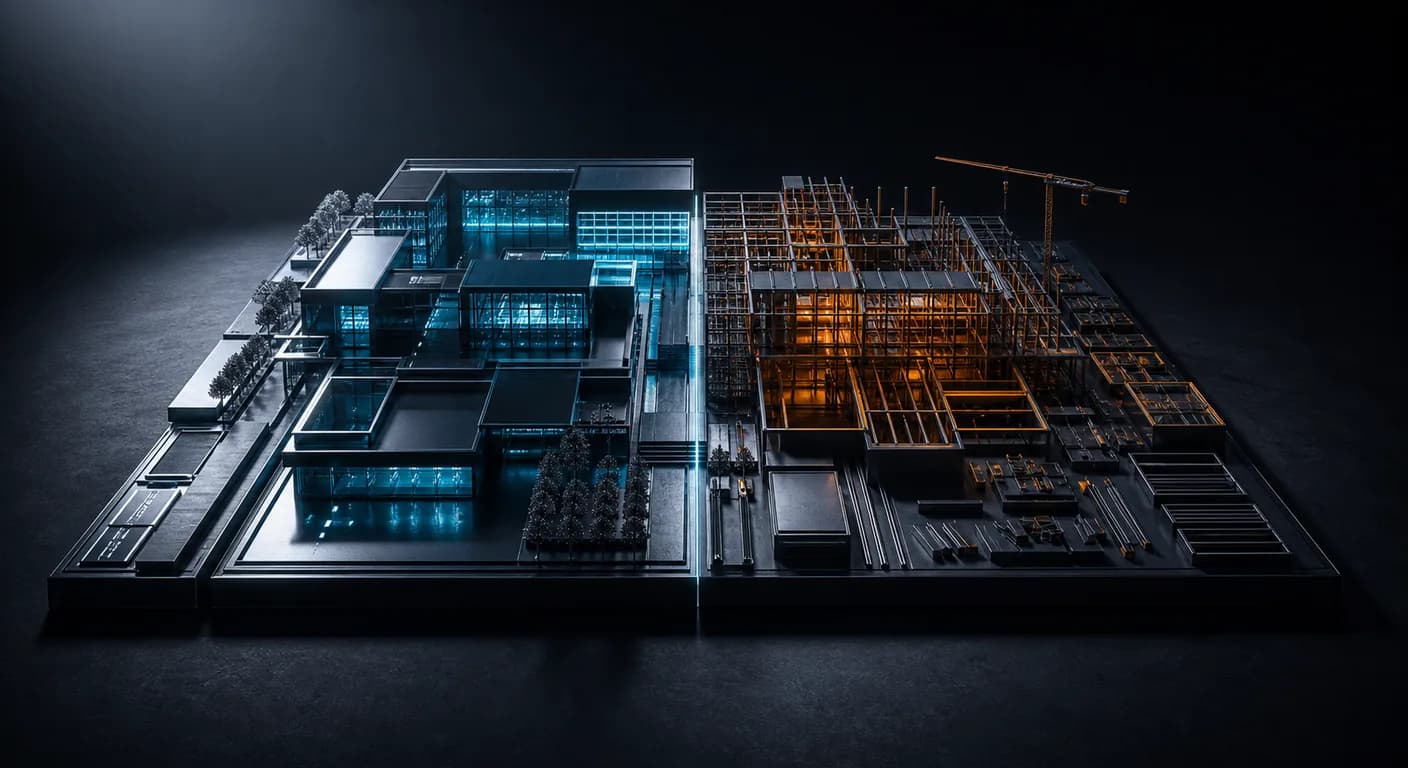

The vibe coding hangover is not evidence that vibe coding is a mistake, or that AI coding tools are unsuitable for building real applications. The tools produce genuine value — the prototype that would have taken a development agency six weeks and £30,000 now takes one founder one week and £200 in API credits. That is a real and permanent shift in what is possible.

The hangover is evidence that the prototype step and the production step require different skills, and that the tools are excellent at the first and structurally limited on the second. A qualified engineer who can assess the output, identify the gaps, and close them — using AI tools themselves where appropriate for the implementation work — is what the production step requires. The question is not whether to use AI coding tools; it is when to bring in the engineering judgement that the tools themselves cannot provide.

The AI App Production Clinic is designed for exactly that moment: after the prototype, before the production launch, with an independent engineering assessment that tells you what you are actually dealing with and what it will take to get there safely.

Frequently asked questions

- How do I know if I am in a circular fix loop?

  You are in a circular fix loop if: you have been prompting the same AI tool to fix the same category of error for more than five sessions without resolution; each fix introduces a regression in a different part of the application; you cannot predict what a change will affect; and further prompting feels like it is making the overall situation worse rather than better.

- Is it too late to get an assessment if I have already been in the fix loop for weeks?

  No. The assessment is useful at any stage. The earlier it happens, the lower the total cost, both because the technical debt is smaller and because the assessment can prevent further compounding. But an assessment at week eight is still better than discovering the issues through a security breach or investor due diligence.

- Can I use the same AI tool to assess its own output for security issues?

  The AI tool can identify some issues in the code it can read in the current session. It cannot reliably assess the security of the full deployed application, the correctness of the RLS policies relative to the intended data model, the GDPR compliance position, or the structural integrity of a codebase it has been iteratively modifying without full context. An independent review by a human engineer is the only reliable pre-production security assessment.

- What is the right moment to bring in an engineer?

  The right moment is after the prototype works and before the first real user interacts with it. If you have already passed that point, the right moment is now.