AI hallucinations and bias: a manager's guide for UK businesses

Why hallucinations and bias are process problems, not tool problems

Large language models do not retrieve facts from a database. They predict the most statistically plausible next word based on patterns in training data. This architecture produces remarkably fluent output, and it also produces confident fabrications that are indistinguishable from accurate output without independent verification. Research on commercial LLMs reports hallucination rates across models ranging from 15% to 52%. Domain-specific research on legal queries puts the rate at 58% to 88% in some scenarios. This is not a bug that a future software patch will fix. It is a property of how large language models work. Managing it requires process, not optimism.

Bias is a separate but related problem. Where hallucination produces an individual wrong answer, bias produces systematic skew across many answers, reflecting imbalances in the training data. For managers deploying AI tools in UK businesses, the two risks require different mitigations and different governance responses.

This guide is written for operations managers, team leads, and compliance officers. It is not written for engineers. The objective is to give you a working mental model of where AI tools fail, what the highest-risk use cases look like in a UK business context, and what verification workflows actually work in practice.

What a hallucination actually is

An AI hallucination is a confident, plausible-sounding output from a language model that is factually incorrect or entirely fabricated. The output looks just like a correct answer. It is grammatically fluent, internally consistent, and delivered with the same tone as accurate output. This is what makes hallucinations difficult to spot without independent verification.

Concrete examples that UK businesses encounter in practice:

- An AI invents a UK statute or regulation that does not exist, or cites a real statute with the wrong clause number.

- An AI fabricates a quote and attributes it to a named person, often a well-known executive or government figure.

- An AI generates a market size figure with false precision (for example, "£4.3 billion in 2024"), where the real figure has never been published.

- An AI invents a company name, case study, or testimonial that has no basis in any source.

- An AI describes a software feature that does not exist in the product it is summarising.

The risk is proportional to how much trust is placed in the output without verification. For internal drafting with human review, hallucinations are a nuisance that a competent reviewer catches. For client-facing documents reviewed only by the person who prompted the AI, hallucinations are a liability. Once a fabricated statistic is in a proposal, a signed report, or a published article, the business is accountable for it.

What bias means in an AI context

Bias is distinct from hallucination. Where hallucination is a single wrong answer, bias is a systematic pattern across many answers, and it reflects imbalances in the training data the model learned from.

Practical examples that show up in UK business use:

- A recruitment AI that consistently ranks candidates with Russell Group university names higher than candidates with equally strong credentials from other institutions.

- A credit-scoring AI that produces different risk scores for demographically similar applicants because of subtle correlations with postcode, name, or employment history.

- A customer service AI that uses different vocabulary or tone depending on the inferred gender or age of the person it is responding to.

- A content generation AI that defaults to male pronouns for technical roles and female pronouns for administrative roles.

For FCA-regulated firms, bias is not only an ethical issue. It is a regulatory one. FCA Consumer Duty (PRIN 2A) requires firms to deliver good outcomes for retail customers, and AI used in pricing, eligibility, fraud detection, or complaints handling must be auditable and demonstrably fair. If a UK financial services firm cannot explain how its AI made a decision, or cannot demonstrate that the decision did not rely on protected characteristics, it has a supervisory problem regardless of whether a complaint has been raised.

ISO/IEC 42001, the AI management system standard published in December 2023, is emerging as a practical framework for managing these risks. It does not prescribe specific technical tests. Instead, it requires an organisation to have a documented management system covering AI policy, risk assessment, data governance, and continual improvement. Certification demonstrates governance maturity, and much of the documentation it requires overlaps with EU AI Act obligations for firms in scope. It can therefore reduce the compliance workload, although certification does not by itself establish conformity with the Act.

The four main causes of hallucinations

Understanding why hallucinations happen helps predict where a tool is most likely to fail. There are four main causes.

- Training data cutoffs. A model has no knowledge of events after its training date. If you ask about a regulation that changed last month, the model may fabricate a version of the new rule rather than admit it does not know. Cutoff-driven hallucinations are especially common in fast-moving domains: tax, employment law, cyber security, and market data.

- Knowledge gaps. A model has limited training data in specialist domains, rare topics, or non-English sources. Ask about a niche UK regulatory sub-sector, a small-cap company, or a specialist technical term, and the model is likely to fill the gap with plausible-sounding invention rather than flag the gap.

- Conflicting information. When training data contains conflicting facts, the model may blend them. The result is an answer that sounds authoritative but does not match any single source. This is common in areas where practice has changed over time, or where different jurisdictions have different rules (UK vs EU vs US).

- Context loss. In long conversations, a model can lose track of constraints given earlier. A rule set at the start of the conversation ("do not include pricing figures") may be forgotten 20 messages later. This is a design limitation of current architectures, not a bug.

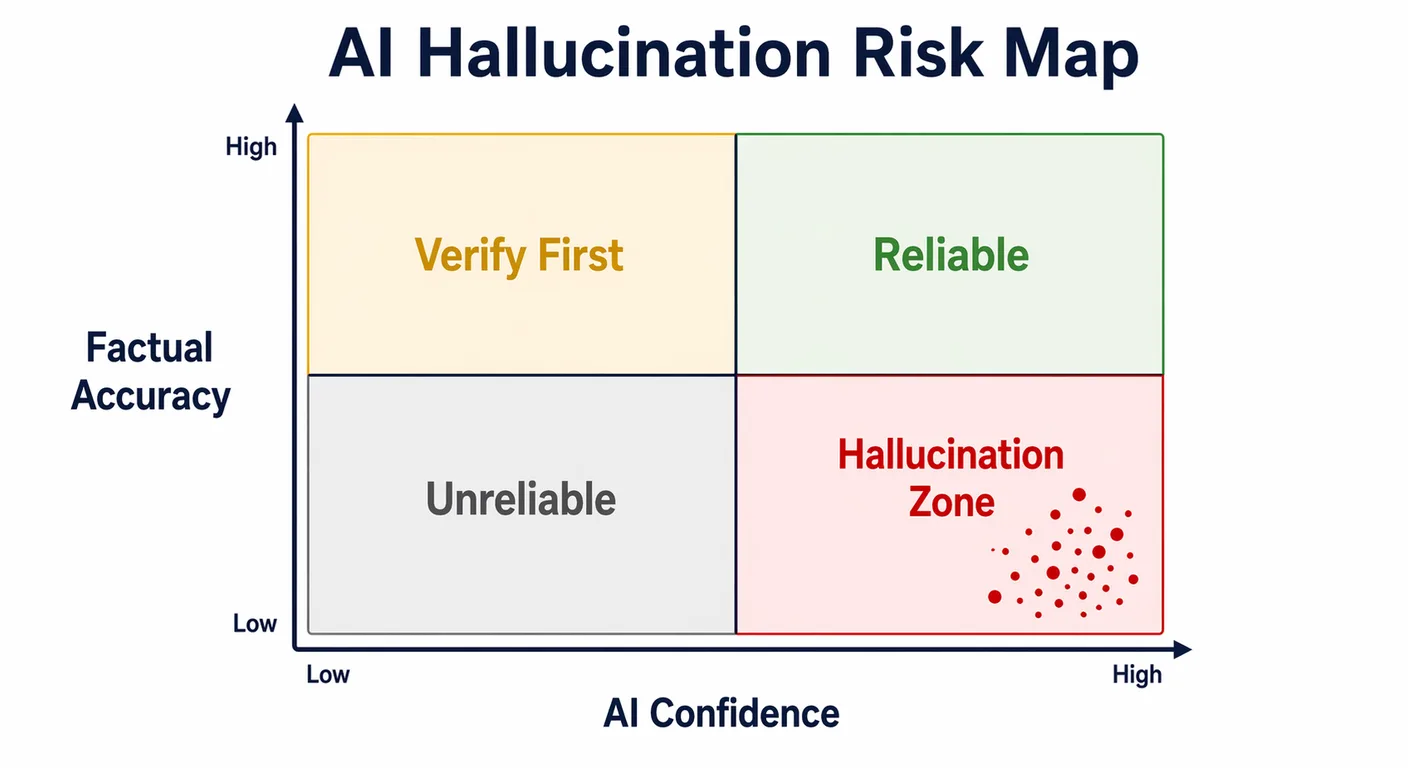

For managers, the takeaway is that different tasks carry different hallucination risk. Summarising a document the model can read in context is low risk. Asking the model to recall specific facts from its training is much higher risk. Prompting the model to write about a topic it has limited training on is highest risk.

High-risk use cases for UK businesses

Not all AI use cases carry equal risk. The table below sets out the highest-risk categories in a typical UK business and the minimum verification each requires.

| Use case | Hallucination risk | Bias risk | Minimum verification |
|---|---|---|---|
| Legal document drafting | Very high | Low | Qualified solicitor review |
| Financial reporting | High | Low | Accountant sign-off |
| Recruitment shortlisting | Low | High | HR audit of decisions |
| Client-facing content | Medium | Medium | Editor review |
| Internal meeting summaries | Low | Low | Author sense-check |
| Data entry automation | Medium | Low | Exception-based audit |

Two patterns stand out. First, legal and financial drafting are high on hallucination risk but low on bias, because they involve specific factual claims (citations, numbers, clause references) rather than judgements about people. Second, recruitment and HR use cases are low on hallucination but high on bias, because the model is reflecting demographic patterns in its training data rather than inventing facts.

The verification requirement should match the risk. A qualified solicitor should sign off AI-drafted legal advice before it reaches a client. An HR lead should audit the outcomes of AI-assisted shortlisting at regular intervals to check for demographic drift. These are not optional steps; they are the control that makes AI use defensible.
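For teams with access to their own shortlisting data, this outcome audit can be made routine. The sketch below is a minimal illustration, not a compliance tool. It assumes you hold lawfully collected equality-monitoring data as (group, shortlisted) pairs. The function names and the 0.8 review threshold are hypothetical choices made for this example.

```python
from collections import Counter

def selection_rates(records):
    """Return the shortlisting rate for each monitoring group.

    records: iterable of (group, shortlisted) pairs, where group is an
    equality-monitoring category and shortlisted is True or False.
    """
    applied = Counter()
    selected = Counter()
    for group, shortlisted in records:
        applied[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / applied[group] for group in applied}

def flag_disparities(rates, threshold=0.8):
    """Return groups whose rate is below `threshold` times the highest rate.

    The 0.8 default borrows the US "four-fifths" rule of thumb as an
    illustrative review trigger; it is not a UK legal test.
    """
    top = max(rates.values())
    if top == 0:
        return {}  # nobody was shortlisted, so there is no ratio to compare
    return {group: rate / top for group, rate in rates.items() if rate / top < threshold}

# Illustrative quarter of data: group B is shortlisted at 18% against 30% for A.
records = ([("A", True)] * 30 + [("A", False)] * 70
           + [("B", True)] * 18 + [("B", False)] * 82)
print(flag_disparities(selection_rates(records)))
```

With the illustrative data, group B is flagged at roughly 0.6 of group A's rate. A flag is a prompt for the HR lead to investigate, not a finding of discrimination. Small groups in particular produce noisy ratios.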

Mitigation: retrieval-augmented generation (RAG)

Retrieval-augmented generation is the most effective technical mitigation for hallucination in domain-specific tasks. Rather than relying on what the model learned in training, a RAG system grounds the model's response in a verified knowledge base that the business controls. When the user asks a question, the system first retrieves relevant documents from the knowledge base, then generates a response based on that retrieved content.
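The retrieve-then-generate pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. The knowledge base is a hypothetical dictionary mapping document IDs to text. Retrieval uses simple word overlap rather than the embedding search most production systems use. The call to the language model itself is left out, because it depends on the vendor.

```python
import re

def tokenise(text):
    """Lower-case word set; a crude stand-in for a real search index."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, knowledge_base, k=2):
    """Return up to k document IDs sharing the most words with the question."""
    q = tokenise(question)
    scored = [(len(q & tokenise(text)), doc_id) for doc_id, text in knowledge_base.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_grounded_prompt(question, knowledge_base, k=2):
    """Assemble a prompt that confines the model to the retrieved documents."""
    doc_ids = retrieve(question, knowledge_base, k)
    context = "\n".join(f"[{d}] {knowledge_base[d]}" for d in doc_ids)
    return (
        "Answer using only the sources below. Cite the source ID in square "
        "brackets for every factual claim. If the sources do not contain the "
        "answer, reply exactly: I don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

# Hypothetical policy snippets standing in for a governed knowledge base.
kb = {
    "HR-007": "Staff may carry over up to five days of annual leave into the next leave year.",
    "IT-002": "Laptops must be encrypted before they leave the office.",
}
print(build_grounded_prompt("How many days of annual leave can I carry over?", kb, k=1))
```

The prompt instructs the model to cite source IDs and to say it does not know when the sources are silent. If nothing is retrieved at all, the application can decline to answer without calling the model.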

For a UK business, RAG is appropriate for several common use cases:

- Internal policy Q&A. The AI answers questions about the company's own policies from the policy documents, not from its general training.

- Document review. The AI summarises or answers questions about a specific contract the user has uploaded.

- Customer support. The AI answers customer questions from a vetted knowledge base rather than from its general training.

RAG is not a panacea. Its quality depends entirely on the quality and structure of the knowledge base it retrieves from. A RAG system pointed at a disorganised shared drive with duplicate, outdated, and contradictory documents will produce disorganised, outdated, and contradictory answers. Before any RAG deployment, the underlying data must be audited, cleansed, structured, and governed. This is covered in detail in our guide on data preparation for AI.
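Part of that audit can be automated before documents are loaded into a RAG system. The sketch below assumes each document is a record with hypothetical `id`, `text`, and `last_reviewed` fields, and it treats 365 days as an illustrative review cycle. It only catches exact duplicates (after normalising case and spacing) and overdue documents. Contradictory documents still need human review.

```python
import hashlib
from datetime import date

def normalise(text):
    """Collapse case and whitespace so trivially re-saved copies match."""
    return " ".join(text.lower().split())

def audit_documents(docs, today, max_age_days=365):
    """Return (duplicates, stale) for a list of document records.

    duplicates pairs each repeated document ID with the first copy seen;
    stale lists IDs last reviewed more than max_age_days before today.
    """
    seen = {}
    duplicates = []
    stale = []
    for doc in docs:
        digest = hashlib.sha256(normalise(doc["text"]).encode("utf-8")).hexdigest()
        if digest in seen:
            duplicates.append((doc["id"], seen[digest]))
        else:
            seen[digest] = doc["id"]
        if (today - doc["last_reviewed"]).days > max_age_days:
            stale.append(doc["id"])
    return duplicates, stale

# Hypothetical shared-drive extract.
docs = [
    {"id": "POL-1", "text": "Expenses over £500 need director approval.",
     "last_reviewed": date(2024, 1, 10)},
    {"id": "POL-1-copy", "text": "Expenses over  £500 need Director approval.",
     "last_reviewed": date(2025, 3, 1)},
    {"id": "POL-2", "text": "Remote working requires a signed agreement.",
     "last_reviewed": date(2025, 2, 1)},
]
print(audit_documents(docs, date(2025, 6, 1)))  # → ([('POL-1-copy', 'POL-1')], ['POL-1'])
```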

Mitigation: prompt constraints and output review workflows

Technical mitigation works best alongside process mitigation. Several practical techniques reduce hallucination risk in general AI use:

- Permit "I don't know." Explicitly instruct the model to say it does not know if the answer is not in the provided context. Without this instruction, the model defaults to answering confidently regardless of its knowledge.

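- Require citations. Ask the model to cite the specific source document or section for each factual claim. A citation gives the reviewer something concrete to check, but it must be checked: models can invent a plausible-looking reference as easily as a plausible-looking fact, so a citation that cannot be traced to a real source is itself a warning sign.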

- Verify figures. Set a policy that any AI-generated figure used in a client document must be independently verified against a primary source before the document is sent.

- Implement a human-in-the-loop checkpoint. For any high-stakes AI output (legal, financial, regulatory, client-facing), a named human must review the content before it is used. The checkpoint is not optional, and the reviewer must be someone other than the person who prompted the AI.
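Citation and figure checks can be partly automated. The sketch below assumes the model was asked to cite hypothetical source IDs in square brackets, as in a RAG setup. It flags citations that match no supplied source, and it flags any number in the draft that does not appear verbatim in the sources the draft cites. It does not confirm that a cited source actually supports the claim; that remains the reviewer's job.

```python
import re

# Hypothetical convention: the model cites sources as [SOURCE-ID].
CITATION = re.compile(r"\[([A-Za-z0-9-]+)\]")
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")

def check_draft(draft, sources):
    """Return (unknown_citations, figures_to_verify) for an AI-generated draft.

    sources maps each source ID to its text. A figure is listed when its
    digits do not appear in any source the draft cites; that is a prompt for
    a human check, not proof the figure is wrong.
    """
    cited = set(CITATION.findall(draft))
    unknown = sorted(c for c in cited if c not in sources)
    cited_text = " ".join(sources[c] for c in sorted(cited) if c in sources)
    known_numbers = set(NUMBER.findall(cited_text))
    # Strip citation tags first so digits inside IDs are not read as figures.
    draft_numbers = NUMBER.findall(CITATION.sub("", draft))
    to_verify = [n for n in dict.fromkeys(draft_numbers) if n not in known_numbers]
    return unknown, to_verify

sources = {"HR-007": "Staff may carry over up to 5 days of annual leave."}
draft = "You can carry over 5 days [HR-007]. Unused leave is paid at 120% [HR-099]."
print(check_draft(draft, sources))  # → (['HR-099'], ['120'])
```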

None of these techniques eliminates hallucination. They make the verification step faster and more reliable. The combination of a controlled knowledge base (RAG where appropriate), prompt techniques that surface uncertainty, and a documented review workflow is what turns AI from a risk into a tool.
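Where AI-assisted drafts pass through a document or ticketing system, the review rule can be enforced in software rather than left to memory. This minimal sketch assumes each draft records a category, the person who prompted the AI, and an optional named reviewer. The category names and the `Draft` structure are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical category names for outputs that need independent sign-off.
HIGH_STAKES = {"legal", "financial", "regulatory", "client-facing"}

@dataclass
class Draft:
    category: str
    author: str                     # the person who prompted the AI
    reviewer: Optional[str] = None  # named human who signed the draft off

def can_release(draft: Draft) -> bool:
    """Return True if the draft may be used under the review rule."""
    if draft.category not in HIGH_STAKES:
        return True  # low-risk work: the author's own sense-check suffices
    # High-stakes work needs a named reviewer who is not the author.
    return draft.reviewer is not None and draft.reviewer != draft.author

print(can_release(Draft("legal", author="sam", reviewer="sam")))    # → False
print(can_release(Draft("legal", author="sam", reviewer="priya")))  # → True
```

Outside the high-stakes categories, the sketch leaves the check to the author, in line with the "author sense-check" level for low-risk internal work. A real system would also log who signed off and when, giving the documented review workflow an audit trail.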

Further reading and services

Hallucination and bias management sits alongside broader governance and literacy work. For the framework that maps staff capabilities to the right level of AI responsibility, see our 5 levels of AI literacy self-assessment. For strategic advice on AI governance, risk, and regulatory alignment, see our AI strategy consulting. For support designing and deploying RAG systems and AI-enabled workflows with the right verification controls, see our AI implementation service. For more on building AI-ready teams, see the AI training and capability building section of the Knowledge Hub.

Frequently asked questions

- Can AI hallucinations be completely eliminated?

- No. Research on commercial LLMs reports hallucination rates ranging from 15% to 52% across models, and research on legal queries has reported rates of 58% to 88% in some scenarios. Hallucination is a property of how language models work, not a bug that a future patch will eliminate. It can be substantially reduced through retrieval-augmented generation, structured prompts, and required citations, but it cannot be removed entirely. Human review remains essential for any high-stakes output.

- How do I know if an AI tool I am using produces biased outputs?

- Run structured tests. For recruitment tools, submit a set of CVs with matched credentials but varied demographic indicators (name, university, postcode) and compare the outputs. For customer-facing tools, run parallel queries with different inferred user profiles and check for differences in tone, content, or recommendation. Track outcomes over time (for example, demographic patterns in shortlisted candidates) and review at regular intervals. Vendors of enterprise AI tools should also be able to provide bias testing documentation on request.

- Is a business liable for errors in AI-generated content sent to clients?

- Yes. The business is liable for what it publishes and delivers to clients, regardless of whether the content was written by a human, AI, or both. "The AI said so" is not a defence. For FCA-regulated firms, Consumer Duty (PRIN 2A) adds an obligation to deliver good outcomes for retail customers, which in practice means AI-driven decisions affecting them need to be fair and explainable. For all UK businesses, UK GDPR applies wherever AI processes personal data, and Article 22 specifically restricts decisions based solely on automated processing that have legal or similarly significant effects on individuals.

- What is RAG and does it fix the hallucination problem?

- Retrieval-augmented generation (RAG) is a technique that grounds an AI's response in a verified knowledge base controlled by the business, rather than relying on what the model learned in training. It substantially reduces hallucination rates for domain-specific queries because the model is answering from retrieved documents rather than from memory. It does not eliminate hallucination entirely, and its quality depends entirely on the quality of the underlying knowledge base. Poor data in means poor answers out.

- Does the FCA require UK financial services firms to test AI tools for bias?

- FCA Consumer Duty (PRIN 2A) requires firms to deliver good outcomes for retail customers, which includes ensuring AI-driven decisions do not produce unfair or discriminatory results. The FCA does not prescribe specific bias testing methods, but firms using AI in pricing, eligibility, fraud detection, or complaints handling should maintain documented evidence that they have assessed and are monitoring their AI systems for bias. ISO/IEC 42001 provides one framework for organising this evidence, although the FCA does not require certification against it.
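The matched-CV test described in the answer on biased outputs can be scripted so that it is repeatable. In this sketch, the indicator values and CV text are placeholders to replace with your own. `score_cv` stands in for whatever call returns a score from the tool under test, and the stub scorer is deliberately biased to show what a finding looks like.

```python
from itertools import product
from statistics import mean

# Placeholder indicators: replace with the values you want to test.
INDICATORS = {
    "name": ["James Smith", "Aisha Khan"],
    "university": ["Russell Group university", "non-Russell Group university"],
    "postcode": ["postcode area A", "postcode area B"],
}
# Identical experience for every variant, so only the indicators differ.
BASE_CV = "10 years' payroll experience; professionally qualified; led a team of 6."

def make_variants():
    """Yield one CV per combination of indicator values."""
    keys = list(INDICATORS)
    for values in product(*(INDICATORS[k] for k in keys)):
        profile = dict(zip(keys, values))
        profile["cv"] = ", ".join(values) + ". " + BASE_CV
        yield profile

def paired_test(score_cv):
    """For each indicator, return the gap between its best- and worst-scoring value."""
    scored = [(p, score_cv(p["cv"])) for p in make_variants()]
    gaps = {}
    for key, values in INDICATORS.items():
        means = [mean(s for p, s in scored if p[key] == v) for v in values]
        gaps[key] = max(means) - min(means)
    return gaps

def stub_scorer(cv):
    """Stand-in for the tool under test, deliberately biased on university."""
    return 0.7 if "non-Russell" in cv else 0.8

print(paired_test(stub_scorer))
```

With the stub, the university gap is about 0.1, and the name and postcode gaps are zero. Generative tools vary from run to run, so with a real tool each variant should be scored several times and the averages compared.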