AI change management: why employees resist new tools and how to improve adoption in UK businesses

Why AI deployment is a change management problem, not a technology problem

AI change management is the structured process of preparing, supporting, and guiding employees through the adoption of AI tools, addressing the human and organisational barriers to effective use. It is distinct from technology deployment, which is the easy part. Research cited in industry reporting by Forbes in February 2026 suggests that up to 95% of enterprise AI pilots fail to deliver measurable business returns, and change management failure is consistently identified as the primary cause. The pattern is familiar to anyone who has been through one of these rollouts: leadership approves the tool, IT deploys it, a training session is held, and within two months usage has dropped to a handful of enthusiasts. The tool was fine. The deployment was not.

UK-specific data reinforces the pattern. The Department for Science, Innovation and Technology (February 2026) identifies the skills gap as the most commonly cited barrier to UK AI adoption, alongside "no identified need." Both are change management failures. A skills gap is a training problem. "No identified need" means the business case was not communicated in a way employees could connect to their own work. This article sets out a practical framework for UK businesses that addresses the real causes of low AI adoption rather than the symptoms.

Why employees resist AI tools

Employee resistance to AI is rarely what it appears to be on the surface. It is easy to assume people resist because they fear job loss, and while that concern exists, it is not the primary driver in most UK businesses. The real reasons are more practical and more fixable.

Four causes account for most resistance. First, employees were not involved in the tool selection and do not understand why this tool was chosen over alternatives. Second, the tool requires more effort than their current approach in the short term: learning new prompts, new workflows, and new verification habits takes time before it pays back. Third, they are uncertain what "using AI correctly" looks like and do not want to make mistakes in front of colleagues or managers, especially in regulated environments where a bad AI output could have consequences. Fourth, they tried it once, got a poor result, and concluded the tool does not work for their job.

Each cause requires a different response. Involvement-driven resistance is solved by consulting representative users before the procurement decision. Effort-driven resistance is solved by prompt libraries, templates, and worked examples that reduce the time cost of learning. Uncertainty-driven resistance is solved by explicit permission to experiment, paired with a safe space (an internal Slack channel, a weekly drop-in) to ask questions without judgement. Experience-driven resistance is solved by paired use: someone who has already succeeded with the tool sits with the sceptic and runs through a real task together, producing a usable output the sceptic can then build on.

The five-stage adoption curve for AI tools

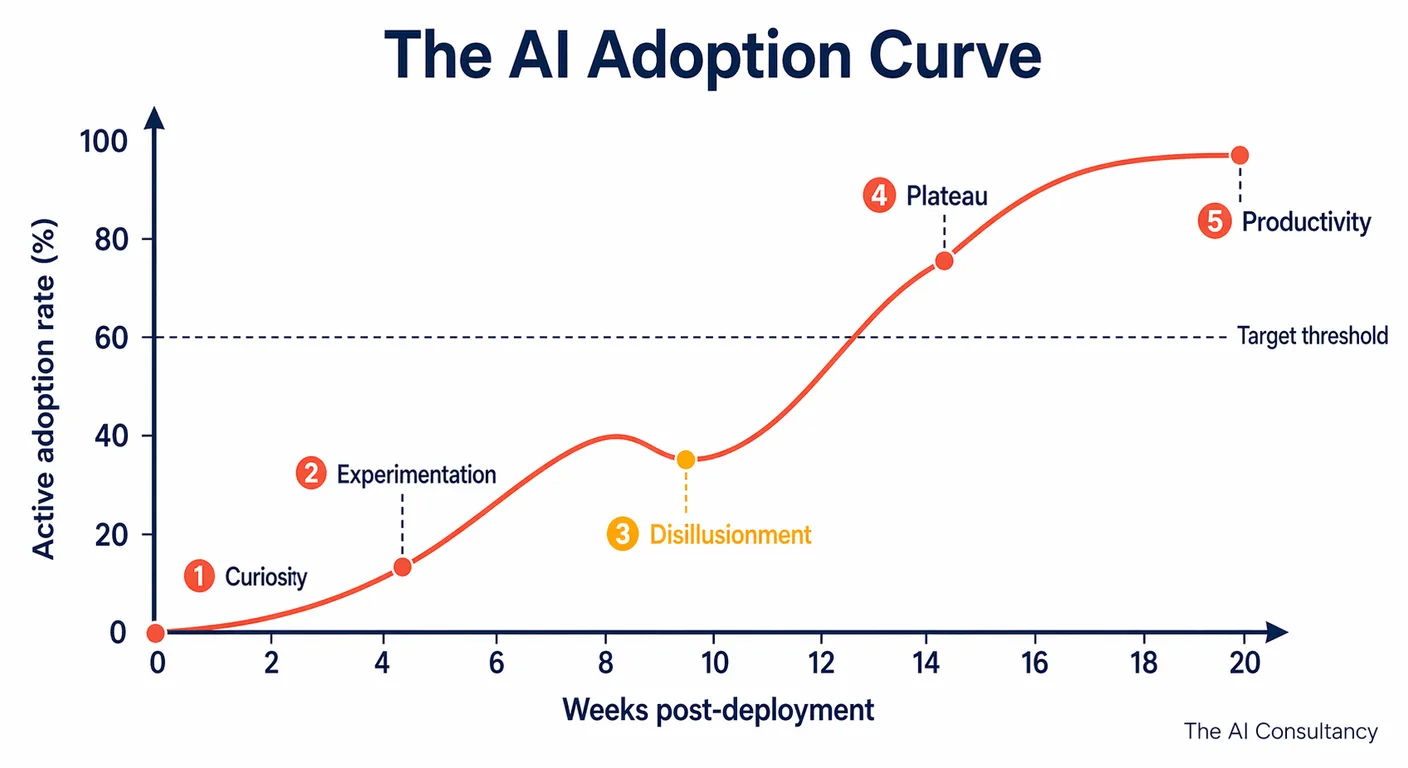

AI adoption follows a predictable five-stage curve, adapted from the Gartner hype cycle but compressed to the timescales of a single-tool rollout in a UK SME.

- Stage 1: Announcement and initial curiosity. Senior leadership announces the tool, there is a flurry of LinkedIn posts and internal Slack interest, and a small number of enthusiasts log in on day one.

- Stage 2: Experimentation by early adopters. The enthusiasts produce interesting results, share them informally with colleagues, and generate peer curiosity. Usage widens but remains voluntary and exploratory.

- Stage 3: Disillusionment. The tool does not meet the inflated expectations set by the announcement. Users hit a bad output, a confidentiality concern, or a use case it cannot handle well. Enthusiasm cools. This stage is where most deployments stall without intervention.

- Stage 4: Plateau. Usage falls to a small group of enthusiasts who have found specific, reliable use cases. The rest of the team has quietly reverted to the previous workflow. Leadership begins to question the value.

- Stage 5: Productivity. With structured intervention at stage 3 or 4, the tool enters a stable productivity phase: specific use cases, consistent results, sustainable adoption, and measurable time savings that show up in operational metrics.

Most UK businesses that deploy AI tools without change management never move past stage 4. The intervention that moves a deployment from plateau to productivity is rarely more training. It is deliberate use-case selection, prompt templates for the highest-value tasks, and a peer-support model. Understanding where your organisation is on this curve tells you which intervention is needed. A team in stage 2 needs space to experiment. A team in stage 3 needs concrete use cases and templates. A team in stage 4 needs a focused relaunch with a narrower scope and a named owner.

Communication: what to say before deployment

Pre-deployment communication is the single highest-leverage change management activity. Three questions need clear, specific answers before any AI tool is announced. These answers must come from line managers, not just IT or senior leadership, because the relationship employees have with their own manager shapes how they interpret the rollout.

Why this tool? Not "AI is important." Specific: this tool, for these tasks, chosen for these reasons over alternatives. For example: "We chose Microsoft Copilot because it is already part of our M365 licence, it works directly in the apps you already use, and the data stays within our existing Microsoft 365 boundary. We evaluated Claude Team and ChatGPT Team as alternatives; Copilot won on integration depth and cost."

How will it affect my job? Honest and role-specific. "For sales, Copilot will draft meeting follow-ups and summarise call notes. You will still own the customer relationship. For operations, Copilot will summarise incoming supplier emails and flag items that need escalation. You will still own the decisions." If time will be saved, say explicitly what that time will be reinvested in. If the tool is expected to change the job materially, say that too.

What do I need to do differently starting on day one? Concrete and narrow. Not "start using AI." Something like: "Starting Monday, draft your first response to any new lead in Copilot before editing. Use the shared prompt template in the team Slack. Log any issues in the feedback form." The sharper the day-one instruction, the faster the habit forms.

What not to say: do not describe the tool as "a way to do your job faster" if the time saved will not be reinvested in the employee. Staff interpret this phrase as a headcount-reduction signal even when no redundancy is planned. If productivity is the goal, say what the freed-up time will be spent on: deeper customer relationships, higher-value work, more training, fewer late nights.

The AI champions model

The AI champions model identifies two to four individuals per department who become the peer support network for a new tool. Champions receive early access, deeper training, and explicit permission to experiment. Their role is not to evangelise. It is to build practical knowledge and be informally available to answer peer questions as they come up.

Peer-to-peer knowledge transfer is significantly more effective than formal training for AI adoption, because the questions employees ask in the first few weeks are usually small, contextual, and embarrassing enough that they will not send them to IT or raise them in a training session. "How do I phrase this prompt so it stops giving me generic answers?" is a question asked of a colleague, not a helpdesk.

Champion selection is not about seniority or technical skill. The right champions are selected on two criteria: enthusiasm for the tool, and peer credibility in their department. A senior person with low peer credibility is a worse champion than a mid-level person whose colleagues already ask them for help with Excel. Aim for champions at Level 3 on our 5-level AI literacy framework; for more on assessing and building internal AI capability by role, see that framework.

Champions should meet briefly (30 minutes is usually enough) every two weeks across the first three months of a rollout, share what is working and what is not, build a shared prompt library, and flag emerging patterns to leadership. This meeting is the steering mechanism for the rollout. Without it, champions become isolated enthusiasts and the programme loses its peer layer.

The iterative rollout: starting small and scaling deliberately

A tightly defined pilot before a full rollout is the single biggest predictor of long-term adoption. The pilot has three components: a defined group (5 to 15 people across one or two departments), one or two specific use cases with measurable outcomes, and a 6 to 8 week duration. If any one of these is missing, the pilot produces inconclusive data.

Two things are measured in the pilot, not five or ten. First, adoption rate: what percentage of the pilot group is using the tool at least twice per week by week 6? Second, output quality: are the AI-assisted outputs better, faster, or both, compared with the pre-AI baseline for the same task? Everything else (satisfaction surveys, feature wish-lists, NPS) is noise at pilot stage and can wait.
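The adoption-rate question can be made concrete with a few lines of code. This is a minimal sketch, assuming your tool's admin reporting can export how many sessions each pilot member recorded in week 6; the export shape, user names and counts are all hypothetical, and "using the tool" is approximated as a recorded session.

```python
MIN_SESSIONS_PER_WEEK = 2  # "at least twice per week" from the pilot definition


def pilot_adoption_rate(sessions_by_user: dict[str, int], pilot_group: list[str]) -> float:
    """Fraction of the pilot group that used the tool at least twice in the measured week.

    Pilot members with no recorded sessions count as non-adopters.
    """
    if not pilot_group:
        raise ValueError("pilot group is empty")
    adopters = sum(
        1 for user in pilot_group
        if sessions_by_user.get(user, 0) >= MIN_SESSIONS_PER_WEEK
    )
    return adopters / len(pilot_group)


# Hypothetical week-6 session counts for a 10-person pilot (user10 never logged in)
pilot = [f"user{i}" for i in range(1, 11)]
week6_sessions = {"user1": 5, "user2": 2, "user3": 3, "user4": 1, "user5": 4,
                  "user6": 2, "user7": 0, "user8": 7, "user9": 1}
rate = pilot_adoption_rate(week6_sessions, pilot)
print(f"Week-6 adoption: {rate:.0%}")  # Week-6 adoption: 60%
```

Counting missing users as non-adopters is a deliberate choice: people who never log in are exactly the group the adoption rate needs to surface.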

If adoption rate is below 60% at week 6, do not scale. Diagnose first. Four diagnoses are common in UK SME pilots:

- The use case is wrong. The specific task does not benefit from AI in the way that was assumed.

- The prompt templates are too generic. Staff end up redoing the prompt work themselves and drop back to manual.

- The training was insufficient. An hour of introduction is rarely enough for most users to move past basic use.

- There is a management signal that AI use is discouraged. This is often unintentional, but a manager who never uses the tool in a team meeting signals that it is optional, and optional new tools lose out in the short term to established habits.

Only after a pilot shows 60%+ adoption and measurable output quality gains should the rollout widen to the next cohort. Widening before the pilot has stabilised typically repeats the problems at larger scale, and the second cohort sees the first cohort's ambivalence and mirrors it.
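The scale-or-diagnose rule can be written as a small decision function. This is a sketch under assumptions: it treats "measurable output quality gains" as the pilot being faster or better than baseline (supplied as two booleans from your own comparison), and the function name and check wording are illustrative rather than any standard tool.

```python
ADOPTION_GATE = 0.60  # scale only at 60%+ weekly adoption by week 6

# The four common diagnoses for a stalled pilot, phrased as checks
DIAGNOSES = [
    "Use case: does this task actually benefit from AI in the way assumed?",
    "Prompt templates: are they generic enough that staff redo the prompt work?",
    "Training: did users get more than a one-hour introduction?",
    "Management signal: do managers visibly use the tool themselves?",
]


def pilot_decision(adoption_rate: float, faster: bool, better: bool) -> tuple[str, list[str]]:
    """Return ("scale", []) when the pilot passes, else ("diagnose", checks to run)."""
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be a fraction between 0 and 1")
    quality_gain = faster or better  # "better, faster, or both" than the pre-AI baseline
    if adoption_rate >= ADOPTION_GATE and quality_gain:
        return "scale", []
    # Low adoption triggers the four diagnoses; a missing quality gain adds its own check
    checks = list(DIAGNOSES) if adoption_rate < ADOPTION_GATE else []
    if not quality_gain:
        checks.append("Quality: outputs are neither faster nor better than baseline")
    return "diagnose", checks


decision, checks = pilot_decision(adoption_rate=0.48, faster=True, better=False)
print(decision)
for check in checks:
    print(" -", check)
```

Keeping both conditions in one function makes it harder to widen the rollout on adoption numbers alone while output quality has not actually improved.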

Measuring adoption and ROI

The metrics that matter in the first 12 weeks of an AI rollout are operational rather than strategic. Five are sufficient.

- Active users per week as a percentage of licensed users. This is the headline adoption metric. Target: 60%+ by week 6, 75%+ by week 12.

- Average time from task start to completion for AI-assisted tasks, compared against the pre-AI baseline for the same task. This is where productivity gains appear.

- Error rate in AI-assisted outputs versus non-AI outputs. Checks that speed has not come at the cost of quality.

- Employee self-reported confidence on a 1 to 5 scale, surveyed at weeks 2, 6, and 12. Rising confidence is the leading indicator of sustained adoption.

- Support ticket volume related to the tool. A rise in tickets early on (weeks 2 to 4) is healthy and indicates engagement. A rise later (week 8 onwards) indicates a persistent problem that needs diagnosis.
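A weekly review of these metrics needs only a few ratios. The sketch below assumes you can pull the numbers into one record per week; the field names and sample figures are hypothetical. It reads "rising" tickets as a week-on-week increase, which is an assumption, and it leaves out self-reported confidence because that comes from the periodic survey rather than from tool logs.

```python
from dataclasses import dataclass
from typing import Optional

# Headline adoption targets from the list above: 60%+ by week 6, 75%+ by week 12
ADOPTION_TARGETS = {6: 0.60, 12: 0.75}


@dataclass
class WeeklyMetrics:
    week: int
    active_users: int             # distinct users active in the tool this week
    licensed_users: int
    ai_task_minutes: float        # average start-to-completion time, AI-assisted
    baseline_task_minutes: float  # pre-AI average for the same task
    ai_error_rate: float          # share of AI-assisted outputs with errors
    baseline_error_rate: float    # share of non-AI outputs with errors
    tickets: int                  # tool-related support tickets this week
    prev_tickets: int             # tool-related support tickets last week


def current_target(week: int) -> Optional[float]:
    """Most recent adoption target already due by this week, if any."""
    due = [target for due_week, target in ADOPTION_TARGETS.items() if week >= due_week]
    return max(due) if due else None


def ticket_signal(week: int, tickets: int, prev_tickets: int) -> str:
    if tickets <= prev_tickets:
        return "flat or falling"
    if 2 <= week <= 4:
        return "rising early: healthy engagement"
    if week >= 8:
        return "rising late: diagnose a persistent problem"
    return "rising: keep watching"  # weeks 1 and 5 to 7 are not covered by the text


def review(m: WeeklyMetrics) -> dict:
    active_share = m.active_users / m.licensed_users
    target = current_target(m.week)
    return {
        "active_share": active_share,
        "meets_target": None if target is None else active_share >= target,
        "time_saving": 1 - m.ai_task_minutes / m.baseline_task_minutes,  # fraction of baseline time saved
        "quality_held": m.ai_error_rate <= m.baseline_error_rate,
        "tickets": ticket_signal(m.week, m.tickets, m.prev_tickets),
    }


report = review(WeeklyMetrics(week=6, active_users=31, licensed_users=50,
                              ai_task_minutes=30, baseline_task_minutes=40,
                              ai_error_rate=0.04, baseline_error_rate=0.05,
                              tickets=12, prev_tickets=9))
print(report)
```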

Do not start with ROI measurement. ROI only follows sustained adoption. Measuring financial return in the first four weeks of a pilot produces misleading numbers that then get circulated to leadership and shape the wrong decisions. Once adoption is stable at 60%+ and confidence scores are rising, productivity measurements become meaningful and ROI calculations can be built from them with reasonable confidence.
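The "adoption first, ROI second" rule can be enforced in whatever calculates ROI. A minimal sketch, assuming a simple ROI definition of (value of time saved minus tool cost) divided by tool cost, with hours saved derived from the task-time comparison against baseline. "Rising confidence" is interpreted here as each survey score being higher than the previous one, which is an assumption. All figures are hypothetical.

```python
from typing import Optional, Sequence

ADOPTION_FLOOR = 0.60  # stable adoption threshold from the text


def confidence_rising(scores: Sequence[float]) -> bool:
    """True if each survey score (1 to 5 scale, in survey order) is higher than the last."""
    return len(scores) >= 2 and all(later > earlier for earlier, later in zip(scores, scores[1:]))


def roi_estimate(adoption_rate: float, confidence_scores: Sequence[float],
                 hours_saved_per_week: float, hourly_cost: float,
                 weeks: int, tool_cost: float) -> Optional[float]:
    """Simple ROI = (value of time saved - tool cost) / tool cost, or None if it is too early."""
    if adoption_rate < ADOPTION_FLOOR or not confidence_rising(confidence_scores):
        return None  # adoption not yet stable: any ROI figure would mislead
    value_of_time_saved = hours_saved_per_week * hourly_cost * weeks
    return (value_of_time_saved - tool_cost) / tool_cost


# Hypothetical: 20 hours saved per week across the team, £30/hour, 12 weeks, £4,800 tool cost
print(roi_estimate(0.65, [2.8, 3.4, 3.9], 20, 30, 12, 4800))  # 0.5, i.e. 50%
print(roi_estimate(0.45, [2.8, 3.4, 3.9], 20, 30, 12, 4800))  # None: adoption below 60%
```

Returning `None` rather than a number is the design point: a premature figure cannot be circulated to leadership if it is never produced.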

Further reading and services

AI change management is where most UK AI deployments either take root or quietly fail. The difference between a tool that delivers productivity and a tool that sits licensed and unused is almost always the change management discipline wrapped around the rollout, not the tool itself. For structured change management support tailored to your team's tools, literacy levels, and existing workflows, see our AI implementation service and our AI for SMEs service. For more on the integration, measurement, and governance side of AI deployment, see the AI implementation section of the Knowledge Hub.

Frequently asked questions

- How long does it take for a UK SME to achieve sustained AI adoption?

- Typical UK SME rollouts reach sustained adoption (60%+ active weekly use) at 10 to 16 weeks from kickoff, assuming structured change management with a pilot phase, a champions layer, and weekly adoption monitoring. Rollouts without change management typically peak at week 4 and decline from there, with active use dropping to 15 to 30% by week 12. The difference is almost entirely process, not tool choice.

- What is the most common reason AI tools go unused after deployment?

- The single most common reason is that the tool was announced and covered in training but never embedded in a specific, high-value recurring task. Staff understood what the tool did in principle but had no concrete "starting Monday, use it for X" instruction, so the habit-forming first few weeks never happened. By week 4 the tool was competing against established habits and lost. The fix is to pick one narrow, high-frequency use case per role and make that the default starting point.

- Should we make AI tool use mandatory or voluntary?

- Neither extreme works. The pragmatic pattern for UK SMEs is to make specific use cases mandatory (draft first in Copilot before editing, summarise meetings via the tool, use the approved prompt for client emails) while leaving broader exploration voluntary. Mandatory general use breeds resentment and shallow engagement. Pure voluntary use reverts to the small enthusiast group within two months. Mandatory narrow use plus voluntary wide exploration gives you habit formation without backlash.

- How do we handle employees who are genuinely concerned about AI replacing their role?

- Address it directly and specifically. General reassurance ("AI will not replace jobs") lands as marketing language. Specific reassurance based on the actual roadmap lands as truth. Describe what the tool will do in the role, what it will not do, what parts of the role remain human-owned, and what reinvestment of time is planned. If the rollout does involve role changes or headcount reduction, say so plainly and on a timeline. Employees handle bad news they trust far better than optimistic ambiguity.

- What is a reasonable AI adoption rate to aim for at 3 months post-deployment?

- For a well-managed rollout of an embedded productivity tool (Copilot, Gemini), aim for 60%+ active weekly use at week 6 and 75%+ at week 12. For a workflow automation deployment where usage is tied to specific roles rather than every licensed user, adoption is measured by process coverage rather than individual use: 80%+ of the targeted processes running through the tool at week 12. For a customer-facing chatbot, the right metric is resolution rate and escalation rate, not employee use.
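The week-12 targets differ in what they divide by, which is easy to blur in a single dashboard. A minimal sketch, assuming the deployment-type labels below (hypothetical names), that picks the right ratio and target for each; the chatbot case deliberately raises an error because the answer above gives its metrics but no numeric target.

```python
# Week-12 targets from the answer above. A customer-facing chatbot is judged on
# resolution and escalation rates, which have no numeric target in the text.
WEEK12_TARGETS = {
    "embedded_productivity": ("active weekly users / licensed users", 0.75),
    "workflow_automation": ("targeted processes running through the tool / targeted processes", 0.80),
}


def week12_check(kind: str, numerator: int, denominator: int) -> tuple[str, float, bool]:
    """Return (metric, observed rate, target met) for the given deployment type."""
    if kind == "customer_chatbot":
        raise ValueError("measure resolution and escalation rates, not employee use")
    metric, target = WEEK12_TARGETS[kind]
    rate = numerator / denominator
    return metric, rate, rate >= target


print(week12_check("workflow_automation", 9, 10))      # 9 of 10 targeted processes
print(week12_check("embedded_productivity", 70, 100))  # 70 of 100 licensed users
```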