Data preparation for AI: the four-step process UK businesses need before deployment

Why data quality matters more than the AI model

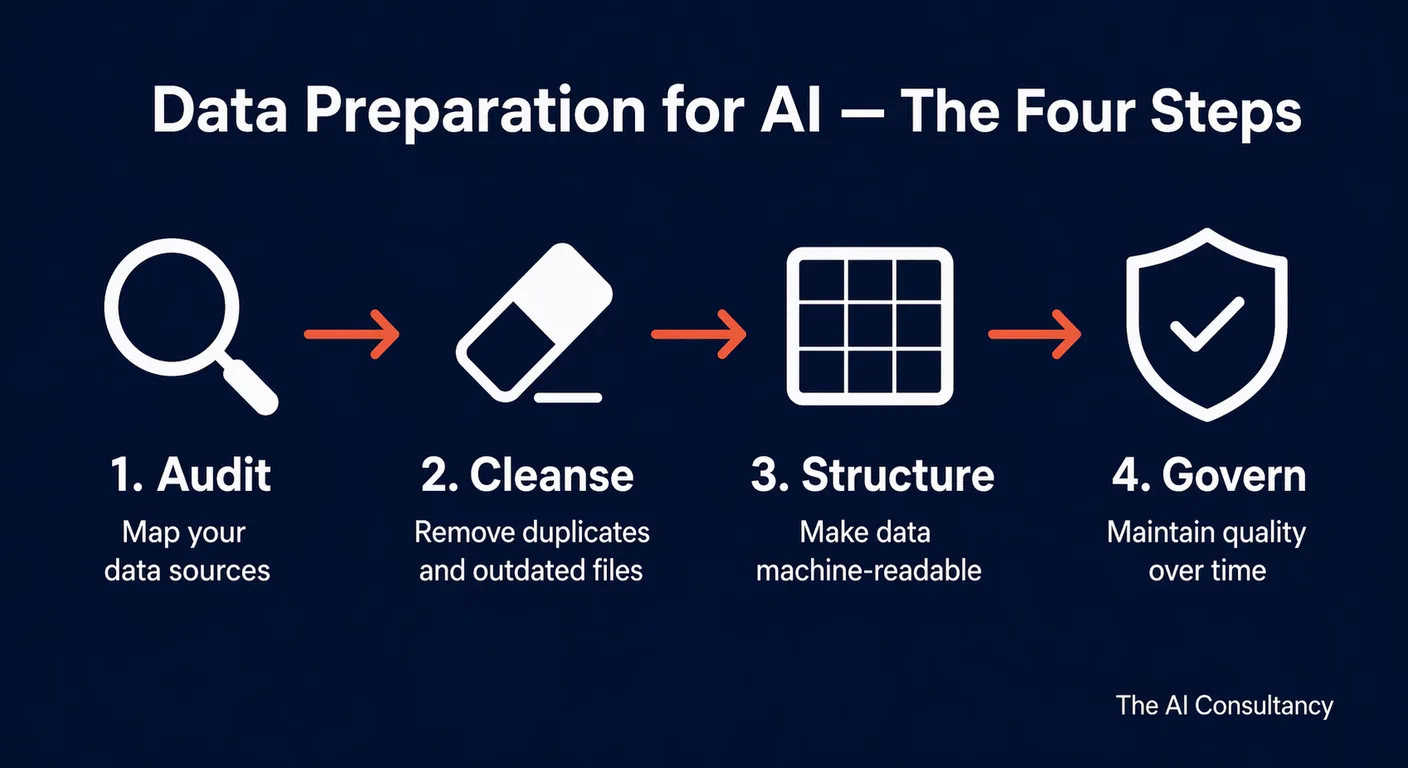

AI data preparation is the process of auditing, cleansing, structuring, and governing your organisation's data before it is ingested by, or used as the knowledge base for, an AI system. It is not a glamorous step, and it is the one most often skipped. The result is predictable: AI tools that hallucinate, contradict each other, cite outdated pricing, and erode the trust they were meant to build. Industry research published by OneAdvanced in 2026 suggests that 74% of organisations struggle to scale AI value, with data quality consistently cited as the primary obstacle. AI is not a magic reformatter of poor data. It amplifies the quality of what it receives.

Consider two UK businesses deploying the same retrieval-augmented generation (RAG) chatbot for customer service. Business A points it at a well-structured, up-to-date internal knowledge base with a clear document hierarchy, versioned policies, and a consistent naming convention. Business B points it at a shared drive accumulated over six years: duplicate policy versions, outdated pricing PDFs that were never archived, image-scanned documents that cannot be parsed, and operations notes that contradict the current service manual. Business A's chatbot answers around 80% of queries accurately and is quietly absorbed into the team's workflow. Business B's chatbot contradicts itself within a week, cites last year's pricing, and is switched off after two customer complaints. The model is identical. The chatbot code is identical. The data is the only variable.

The four steps below are not theoretical. They are the minimum required before any AI system that uses internal data goes live. The sequence matters: auditing before cleansing, cleansing before structuring, and structuring before governing. Skipping a step means revisiting it later, usually after an incident.

Step 1: Audit, what data do you have and where is it?

A data audit is a structured inventory of every source the AI system will draw on, together with an assessment of each source's recency, accuracy, format, volume, and access controls. It is the foundation of the other three steps, and it is usually the first place where the scale of the problem becomes visible.

Map every data source the AI will need. For a typical UK SME, the list usually includes the shared drive (Google Drive or SharePoint), the CRM (Salesforce, HubSpot, Dynamics), the finance system (Xero, Sage, QuickBooks), an email archive, the intranet, and a mix of PDFs and spreadsheets held in various folders. For a professional services firm, the list may extend to case management software, document management systems, and client-facing portals. For each source, answer five questions: when was it last updated, is this the current authoritative version, what format is the data in, how much of it is there, and who owns it?

The format question is often the most consequential. A 200-page manual scanned as an image PDF is invisible to most AI systems without OCR. An Excel file with merged cells, blank rows, and coloured highlighting as the only indicator of status is technically machine-readable but practically useless to an AI. A ten-year-old internal wiki with dead links and no version control contains information the AI will happily surface as current fact. Document these format issues now, because they determine which sources can be used at all and which need conversion before Step 3.

| Typical source | Common format issues | AI-readiness assessment |
|---|---|---|
| Shared drive (SharePoint, Google Drive) | Duplicate versions, inconsistent naming, mixed file types | Usable after deduplication and metadata tagging |
| CRM (Salesforce, HubSpot, Dynamics) | Free-text notes of variable quality, missing required fields | Structured fields are AI-ready; free-text needs review |
| Email archive | Personal data throughout, low signal to noise ratio | Generally exclude; extract specific threads only if essential |
| Intranet wiki | Dead links, outdated articles, no version control | Audit for currency before inclusion |
| Scanned PDFs | Not machine-readable without OCR | Convert to searchable text before inclusion |

For smaller data sets, a simple spreadsheet log built manually is sufficient. For larger environments, Google Drive's search and metadata view, SharePoint's content discovery reports, or a dedicated content inventory tool will do the job faster. The audit should produce one clear output: a ranked list of sources, each carrying one of four recommendations (use as is, clean and use, convert before use, or exclude).
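As a rough illustration of what that audit log might look like in code, the sketch below records the five audit questions for each source and derives a recommendation from them. The class, field names, format label, and decision rules are all assumptions made for the example; a real audit would set its own thresholds and would usually involve a human decision on each source.

```python
from dataclasses import dataclass
from datetime import date

# The four audit outcomes described above.
USE_AS_IS = "use as is"
CLEAN_AND_USE = "clean and use"
CONVERT_BEFORE_USE = "convert before use"
EXCLUDE = "exclude"


@dataclass
class SourceRecord:
    """One row of the audit log (hypothetical field names)."""
    name: str
    last_updated: date
    is_authoritative: bool
    data_format: str          # e.g. "docx", "scanned-pdf", "mixed"
    document_count: int
    owner: str
    contains_personal_data: bool = False


def recommend(record: SourceRecord, today: date, stale_after_days: int = 365) -> str:
    """Illustrative rules only; the order and the threshold are assumptions."""
    if record.contains_personal_data:
        return EXCLUDE
    if record.data_format == "scanned-pdf":
        return CONVERT_BEFORE_USE
    is_stale = (today - record.last_updated).days > stale_after_days
    if is_stale or not record.is_authoritative:
        return CLEAN_AND_USE
    return USE_AS_IS


def rank(records: list[SourceRecord], today: date) -> list[tuple[str, str]]:
    """Return (recommendation, source name) pairs, most usable sources first."""
    order = [USE_AS_IS, CLEAN_AND_USE, CONVERT_BEFORE_USE, EXCLUDE]
    rated = [(recommend(r, today), r.name) for r in records]
    return sorted(rated, key=lambda pair: (order.index(pair[0]), pair[1]))
```

The value of writing the rules down, even in a spreadsheet formula rather than code, is that every source is judged against the same criteria and the reasoning can be shown to someone else later.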

Step 2: Cleanse, remove what the AI should not learn from

Cleansing is the process of removing, archiving, or excluding data that would degrade the AI's output if it were ingested. It is a targeted intervention based on the audit findings, not a full spring clean of the file system. The aim is to ensure that every document the AI sees is current, correct, and authorised for this use.

Four specific actions make up most cleansing work. First, deduplicate documents: if there are two versions of the same policy, one current and one superseded, archive the old version so the AI cannot retrieve it alongside the new one. Second, archive outdated content: pricing documents, rate cards, product specifications, and regulatory notices that are no longer accurate should be moved to an archive folder the AI cannot access. Third, remove documents that contain known errors that were never formally retracted. Fourth, flag documents that contain UK GDPR personal data and exclude them from AI ingestion unless a Data Protection Impact Assessment (DPIA) has been completed for that specific use.
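The first of those actions can be partly automated. The sketch below groups files whose contents are byte-for-byte identical by comparing SHA-256 digests. The function names are hypothetical, and the approach only catches exact copies: a superseded policy that differs by a single word will not be flagged, so deciding which version is current still needs a person.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def sha256_of(data: bytes) -> str:
    """Hex digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()


def group_exact_duplicates(contents: dict[str, bytes]) -> list[list[str]]:
    """Return groups of file names whose contents are identical."""
    by_digest: dict[str, list[str]] = defaultdict(list)
    for name, data in contents.items():
        by_digest[sha256_of(data)].append(name)
    # Only digests shared by two or more files are duplicates.
    return [sorted(names) for names in by_digest.values() if len(names) > 1]


def read_folder(root: Path) -> dict[str, bytes]:
    """Load every file under root into memory; suitable for small folders only."""
    return {
        str(path.relative_to(root)): path.read_bytes()
        for path in root.rglob("*")
        if path.is_file()
    }
```

Calling `group_exact_duplicates(read_folder(Path("shared-drive-export")))` on a local export (the folder name is illustrative) would list each set of identical files, leaving the archive decision to the data owner.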

For UK businesses with EU exposure, the EU AI Act's data governance requirements in Articles 10 and 53 set a useful benchmark even when they are not directly binding. Article 10 applies to high-risk AI systems and requires that training, validation, and testing data sets are relevant, representative, free from significant errors, and complete enough for their intended purpose. Article 53 places separate obligations on providers of general-purpose AI models, including technical documentation and a public summary of the content used for training. For most UK SMEs deploying an off-the-shelf AI tool with a RAG layer, neither article applies directly, but the standard is a useful checklist: is the data relevant to the task, does it represent the full scope of queries the AI will face, has it been checked for significant errors, and is it complete enough to answer the queries it will receive?

Cleansing also has a compliance output. A documented record of which data sources were excluded, why, and when, creates the evidence trail that UK GDPR and the EU AI Act expect. Keep this record. It is what you will show an auditor or a regulator if asked.
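The record itself can be very simple. As a minimal sketch, assuming a CSV file is an acceptable format for your evidence trail, the code below appends one row per cleansing decision. The column names and the example entry are hypothetical and should be adapted to whatever your auditor or data protection lead expects.

```python
import csv
import io
from datetime import date

# Hypothetical column names for the exclusion record.
FIELDS = ["source", "decision", "reason", "decided_on", "decided_by"]


def write_header(log) -> None:
    """Write the column headings to an open, writable text file or buffer."""
    csv.DictWriter(log, fieldnames=FIELDS).writeheader()


def record_decision(log, source: str, decision: str, reason: str,
                    decided_on: date, decided_by: str) -> None:
    """Append one cleansing decision as a CSV row."""
    csv.DictWriter(log, fieldnames=FIELDS).writerow({
        "source": source,
        "decision": decision,
        "reason": reason,
        "decided_on": decided_on.isoformat(),
        "decided_by": decided_by,
    })


# Example using an in-memory buffer. In practice this would be a file,
# opened with open("exclusions.csv", "a", newline="").
log = io.StringIO()
write_header(log)
record_decision(log, "Email archive", "excluded",
                "Personal data throughout; no DPIA for this use",
                date(2026, 3, 15), "Data owner (example)")
```

A shared spreadsheet with the same columns does the same job; what matters is that the decision, the reason, the date, and the decision-maker are all captured at the time.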

Step 3: Structure, make data machine-readable and logically organised

Structuring is the process of converting surviving data into a format the AI can parse efficiently, and organising it so retrieval produces the right document for the right query. Large language models perform best on clean, well-structured text with consistent naming, clear section breaks, and meaningful metadata. Unstructured dumps of legacy content produce unstructured, unreliable output.

Four structuring actions cover most of the work:

- Convert image-based PDFs to searchable PDFs or plain text. Adobe Acrobat handles small volumes. AWS Textract, Azure Form Recognizer (since renamed Azure AI Document Intelligence), or Google Document AI handle larger batches and capture tables and forms more accurately.

- Establish a consistent document naming convention. Date, document type, subject, version. "2026-03-15_policy_acceptable-use_v3.docx" is machine-parsable in a way that "AUP final FINAL.docx" is not.

- Create a metadata layer. Document type, date, owner, topic tags, and intended audience. Metadata is what allows a RAG system to retrieve by category rather than only by keyword match.

- Break long documents into logical chunks. Sections of 500 to 1,000 words, broken at semantic boundaries rather than arbitrary character counts, produce better retrieval than feeding a single 200-page document whole to the model.

For RAG systems specifically, chunk size, overlap, and retrieval method all affect output quality in ways that are easy to underestimate. A chunk that is too small loses context. A chunk that is too large dilutes relevance. Overlap between chunks prevents a key sentence being split across two chunks and missed by retrieval. These parameters should be documented in the deployment specification, not left to the defaults of whichever RAG framework your developer picked first. Established practice in RAG implementation consistently flags knowledge-base quality as the biggest single factor in output accuracy, more important than the choice of model, the prompting strategy, or the embedding approach. For more on how structured data reduces hallucination risk, see our manager's guide to AI hallucinations and bias.
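The interaction between chunk size and overlap is easier to see in code. The following is a minimal sketch, not the behaviour of any particular RAG framework: it splits text at blank lines (treated here as the semantic boundary), packs paragraphs into chunks up to a word budget, and repeats the last paragraph of each chunk at the start of the next. The function name, the default of 800 words, and the one-paragraph overlap are assumptions chosen for illustration.

```python
def chunk_paragraphs(text: str, max_words: int = 800,
                     overlap_paragraphs: int = 1) -> list[str]:
    """Pack paragraphs into chunks of roughly max_words, with paragraph overlap.

    A chunk can exceed max_words when a single paragraph, or the carried-over
    overlap plus the next paragraph, is larger than the budget.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    current_words = 0

    for paragraph in paragraphs:
        words = len(paragraph.split())
        if current and current_words + words > max_words:
            # Close the current chunk and carry its tail into the next one.
            chunks.append("\n\n".join(current))
            current = current[-overlap_paragraphs:] if overlap_paragraphs > 0 else []
            current_words = sum(len(p.split()) for p in current)
        current.append(paragraph)
        current_words += words

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Whatever values you settle on, recording `max_words` and `overlap_paragraphs` (or your framework's equivalents) in the deployment specification makes it possible to explain later why a given answer was or was not retrieved.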

Step 4: Govern, maintain data quality over time

Governance is the ongoing discipline that keeps data preparation from being a one-off exercise that degrades within months. A data preparation project that is done once and then ignored creates a progressively less reliable AI system, because the business keeps producing new documents, updating policies, and superseding pricing, while the AI's knowledge base fossilises. Governance is the mechanism that keeps the knowledge base in step with the business.

Four governance requirements apply to any AI system using internal data. First, a named data owner for each source the AI uses. The owner is responsible for the accuracy and currency of that source and for approving its inclusion in the AI's retrieval set. Second, a defined review cycle: quarterly for high-change documents (pricing, product specifications, operational procedures), annually for stable policies (acceptable-use, IT security, HR handbook). Third, a process for archiving or removing documents when they are superseded, so the AI cannot retrieve the previous version once a new version is in place. Fourth, version control, so the AI always draws on the current authoritative version, with earlier versions preserved for audit but not for retrieval.

For UK GDPR compliance, governance extends to documenting which data sources contain personal data, confirming the lawful basis for processing that data through an AI system, and maintaining a current DPIA for any system that ingests personal data. The DPIA is not optional when AI is used for decisions affecting individuals, profiling, or large-scale processing of special category data. For most UK SMEs, the DPIA is a short internal document rather than a multi-week project, but it must be written, signed off, and reviewed annually.

The output of Step 4 is a living operational artefact, not a document filed once and forgotten: a data governance register that lists every source, its owner, its review date, its compliance status, and its inclusion status in the AI's retrieval set. This register is the thing that tells you, eighteen months after deployment, whether the AI is still reading from what you think it is reading from. When a regulator, auditor, or client asks for evidence of your AI data governance, this is the document you provide.
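A register of this kind can live in a spreadsheet, but a short sketch shows the logic it needs to support. The example below models one register entry and lists the sources in the retrieval set whose review is overdue. The class, the field names, and the day counts used for "quarterly" and "annual" are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed lengths for the two review cycles described in Step 4.
REVIEW_INTERVALS = {
    "quarterly": timedelta(days=91),
    "annual": timedelta(days=365),
}


@dataclass
class RegisterEntry:
    """One row of the data governance register (hypothetical field names)."""
    source: str
    owner: str
    review_cycle: str        # "quarterly" or "annual"
    last_reviewed: date
    compliance_status: str   # e.g. "no personal data", "DPIA current"
    in_retrieval_set: bool

    def next_review(self) -> date:
        return self.last_reviewed + REVIEW_INTERVALS[self.review_cycle]


def overdue(register: list[RegisterEntry], today: date) -> list[str]:
    """Names of sources the AI can retrieve from whose review date has passed."""
    return [
        entry.source
        for entry in register
        if entry.in_retrieval_set and entry.next_review() < today
    ]
```

Running a check like `overdue(register, date.today())` once a month and sending the result to the named owners is one lightweight way to keep the review cycle from lapsing.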

Further reading and services

Data preparation is the single highest-leverage activity in most UK AI deployments. It is also the one most often underfunded at the start of a project and most loudly regretted at the end. For a structured assessment of your current data readiness, governance posture, and AI deployment plan, see our AI readiness service. For implementation work on the tools and pipelines that sit on top of well-prepared data, see our AI implementation service. For more on the integration, measurement, and change-management side of AI deployment, see the AI implementation section of the Knowledge Hub.

Frequently asked questions

- How long does data preparation take before an AI deployment?

- For a typical UK SME with a single, focused use case, the audit and cleanse steps take two to four weeks of part-time work by one or two people. Structuring for a RAG system takes a further two to four weeks depending on document volume and format conversion needs. Governance is ongoing. For larger deployments across multiple departments, expect two to three months of preparation before go-live. Rushing preparation to start implementation faster is the single most common cause of AI projects missing their target outcomes.

- Does AI data preparation apply to RAG systems and fine-tuned models?

- Yes, to both, but in different ways. RAG systems rely on retrieval from your data at query time, so structure, freshness, and chunk design matter enormously. Fine-tuned models incorporate your data into the model's weights at training time, so accuracy, representativeness, and deduplication matter more than retrieval design. Fine-tuning is harder to correct after the fact, so preparation quality is even more critical. Most UK SMEs should use RAG before considering fine-tuning.

- What data should never be included in an AI knowledge base?

- Personal data subject to UK GDPR, unless a DPIA has been completed and the lawful basis is documented. Client-confidential information covered by NDA, unless the client has consented to its use with the specific AI tool. Superseded or archived versions of documents that have since been corrected. Content flagged as inaccurate but not yet updated. Special category data such as health, genetic, or biometric data should generally be excluded except where a specific, narrow use case has been authorised with appropriate controls. Financial records are not special category data under UK GDPR, but they are sensitive enough to warrant the same caution.

- Can we use AI to help with the data preparation process itself?

- In limited, supervised ways. AI tools are useful for suggesting consistent naming conventions, drafting metadata tags, summarising long documents into chunked sections, and flagging obvious duplicates. They are not reliable for deciding which documents are authoritative, which contain personal data, or which are superseded. Those decisions need a human with domain knowledge and the authority to make them. Treat AI as an assistant in preparation, not a replacement for the judgment of the data owner.

- What is a DPIA and when is it required for AI data use?

- A Data Protection Impact Assessment is a structured review, required under UK GDPR, of any processing that is likely to result in a high risk to individuals' rights and freedoms. For AI use, a DPIA is required when the system makes automated decisions with significant effects, profiles individuals at scale, processes special category data, or introduces a new technology to your processing activities. Most custom AI deployments, and many off-the-shelf ones with internal data, trigger the requirement. The ICO publishes a free DPIA template that most UK SMEs can adapt.