RAG for UK SMEs: a practical 2026 implementation guide

What is retrieval-augmented generation, in plain English?

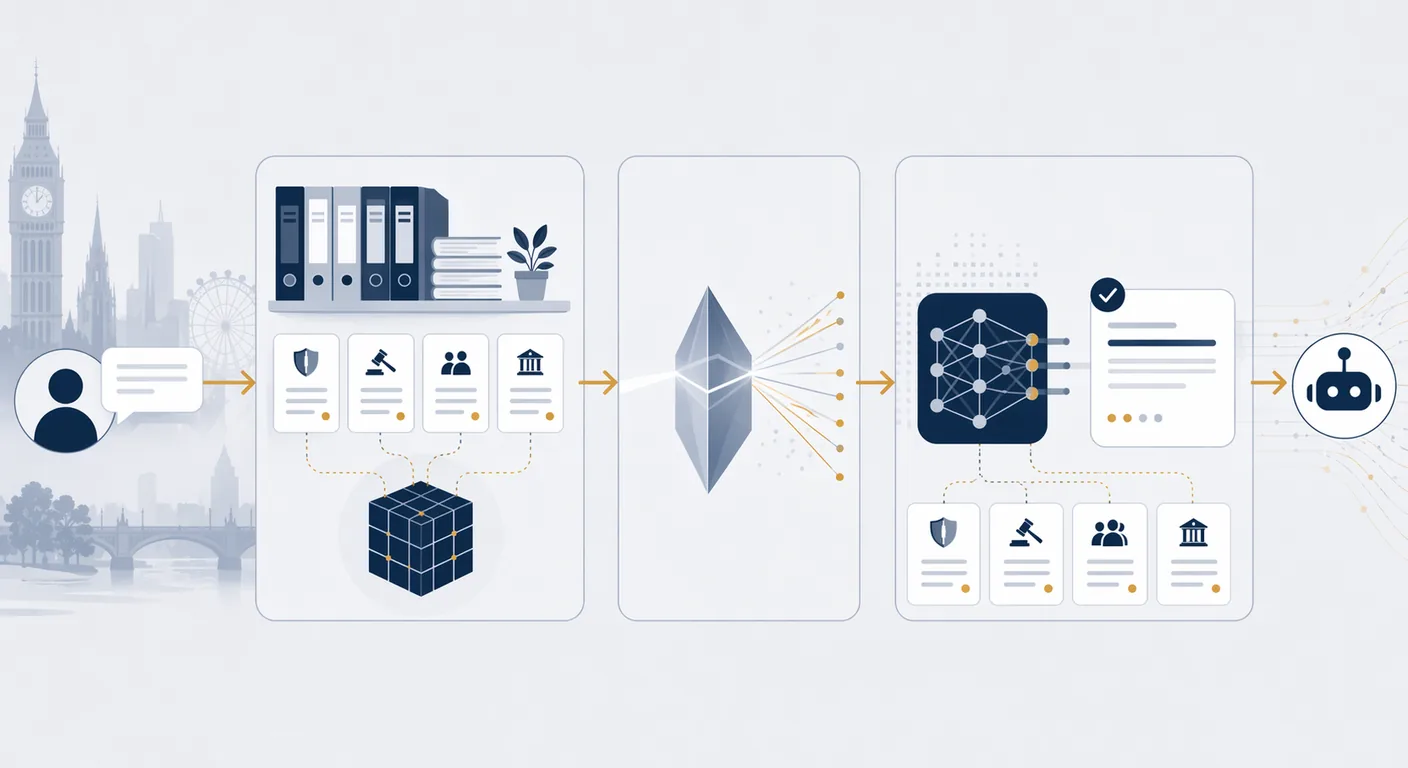

Retrieval-augmented generation (RAG) is a pattern where a large language model is given access to a business's own documents at query time, rather than having those documents baked into the model. When a user asks a question, the system first searches an indexed copy of the business's content, pulls the most relevant passages, and hands them to the model as context. The model answers using those passages and cites them. RAG is the default way UK SMEs give AI tools access to their policies, product information, standard operating procedures, and case notes without pushing that content to a third party's training corpus.

A 2025 benchmark from Arcee and others found that for most enterprise question-answering use cases, a well-built RAG system on a mid-sized model outperforms a larger model used without retrieval. Cost follows the same pattern: RAG on a capable Sonnet-class or GPT-4.1 mini-class model is typically cheaper per query than a frontier model used alone, often by a factor of three to five.

Why RAG and not fine-tuning or a long prompt?

Three techniques give a model access to your content: stuffing it into the prompt, fine-tuning the model on it, or retrieving it at query time with RAG. Each has a natural fit.

Prompt stuffing is right when the content is small, stable, and universally relevant (a style guide, a persona, a five-page policy). It breaks when the corpus grows past the context window or when different queries need different slices of the content. Fine-tuning is right when the task is about style, tone, or a narrow format the model does not produce natively, not about facts. Fine-tuning a model on facts typically underperforms RAG and is harder to update. RAG is right when the corpus is larger than a prompt can hold, changes over time, and is referenced selectively by different queries.

For most UK SMEs asking "how do we let our team ask our policies, product pages, and case notes?" the answer is RAG, not fine-tuning. Our AI tech stack guide for UK SMEs covers the broader tooling context.

UK GDPR and data residency considerations

Any RAG system built for a UK business handles personal data the moment a single document in the index names a person. That brings the entire pipeline (the vector store, the embedding model, the language model, and any logs) under UK GDPR. The Information Commissioner's Office guidance on generative AI, updated in 2024 and supplemented through 2025, sets out five expectations that apply directly to RAG: a lawful basis for processing, data minimisation at ingestion, transparency with data subjects, a documented retention policy, and appropriate controls on international transfers.

The practical implication is residency. If the embedding model, vector store, or language model sits outside the UK or adequate jurisdictions, an international transfer mechanism (UK International Data Transfer Agreement or the Addendum to the EU SCCs) is required, alongside a transfer risk assessment. Most enterprise plans from the major vendors offer UK or EU regional processing; the free and consumer tiers usually do not. A signed data processing agreement with a no-training clause is non-negotiable for any vendor in the pipeline.

For the wider posture around AI and data protection, see our AI acceptable use policy guide.

Choosing the embedding model

The embedding model is the component that converts each document chunk and each query into a vector so similarity search can work. The default UK SME choices in April 2026 are OpenAI text-embedding-3-large or text-embedding-3-small, Cohere embed-english-v3 or embed-multilingual-v3, Google Vertex text-embedding-005, and the open-source alternatives such as BGE-M3 or E5-large-v2 for self-hosted deployments.

Three criteria decide. First, residency: if the content is UK-only and you do not want it leaving UK or EU jurisdictions at any point, a self-hosted open model or a regional enterprise plan is mandatory. Second, multilingual coverage: if the corpus includes documents in more than one language, a multilingual embedding model is worth the small quality trade-off. Third, cost at scale: for a typical SME corpus of 50,000 to 500,000 chunks, any of the managed APIs produces an embedding bill in the £50 to £400 range for the initial index, with pennies per query thereafter; the dominant cost at scale is the language model, not the embeddings.

Vendor cost comparison (April 2026)

The table below compares four common paths to a working RAG system for a UK SME. Prices are taken from published pricing pages as listed in April 2026 and exclude the language model cost, which sits on top regardless of the retrieval backend. Refresh this table quarterly; vendor prices move, and so do their regional options.

| Option | Retrieval component | Typical cost (SME, April 2026) | UK / EU residency | Best for |

|---|---|---|---|---|

| Google Vertex AI Search | Managed search + vector | From around £1.50 per 1,000 queries, plus storage | Regional options available | Businesses already on Google Cloud |

| OpenAI Vector Store (file_search) | Managed vector store inside the Assistants API | Around £0.08 per GB per day of storage, plus query fees | EU data residency on enterprise plan | Teams standardised on OpenAI |

| Azure AI Search | Managed search with vector support | Basic tier from around £60 per month; standard tiers scale by replicas | UK South and UK West regions | Microsoft shops with strict UK residency |

| Self-hosted pgvector on Supabase or Cloud SQL | Postgres with vector extension | From around £20 per month at SME scale | Full control, UK region available | Teams with engineering capacity who want full data control |

The pattern we see most often at SME scale is pgvector on Supabase for the first pilot (cheap, UK-resident, no lock-in) and a migration to Azure AI Search or Vertex AI Search only if query volume and quality requirements justify the jump. Starting with a managed service is defensible too, but budget a month's migration work if the vendor's terms change.

A 6-week implementation plan

A realistic RAG pilot for a UK SME with a defined corpus (one or two document sources, a few hundred to a few thousand documents) runs in six weeks with a single developer and a part-time subject matter expert.

- Week 1: scope and source. Define two to three query types the pilot has to answer well. Identify the document sources, their current locations, and their owners. Draft the data classification rules: what is included, what is not, and why.

- Week 2: ingestion. Build the ingestion pipeline: fetch, clean, chunk (usually 400 to 800 tokens per chunk with 50 to 100 token overlap), embed, and store. Capture document metadata: source, owner, last-modified date, access level.

- Week 3: retrieval and prompting. Wire up the retrieval layer. Write the system prompt that instructs the model to answer only from retrieved passages and to cite sources. Add a "no answer" fallback so the model refuses when retrieval returns nothing relevant.

- Week 4: evaluation harness. Build a set of 50 to 100 held-out questions with correct answers and acceptable sources. Score the system's answers automatically where possible and manually where judgement is required. This is the step most teams skip and most teams regret skipping.

- Week 5: access and audit. Wire in authentication so each user only retrieves documents they are authorised to see. Enable query logging for audit and quality review. Add the UK GDPR-required transparency message where the interface is user-facing.

- Week 6: pilot and iterate. Deploy to a defined pilot group (typically 10 to 20 users), capture feedback, and iterate on chunking, prompting, and the "no answer" threshold. Do not scale beyond the pilot group until the evaluation harness shows stable quality for two consecutive weeks.

When NOT to use RAG

Three situations where RAG is the wrong default. First, if the use case is about style or format rather than facts, fine-tuning or a carefully written system prompt is usually better. Second, if the corpus is small and stable (under a few thousand tokens, changes rarely), simply pasting it into the prompt is cheaper, simpler, and more reliable than running a retrieval layer. Third, if the answer requires precise arithmetic, regulated calculations, or deterministic policy enforcement, a code-executing tool call (or a dedicated application with business logic) outperforms any retrieval-based approach and is auditable in a way LLM outputs are not.

A common anti-pattern is to build RAG over a set of documents that should really be a database. If your source of truth is structured data (product catalogue, prices, availability, transaction history), expose it via a tool the agent can call, not as text chunks to retrieve. Our guide to integrating AI with legacy systems covers the structured-data path in more detail.

How to evaluate a RAG system's quality

Quality in a RAG system is not a single number. Three measures together give a defensible picture, and a UK business should capture each before calling a pilot successful.

Retrieval quality. For each question in the evaluation set, did the retriever return at least one passage containing the correct answer? Measured as recall at k (typically k equals 5). A retriever at 85%+ recall at 5 is serviceable; under 70% and the language model cannot compensate regardless of how capable it is.

Answer faithfulness. Of the answers produced, how many are supported by the passages cited? Measured by sampling 50 to 100 answers and manually scoring each. A faithful answer may still be wrong if retrieval missed the right source, but an unfaithful answer (contradicts its own citations or fabricates a source) is the more serious failure and should drive system prompt or model changes.

Answer relevance. Does the answer address what the user actually asked? A faithful, correctly-retrieved answer can still miss the point if the model interpreted the question narrowly or broadly. This is where a business domain expert's judgement matters, and where an internal scoring rubric is more useful than any automated metric.

Teams that track all three over the six-week pilot usually identify the binding constraint early. Retrieval quality is most often improved by better chunking, re-ranking, or metadata filters. Answer faithfulness is improved by the system prompt and by switching to a more instruction-following model. Relevance is improved by conversational context handling and by giving the model room to ask clarifying questions when a query is ambiguous.

Security posture for a production RAG system

Four controls are non-negotiable for any UK business running RAG in production. Role-based access at retrieval time: each query should only return documents the user is permitted to see, enforced in the retrieval layer rather than the UI. Source tracking in every answer: the user sees which documents were consulted, which is both a UX improvement and a UK GDPR transparency control. Prompt injection defences: retrieved passages can contain adversarial instructions, so the system prompt must treat retrieved content as untrusted and ignore imperative instructions inside it. Query and output logging with defined retention: logs are part of the processing record and are subject to retention rules just like the source documents.

For sector-specific considerations, see our professional services industry page and our financial services industry page.

Where to start

Most UK SMEs should start with a narrow RAG pilot on a single, well-defined corpus (HR policies, product knowledge, a specific client engagement archive) before generalising. Six weeks to a working pilot is realistic; a further four weeks of iteration is typical before the system is production-ready. For structured readiness assessment before a build, see our AI readiness service. For delivery, see our AI implementation service. For broader guidance, the AI implementation section of the Knowledge Hub collects related articles on tooling, data preparation, and change management.

Frequently asked questions

- What is RAG in one sentence?

- RAG is a pattern where a large language model is given access to your own documents at query time: the system retrieves the most relevant passages, hands them to the model as context, and the model answers using those passages rather than its baked-in training data. It is the standard way UK SMEs let AI tools work with their internal content.

- How much does a RAG system cost to run?

- For a UK SME-scale corpus in April 2026, the retrieval infrastructure is usually the smaller cost. Self-hosted pgvector on Supabase or Cloud SQL starts around £20 per month. Managed options such as Azure AI Search, Google Vertex AI Search, and OpenAI Vector Store scale from around £60 per month upwards. The dominant ongoing cost is typically the language model itself, which depends on query volume and model choice.

- Does a RAG system keep our data in the UK?

- Only if you configure it that way. The vector store, the embedding model, and the language model can each sit in different regions. For UK-only residency, choose a UK region on Azure AI Search (UK South or UK West), a UK or EU region on Google Vertex, or a self-hosted stack on a UK-resident host. A data processing agreement with a no-training clause from every vendor in the pipeline is required, along with a documented international transfer mechanism if any component sits outside adequate jurisdictions.

- How long does it take to build a production RAG system?

- A working pilot on a defined corpus usually takes six weeks with one developer and a part-time subject matter expert. A further four weeks of evaluation and iteration is typical before the system is production-ready. Teams that skip the evaluation harness (week 4) usually spend that time later in rework when early users hit quality problems.

- What data works best in a RAG system?

- Text content that is reasonably well-structured and kept up to date: policies, product documentation, standard operating procedures, support articles, case study write-ups, and internal wikis. RAG struggles with heavy tabular data (use a database and tool calls instead), with scanned PDFs that have not been OCR'd, and with heterogeneous archives where the same question has contradictory answers across documents. Clean the source before you index it; quality at ingestion is the single biggest lever on answer quality.

- When is RAG the wrong choice?

- When the task is about style or format rather than facts (fine-tuning or prompting wins), when the corpus is small and stable enough to fit in a prompt (prompt stuffing wins), or when the answer needs precise arithmetic or deterministic policy enforcement (a dedicated application with business logic wins). RAG is also the wrong default when your source of truth is structured data; expose that data as a tool the AI can call rather than as text to retrieve.